How to Build a Sales Call Prep Agent with Granola, Attio, and Google Calendar

TL;DR

- Using Scalekit's Agent Auth solution, we build a sales call prep agent that polls Google Calendar every 15 minutes, pulls prior meeting notes from Granola, retrieves active deals from Attio, and Slack DMs a structured 1-page prep brief to the AE 15 minutes before the call.

- Connecting four services (Google Calendar, Granola MCP, Attio, Slack) in one agent normally means managing four separate OAuth flows, token refresh cycles, and failure modes, all before writing a single line of business logic.

- This multi-auth complexity is what makes the agent non-trivial to build. Scalekit's Agent Auth connectors remove it by exposing a single execute_tool() interface across all four services, with no token management and no OAuth refresh logic in your code.

In this guide, you will build an agent that automates the sales prep process for AEs. The agent removes the need to jump between tools to manually gather context before every call. It automatically checks Google Calendar for upcoming meetings, pulls prior meeting history from Granola, retrieves deal context from Attio, and delivers a structured prep brief to the AE via Slack 15 minutes before the call.

If you have built multi-service agents, you know that every agent touching more than one service runs into the same wall: authentication. Before you can read a calendar event, query Granola, look up a deal, or send a Slack DM, you need four separate auth implementations, each with its own token format, refresh schedule, and silent failure mode. Most agent projects stall out here.

This guide builds a fully operational call prep agent and shows exactly how Scalekit's connector layer lets you skip the auth plumbing entirely. Scalekit connects Google Calendar, Granola, Attio, and Slack through a single interface, so everything runs without managing multiple auth flows.

What Agent Engineers Need to Know

This agent reconstructs call context from three independent data sources that have no native connection to each other:

- Granola MCP holds prior meeting notes and transcripts, queryable by attendee email or company name. The MCP protocol adds a layer on top of standard OAuth: dynamic client registration, PKCE, per-user token isolation. Notes are scoped to meetings the authenticated user personally recorded; shared notes from colleagues are not accessible.

- Attio holds the deal record: stage, value, close date, last activity. Attio requires you to register your own OAuth app (there is no managed app fallback), exposes fine-grained scopes across 12+ resource types, and returns attribute values as arrays of typed objects. Code that assumes a flat record structure fails silently.

- Google Calendar provides the trigger. The agent picks out external meetings on the primary calendar by comparing attendee email domains. Google OAuth adds its own complexity: multi-day app verification, invalid_grant failures from five distinct token invalidation conditions, and RFC3339 formatting requirements that fail silently when violated.

Managing all three independently means building auth infrastructure before writing any pipeline logic. That's the part this guide solves for you.

How the Call Prep Flow Works Behind the Scenes

Once set up, the agent runs the same call prep flow for every upcoming meeting. Here is how context moves from a calendar event to a Slack brief:

- Calendar: Checks Google Calendar for external meetings scheduled in the next 30 minutes and filters out internal events by attendee domain.

- Granola: Queries past meetings using the attendee's email and company name, retrieves recent notes, and fetches transcripts for the most relevant conversations.

- Attio: Looks up the associated deal using email or company domain and pulls stage, value, and recent activity.

- LLM: Combines meeting details, notes, transcripts, and deal data to generate a structured prep brief.

- Slack: Sends the final brief directly to the AE as a DM, including meeting details and key context.

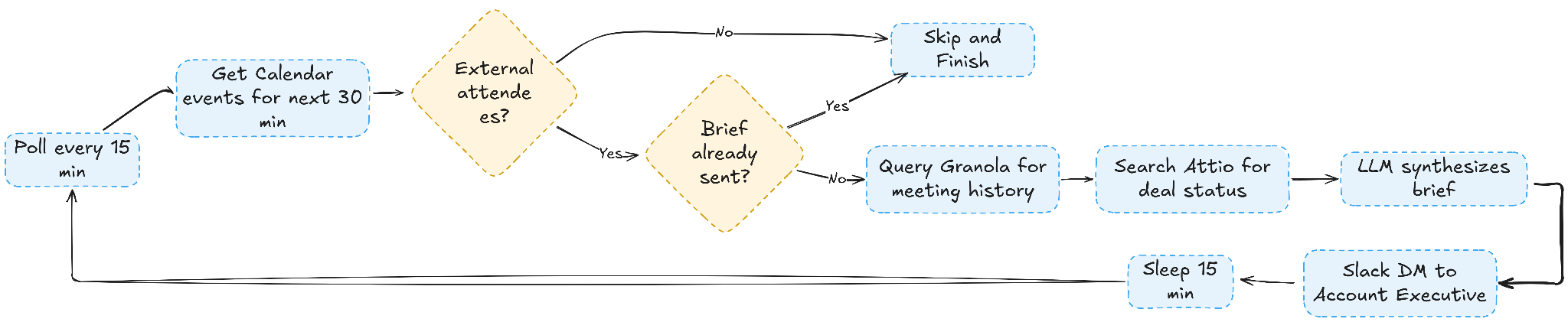

To make this more concrete, the next two diagrams break down how the agent decides when to generate a brief and how each service is called during a single run.

Agent Decision Flow

The diagram below shows how the agent determines whether a meeting qualifies for a prep brief, from calendar checks through deduplication and data retrieval to final delivery.

How Each Service Is Called

This sequence diagram shows how each service is invoked during a single run, from the initial auth check to the final Slack message being sent.

What the AE Gets Before Every Call

With this agent, the AE starts every call with a detailed brief built from everything Granola and Attio already hold about the account, without spending time on manual lookups. Every section in the brief is grounded in actual data from those two sources, so the AE is not relying on guesswork or incomplete notes. Here's an example:

Prerequisites

Before starting, confirm the following are in place:

- A Scalekit account (free tier is sufficient) and Claude Code installed

- Google Calendar with at least one upcoming external meeting

- Granola installed, with prior meeting notes recorded

- Attio with at least one deal linked to a person record

- A Slack workspace where you can receive DMs

- An OpenRouter API key with access to a paid (non-free) model such as openai/gpt-4o-mini

- Python 3.11 or newer

Project Setup

The project is one file: run_flow.py. Clone the repo and install dependencies:

Create a .env file in the project directory. All values come from your Scalekit dashboard and connected services:

Note: SLACK_CONNECTOR and GRANOLA_CONNECTOR must match the connector names in your Scalekit dashboard exactly. The connector name also sets the tool name prefix. A connector named granolamcp exposes tools named granolamcp_query_meetings and granolamcp_get_meeting_transcript.
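A minimal sketch of loading and checking these settings at startup, using only the standard library. SCALEKIT_ENV_URL, SCALEKIT_CLIENT_ID, SCALEKIT_CLIENT_SECRET, SLACK_CONNECTOR, GRANOLA_CONNECTOR, and SLACK_DM_USER come from this guide. OPENROUTER_API_KEY is an assumed name, and parse_env() is a simplified stand-in for a library such as python-dotenv.

```python
import os
from pathlib import Path

# Variables referenced in this guide; OPENROUTER_API_KEY is an assumed name.
REQUIRED_VARS = (
    "SCALEKIT_ENV_URL",
    "SCALEKIT_CLIENT_ID",
    "SCALEKIT_CLIENT_SECRET",
    "SLACK_CONNECTOR",
    "GRANOLA_CONNECTOR",
    "SLACK_DM_USER",
    "OPENROUTER_API_KEY",
)


def parse_env(text: str) -> dict[str, str]:
    """Parse simple KEY=VALUE lines, skipping blank lines, comments, and malformed lines."""
    values: dict[str, str] = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        values[key.strip()] = value.strip().strip('"').strip("'")
    return values


def load_settings(path: str = ".env") -> dict[str, str]:
    """Let real environment variables override .env values, then check required keys."""
    env_file = Path(path)
    file_values = parse_env(env_file.read_text()) if env_file.exists() else {}
    settings = {**file_values, **os.environ}
    missing = [name for name in REQUIRED_VARS if not settings.get(name)]
    if missing:
        raise RuntimeError(f"Missing settings: {', '.join(missing)}")
    return settings
```

Failing fast here surfaces a mistyped connector name or missing key before any connector is called.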

Why Authentication Is the Hardest Part of Multi-Tool Automation

This workflow connects four separate authentication systems. Each has its own token format, refresh schedule, and failure mode.

- Google Calendar uses OAuth 2.0 with tokens that expire and must be refreshed. Handling the refresh cycle and storing tokens securely requires significant plumbing before you can read a single calendar event.

- Granola MCP uses the MCP protocol, which requires session management on top of standard auth. It is not a simple REST API with a static key.

- Attio has its own OAuth token lifecycle that runs independently of Google's, so you end up managing two separate refresh cycles in parallel.

- Slack requires bot token scopes to be configured correctly in the Slack App dashboard. A misconfigured scope fails silently or with a cryptic error.

Managing all four independently means building token storage, refresh logic, scope validation, and error handling for each service before writing a single line of pipeline logic. This is where most agent projects stall out.

How Scalekit Handles Auth Across All Four Connectors

Scalekit provides a unified authentication layer for agent workflows that operate across multiple services. It removes credential management from the application layer entirely.

Rather than implementing four separate auth flows, Scalekit gives you three concrete advantages:

- One setup, persistent connections. Connectors stay active across all pipeline runs. The first run triggers OAuth. Every subsequent run proceeds directly to ACTIVE.

- One call pattern across all services. Every API call goes through the same interface, connect.execute_tool(), regardless of which connector it targets.

- Zero token management. Token refresh, scope management, and connection state are handled automatically inside Scalekit. The agent never hits a stale credential mid-run.

How to Set Up Your Connectors in Scalekit

Set up all four connectors before writing any code so the pipeline can be tested end-to-end from the very first run.

Step 1: Create Your Scalekit Account

Go to scalekit.com and create a free account. Create a new workspace for this project. Your workspace generates a SCALEKIT_ENV_URL, SCALEKIT_CLIENT_ID, and SCALEKIT_CLIENT_SECRET. Copy these to your .env file.

Step 2: Add the Google Calendar Connector

In the Scalekit dashboard, navigate to Agent Auth > Connections, search for Google Calendar, and add it. Select the calendar.readonly scope for listing events.

Step 3: Add the Granola MCP Connector

Add the Granola MCP connector and configure it with your Granola instance. Scalekit handles the MCP protocol negotiation and session state, so your code only needs a single execute_tool() call.

Step 4: Add the Attio Connector

Add Attio with scopes for reading people and deals. Scalekit manages the Attio OAuth token lifecycle alongside Google's, with a single consistent interface.

Step 5: Add the Slack Connector

Add Slack with the chat:write scope to enable sending DMs.

Setting Up Auth with Claude Code

Scalekit provides a Claude Code plugin that automatically bootstraps the full auth scaffold, with no manual OAuth flows, token refresh logic, or scope management.

Run these two commands in your Claude Code terminal to install the Scalekit authentication plugin:

Once installed, give Claude Code the following prompt:

Claude Code generates the client initialization, the CONNECTOR_USERS mapping, and the ensure_authorized() and tool() helpers that the rest of the pipeline calls.

Client and connector map: this block initializes the Scalekit client with your workspace credentials and maps each service to the identity it should act on behalf of. Every execute_tool() call downstream uses this map to route requests to the right connected account.

The tool() helper is the single call pattern used across every connector in the pipeline. It wraps execute_tool() with the connector name, user identity, and input parameters so every service interaction follows the same three-line structure regardless of which API is being called.
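Below is a minimal sketch of the connector map and a tool() helper. It is not the code Claude Code generates. The client is typed against a hypothetical execute_tool(tool_name=..., identifier=..., tool_input=...) signature, so check the Scalekit SDK reference for the real parameter names. The connector is resolved from the tool name prefix, following the naming rule in the Note under Project Setup.

```python
from typing import Any, Protocol


class ToolClient(Protocol):
    """Hypothetical shape of the Scalekit connect client assumed by this sketch."""

    def execute_tool(
        self, *, tool_name: str, identifier: str, tool_input: dict[str, Any]
    ) -> Any: ...


# Connector name -> identity the connector acts for (placeholder values).
CONNECTOR_USERS: dict[str, str] = {
    "googlecalendar": "ae@example.com",
    "granolamcp": "ae@example.com",
    "attio": "ae@example.com",
    "slack": "ae@example.com",
}


def connector_for(tool_name: str) -> str:
    """Return the connector whose name prefixes the tool name, e.g. attio_search_records."""
    matches = [name for name in CONNECTOR_USERS if tool_name.startswith(name + "_")]
    if not matches:
        raise KeyError(f"No connector configured for tool {tool_name!r}")
    return max(matches, key=len)  # the longest prefix wins if names overlap


def tool(client: ToolClient, tool_name: str, **params: Any) -> Any:
    """Run one tool call through the shared client, acting as the mapped identity."""
    identifier = CONNECTOR_USERS[connector_for(tool_name)]
    return client.execute_tool(tool_name=tool_name, identifier=identifier, tool_input=params)
```

Because the identity lookup lives in one place, every call site in the pipeline stays a single line, for example tool(client, "attio_search_records", query="acme.com").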

The ensure_authorized() check runs once at startup to verify that every connector is in the ACTIVE state before the pipeline begins. If any connector hasn't been authorized yet, it generates a magic link on the spot so you can complete OAuth without touching the Scalekit dashboard directly.

On the first run, Scalekit prints a magic link for any connector not yet authorized. Click the link, complete OAuth once, and every subsequent run proceeds directly to ACTIVE without prompting again.
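The startup check can be sketched as a loop over the connector map. The two client methods, connection_status() and authorization_link(), are hypothetical placeholders for whatever the Scalekit SDK actually exposes to read a connected account's state and create a magic link.

```python
from typing import Protocol


class AuthClient(Protocol):
    """Hypothetical methods; map these onto the real Scalekit SDK calls."""

    def connection_status(self, connector: str, identifier: str) -> str: ...

    def authorization_link(self, connector: str, identifier: str) -> str: ...


def ensure_authorized(client: AuthClient, connector_users: dict[str, str]) -> list[str]:
    """Print a magic link for each connector that is not ACTIVE and return their names."""
    pending: list[str] = []
    for connector, identifier in connector_users.items():
        if client.connection_status(connector, identifier) == "ACTIVE":
            continue  # already authorized; nothing to do
        link = client.authorization_link(connector, identifier)
        print(f"[auth] {connector} is not authorized for {identifier}. Open: {link}")
        pending.append(connector)
    return pending
```

A caller would stop the run when the returned list is non-empty and rerun after completing OAuth, so the pipeline never starts with a missing connection.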

The Five-Step Call Prep Flow

Step 1: Checking Google Calendar for Upcoming External Meetings

The agent queries Google Calendar using googlecalendar_list_events with a 30-minute lookahead window:
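A minimal sketch of building the lookahead window. It produces the snake_case time_min and time_max values in RFC3339 form with a Z suffix, as required in "What to Check Before You Go Live". Any other googlecalendar_list_events parameters are left out because their names are not covered in this guide.

```python
from datetime import datetime, timedelta, timezone


def rfc3339_utc(moment: datetime) -> str:
    """Format a timezone-aware datetime as RFC3339 in UTC with a Z suffix."""
    return moment.astimezone(timezone.utc).strftime("%Y-%m-%dT%H:%M:%SZ")


def list_events_input(now: datetime, lookahead_minutes: int = 30) -> dict[str, str]:
    """Build the time window for an upcoming-events query (snake_case keys)."""
    return {
        "time_min": rfc3339_utc(now),
        "time_max": rfc3339_utc(now + timedelta(minutes=lookahead_minutes)),
    }
```

Converting to UTC before formatting means a local time such as 14:00+02:00 is still sent correctly as 12:00:00Z.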

The agent filters to meetings with external attendees by comparing email domains against the AE's domain and skips any event starting in fewer than 15 minutes:

Events that have already been briefed are tracked in _sent_event_ids. The same brief is never sent twice for the same meeting.

Step 2: Pulling Past Meeting Notes from Granola

For each qualifying meeting, the agent queries Granola by attendee email and by company name. Running both queries catches past meetings with the same contact that only one of the two search strings would match:

Transcripts are fetched for the two most recent meetings to give the LLM the richest possible view of the last conversation:
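A minimal sketch of merging the two query results and picking transcript targets. The meeting fields (id and an ISO 8601 date) are assumptions about what granolamcp_query_meetings returns. Adjust them to the real response.

```python
from typing import Any


def merge_meetings(*result_lists: list[dict[str, Any]]) -> list[dict[str, Any]]:
    """Combine the email and company-name results, dropping duplicate meeting IDs."""
    by_id: dict[str, dict[str, Any]] = {}
    for results in result_lists:
        for meeting in results:
            by_id.setdefault(meeting["id"], meeting)  # keep the first copy seen
    # ISO 8601 date strings in one format sort chronologically; newest first.
    return sorted(by_id.values(), key=lambda m: m.get("date", ""), reverse=True)


def transcript_targets(meetings: list[dict[str, Any]], limit: int = 2) -> list[str]:
    """IDs of the most recent meetings whose transcripts should be fetched."""
    return [m["id"] for m in meetings[:limit]]
```

Deduplicating by ID matters because a meeting found by both the email query and the company query would otherwise take up both transcript slots.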

If Granola returns no prior meetings, the brief still generates — the LLM treats it as a first call and suggests discovery questions instead.

Step 3: Looking Up the Deal in Attio

The agent searches Attio by company domain rather than filtering by person ID, which avoids Attio's complex nested filter syntax:

The parsed deal includes name, stage, value, and last activity date. If no deal is found, the LLM is informed and adjusts the brief accordingly.

Step 4: Synthesizing the Brief with an LLM

With Granola notes, transcripts, and Attio deal data assembled, the agent sends a structured prompt to OpenRouter:

Use openai/gpt-4o-mini as the default model. It is fast, costs under a cent per brief, and reliably follows the structured output format.

Step 5: Sending the Brief to Slack

The agent formats the brief and DMs it to the AE using slack_send_message. Pass a Slack user ID directly as the channel parameter to open a DM:

SLACK_DM_USER is the AE's Slack user ID (a U... string found under Profile > Copy member ID). The brief always goes directly to the AE, never to a channel or group.
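A minimal sketch of preparing the slack_send_message input. The channel parameter carrying the user ID comes from this guide. The text parameter name and the message layout are assumptions. The ID check follows the "starts with U" rule from the go-live checklist, which catches a channel name pasted in by mistake.

```python
import re
from typing import Any

# Slack member IDs as described in this guide: "U" followed by uppercase letters/digits.
SLACK_USER_ID = re.compile(r"U[A-Z0-9]+")


def slack_dm_input(user_id: str, meeting_title: str, meeting_start: str, brief: str) -> dict[str, Any]:
    """Build the slack_send_message input, using the AE's user ID as the channel."""
    if not SLACK_USER_ID.fullmatch(user_id):
        raise ValueError(f"Expected a Slack user ID starting with U, got {user_id!r}")
    text = f"*Call prep: {meeting_title}*\nStarts: {meeting_start}\n\n{brief}"
    return {"channel": user_id, "text": text}  # "text" is an assumed parameter name
```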

How to Run the Full Pipeline

With all connectors active and environment variables set, run the agent:

Once running, the agent prints a live status update for every step:

The full pipeline for one meeting, including the LLM call, runs in under 30 seconds.

What to Check Before You Go Live

Google Calendar: The time_min and time_max parameters must be in RFC3339 format with a Z suffix (e.g., 2026-04-07T12:00:00Z). All parameters must be in snake_case; using camelCase causes the filter to be silently ignored, which returns all calendar events, including birthdays and past events.

Granola: The connector name in your Scalekit dashboard must exactly match GRANOLA_CONNECTOR. If you created it as granolamcp, tool names must use that prefix: granolamcp_query_meetings and granolamcp_get_meeting_transcript.

Attio: Use attio_search_records to look up deals by company domain query. Avoid filtering attio_list_records by associated_people. The nested filter syntax is non-obvious, and a text query search is simpler and equally effective.

Slack: Make sure SLACK_DM_USER is your Slack user ID from the workspace your connector is connected to, not from a different workspace. User IDs start with U. If Scalekit holds multiple Slack connected accounts with the same identifier, delete the stale one with connect.delete_connected_account() before running.

Scheduling: For continuous operation, set POLLING_MODE=true or use a cron job to run every 15 minutes during working hours:
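A minimal sketch of the POLLING_MODE loop follows. The POLLING_MODE variable and the 15-minute interval come from this guide. The working hours (08:00 to 18:00 local time, Monday to Friday) are an assumption. process_cycle is passed in as a callable, so its real signature does not matter here. When POLLING_MODE is not "true", the function runs a single cycle, which suits a cron-triggered run.

```python
import os
import time
from datetime import datetime
from typing import Callable

POLL_SECONDS = 15 * 60


def in_working_hours(moment: datetime, start_hour: int = 8, end_hour: int = 18) -> bool:
    """True on weekdays between start_hour (inclusive) and end_hour (exclusive)."""
    return moment.weekday() < 5 and start_hour <= moment.hour < end_hour


def run(process_cycle: Callable[[], None]) -> None:
    """Poll every 15 minutes when POLLING_MODE=true; otherwise run one cycle and exit."""
    if os.environ.get("POLLING_MODE", "false").lower() != "true":
        process_cycle()  # single run, e.g. started by cron
        return
    while True:
        if in_working_hours(datetime.now()):
            process_cycle()
        time.sleep(POLL_SECONDS)
```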

Conclusion

Before this agent, an AE handling 15 external calls a week spent around 3–4 hours on pre-call prep, switching between Granola, Attio, and other sources to assemble context before each call. That prep was inconsistent, often missed action items from earlier conversations, and did not scale as call volume increased.

With the agent running, that prep cycle takes no AE effort. A brief arrives in Slack 15 minutes before each call with prior meeting context from Granola, deal stage and history from Attio, a suggested agenda, and targeted open questions. It takes about two minutes to read.

The same architecture extends naturally to other pre-call workflows. Account expansion calls can pull contract renewal data. QBRs can include product usage metrics. First calls can use Apollo enrichment instead of Granola history. Once the Scalekit connectors are in place and the execute_tool() pattern is established, adapting to a new data source means updating which tools are called and what the LLM prompt asks for — not rebuilding the auth and orchestration layer from scratch.

FAQ

Why use Scalekit instead of calling each API directly?

Each service has its own OAuth flow, token format, and refresh schedule. Managing them directly means building four separate auth systems before writing any pipeline logic. Scalekit collapses all of it into one execute_tool() call pattern shared by every service, so you have working auth in minutes rather than days.

Does Scalekit handle token refresh automatically?

Yes. It checks expiry on every execute_tool() call and refreshes using the stored refresh token if needed. No refresh logic in your code, no stale credentials mid-run, no token state to manage between polling cycles.

Can I run this for multiple AEs on the same team?

Yes. Each AE gets their own connected accounts in Scalekit, identified by their email. Add one entry per AE to CONNECTOR_USERS and loop process_cycle() over them. Scalekit manages each AE's tokens independently — no credential sharing, no token collision.
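One way to structure the per-AE loop is sketched below. AccountExec and connector_users_for are illustrative names. The process_cycle signature used here, taking an AE plus a connector map, is hypothetical. Each AE's cycle is wrapped separately so that one revoked token does not stop briefs for the rest of the team.

```python
from dataclasses import dataclass
from typing import Callable, Iterable

CONNECTORS = ("googlecalendar", "granolamcp", "attio", "slack")


@dataclass(frozen=True)
class AccountExec:
    email: str          # identifier for this AE's connected accounts
    slack_user_id: str  # where this AE's briefs are sent
    domain: str         # used to tell internal attendees from external ones


def connector_users_for(ae: AccountExec) -> dict[str, str]:
    """Every connector acts on behalf of the AE's own identity."""
    return {connector: ae.email for connector in CONNECTORS}


def run_team(
    team: Iterable[AccountExec],
    process_cycle: Callable[[AccountExec, dict[str, str]], None],
) -> list[str]:
    """Run one cycle per AE, isolating failures; return the emails that failed."""
    failed: list[str] = []
    for ae in team:
        try:
            process_cycle(ae, connector_users_for(ae))
        except Exception as exc:
            print(f"[{ae.email}] cycle failed: {exc}")
            failed.append(ae.email)
    return failed
```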

What if Granola or Attio is unavailable during a run?

The brief still generates with whatever data is available. Granola down means no prior context; the LLM switches to first-call mode. Attio down means no deal data. Only Google Calendar is a hard dependency; if unavailable, the agent exits with a clear error.
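This degradation policy can be sketched as a small wrapper. Optional sources fall back to an empty value, and the calendar fetch is left unwrapped so its errors stop the run. The fetch callables stand in for the pipeline's real Granola, Attio, and Calendar calls and are hypothetical.

```python
from typing import Any, Callable, TypeVar

T = TypeVar("T")


def optional_source(name: str, fetch: Callable[[], T], fallback: T) -> T:
    """Return fetch() or, if it raises, log a warning and return the fallback."""
    try:
        return fetch()
    except Exception as exc:
        print(f"[warn] {name} unavailable, continuing without it: {exc}")
        return fallback


def gather_context(
    fetch_events: Callable[[], Any],
    fetch_notes: Callable[[], list[Any]],
    fetch_deal: Callable[[], Any],
) -> tuple[Any, list[Any], Any]:
    """Calendar is a hard dependency; Granola and Attio degrade gracefully."""
    events = fetch_events()  # errors propagate and end the run
    notes = optional_source("Granola", fetch_notes, [])
    deal = optional_source("Attio", fetch_deal, None)
    return events, notes, deal
```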

How do I swap in a different CRM or notes tool?

The pipeline has three independent data slots: meeting notes, deal data, and attendee list. Replacing Granola means updating the Step 2 execute_tool() calls. Replacing Attio with Salesforce or HubSpot means updating Step 3. If your tool has a Scalekit connector, it's a field mapping change, not an auth rebuild.