Best Composio Alternatives for AI Agent Tool Calling (2026)

Composio’s pitch to agent builders: 1,000+ integrations, managed OAuth, and adapters for every major framework. The value proposition is speed: getting your agent to its first working tool call fast.

The harder question is what "working" means at scale for production-grade agents, specifically once your agent stops calling tools for you and starts calling them on behalf of your customers.

Why Agent Connectivity Is a Distinct Infrastructure Problem

Most developers reach this category when a framework integration stops being sufficient. It's worth naming what you're actually solving before picking a product.

- Authentication is only half the problem. Storing and refreshing OAuth tokens per user is table stakes. The harder question is authorization: whether this agent, calling this tool, for this specific tenant, is actually permitted to do so — enforced at the infrastructure layer, not inside your agent's prompt. These are different problems. Both need solving in production.

- The execution gap is real. LLMs output a tool call. Something has to resolve the credential, verify scope, handle the rate limit, retry with backoff, and return a structured result the model can reason about. That execution layer is where most production failures happen, and where frameworks like LangChain stop and infrastructure begins.

- Multi-tenancy is an architectural decision, not a feature. In a B2B product, each customer has their own Salesforce, their own Slack, their own credential lifecycle. Cross-tenant credential isolation isn't something you add later. If it's not in the foundation, you're building toward an incident.

- Tool schema quality directly affects agent behavior. An agent presented with a poorly defined tool — wrong field names, ambiguous descriptions, too many optional parameters — will call it incorrectly or not at all. The difference between a tool definition and a good tool definition is non-trivial, and it compounds across every tool call your agent makes.
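To make the execution layer concrete, here is a minimal Python sketch of what has to happen between the model emitting a tool call and the result coming back: a tenant-scoped credential lookup, a scope check, and retries with exponential backoff on rate limits, all returning a structured result the model can reason about. Every name here (`CredentialStore`, `RateLimited`, `execute_tool_call`) is hypothetical and not any vendor's API; a real system would also encrypt tokens at rest and refresh them.

```python
import random
import time
from dataclasses import dataclass, field
from typing import Any, Callable, Optional


class RateLimited(Exception):
    """Hypothetical error a provider client raises on HTTP 429."""


@dataclass
class ToolResult:
    """Structured result handed back to the model instead of a raw exception."""
    ok: bool
    data: Any = None
    error: Optional[str] = None
    attempts: int = 0


@dataclass
class CredentialStore:
    """Hypothetical in-memory store keyed by (tenant_id, connector).

    Lookups never fall back to another tenant's token.
    """
    tokens: dict[tuple[str, str], str] = field(default_factory=dict)

    def resolve(self, tenant_id: str, connector: str) -> Optional[str]:
        return self.tokens.get((tenant_id, connector))


def execute_tool_call(
    store: CredentialStore,
    allowed_scopes: dict[tuple[str, str], set[str]],
    tenant_id: str,
    connector: str,
    scope: str,
    call_api: Callable[[str], Any],
    max_attempts: int = 4,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> ToolResult:
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")

    # 1. Resolve the credential for this tenant only; the agent never sees it.
    token = store.resolve(tenant_id, connector)
    if token is None:
        return ToolResult(ok=False, error=f"no {connector} credential for tenant {tenant_id}")

    # 2. Verify the requested scope against this tenant's configuration.
    if scope not in allowed_scopes.get((tenant_id, connector), set()):
        return ToolResult(ok=False, error=f"scope {scope!r} not permitted for tenant {tenant_id}")

    # 3. Execute, retrying rate-limited calls with exponential backoff and full jitter.
    attempt = 0
    while True:
        attempt += 1
        try:
            return ToolResult(ok=True, data=call_api(token), attempts=attempt)
        except RateLimited:
            if attempt >= max_attempts:
                return ToolResult(ok=False, error="rate limited; retries exhausted", attempts=attempt)
            # Wait a random time up to base_delay * 2^(attempt - 1) seconds.
            sleep(random.uniform(0, base_delay * 2 ** (attempt - 1)))
```

Injecting `sleep` keeps the backoff testable. A missing credential and a scope denial both come back as structured errors the model can explain to the user, rather than as a crash.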

What Composio Gets Right

Composio earned its adoption, and it's worth being clear-eyed about why.

- Genuine catalog depth: 1,000+ integrations with working auth

- Fast time-to-first-tool-call: measurable in minutes, not days

- Framework adapters across LangChain, CrewAI, OpenAI Agents SDK, and AutoGen, with no glue code required

- Managed OAuth with token refresh handled

- SOC 2 Type II at the enterprise tier

For internal tooling, personal agents, early-stage prototypes: Composio is hard to beat on speed.

Where Composio Hits Its Ceiling

These are specific architectural constraints, not vague enterprise concerns.

- No per-tenant configuration. Every org, every user, gets identical tool behavior. There's no mechanism to configure different scopes, field mappings, or validation rules per customer. For single-tenant consumer apps, this is fine. For B2B products with heterogeneous customer environments, it's a design gap.

- Authentication without authorization. Composio handles authentication (AuthN): token storage and refresh. It doesn't enforce authorization (AuthZ) at the tool level, meaning whether a given agent is permitted to call a specific connector, with a specific scope, for a specific org. In production multi-tenant deployments, those are different problems.

- Closed tools with no extensibility. Pre-built tools are black boxes. You can't inspect, fork, or modify them. When a tool doesn't map to your agent's needs — wrong semantics, wrong granularity — you build around it. That friction compounds as integrations become a core product differentiator rather than plumbing.

- Observability stops at the surface. No full API request/response visibility. No custom log messages. No OpenTelemetry export. When tool calls fail in production, debugging requires guesswork. That's a real operational tax on any team running agents at scale.

- Tool calls only. No data sync for RAG, no webhook triggers, no batch operations. Teams that need both continuous context from external systems and action execution end up running a second platform alongside Composio.

The Alternatives

1. Scalekit

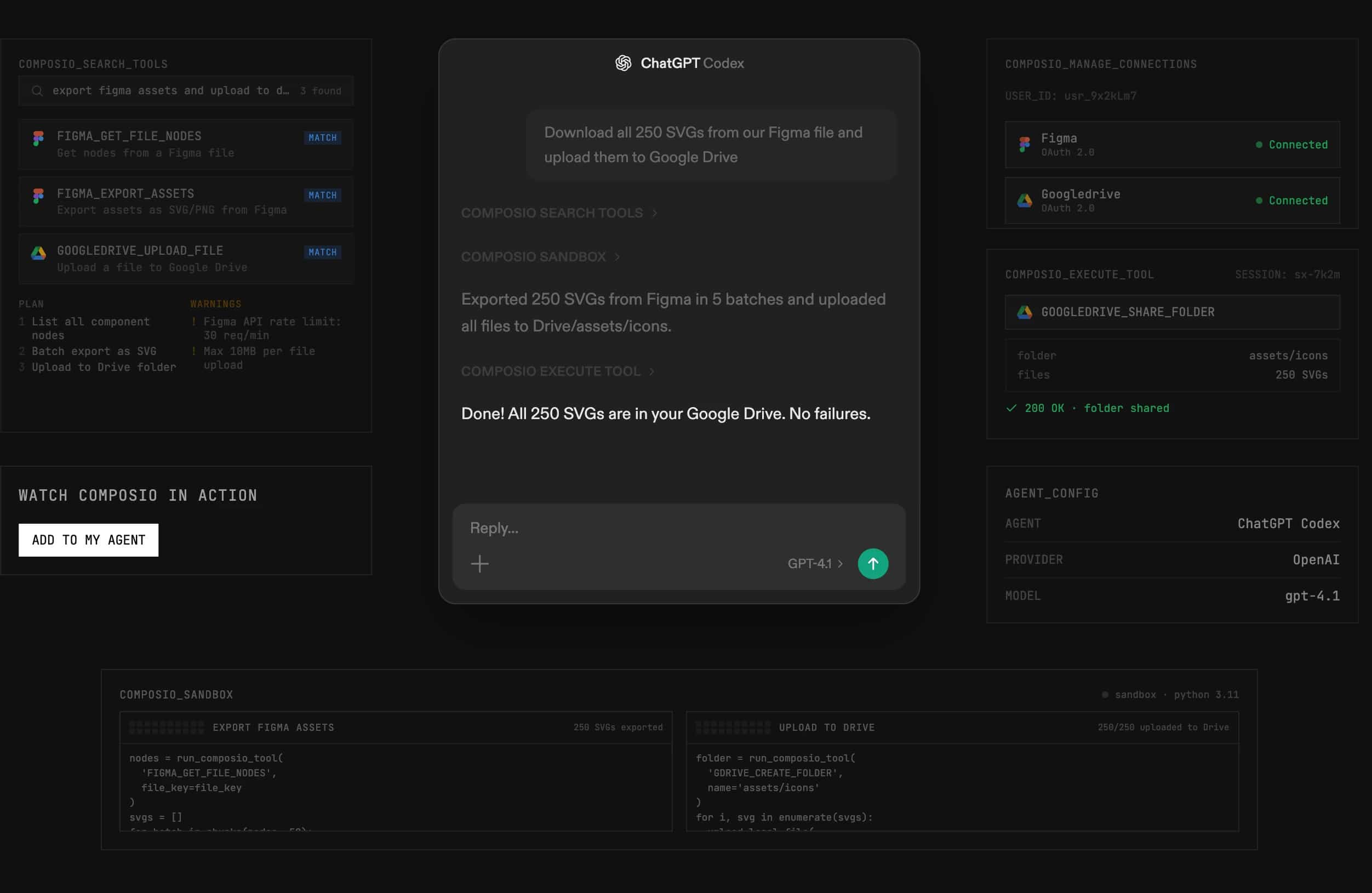

Scalekit was built around a premise the rest of this list is still catching up to: production agent reliability is fundamentally an authentication and authorization problem. Solving it properly means treating credential isolation and scope enforcement as the core architecture, not as capabilities layered on after the integration catalog is built.

What production-grade looks like in practice:

Every tool call runs through a fixed sequence before touching an external API:

- Credential resolved from an AES-256 encrypted per-tenant vault; no raw token ever enters agent code

- Scope verified against per-connector, per-org configuration; not just "is this user authenticated" but "is this agent allowed to call this specific operation for this org"

- API call executed with auto-refreshed tokens; retries and rate limiting handled before the response surfaces to the agent

- Full delegation chain written to an audit log; agent ID, connector, scope, tenant, result — SIEM-exportable by default

The authz layer is worth pausing on. Per-connector scope configuration means you define, per integration, what each org's agents are permitted to do. A connector can be read-only for one customer, read-write for another, and fully restricted for a third, enforced at the infrastructure layer regardless of what the agent requests. This is what makes the architecture defensible in enterprise security reviews, not just functional in demos.
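A minimal sketch of that idea, assuming a simple three-level model (restricted, read-only, read-write) keyed by org and connector, with default-deny for anything unconfigured and one JSON audit line per decision. The policy table, org names, and function names are hypothetical illustrations, not Scalekit's API.

```python
import json
import time
from enum import Enum


class Access(Enum):
    RESTRICTED = "restricted"
    READ_ONLY = "read_only"
    READ_WRITE = "read_write"


# Hypothetical per-org configuration for the same connector.
POLICY = {
    ("org_a", "salesforce"): Access.READ_ONLY,
    ("org_b", "salesforce"): Access.READ_WRITE,
    ("org_c", "salesforce"): Access.RESTRICTED,
}


def is_permitted(org: str, connector: str, operation: str) -> bool:
    """operation is 'read' or 'write'; unknown (org, connector) pairs are denied."""
    level = POLICY.get((org, connector), Access.RESTRICTED)
    if level is Access.RESTRICTED:
        return False
    if operation == "read":
        return True
    return operation == "write" and level is Access.READ_WRITE


def authorize_and_log(audit_log: list, agent_id: str, org: str, connector: str, operation: str) -> bool:
    # The agent's request cannot widen access; only the org's configuration can.
    allowed = is_permitted(org, connector, operation)
    audit_log.append(json.dumps({
        "ts": time.time(),
        "agent_id": agent_id,
        "tenant": org,
        "connector": connector,
        "operation": operation,
        "result": "allowed" if allowed else "denied",
    }))
    return allowed
```

Because each audit line is self-contained JSON, a log like this can be shipped to a SIEM without reshaping.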

Connector depth over breadth:

The catalog stands at roughly 300 connectors and is growing. The design principle is deliberate: each connector is built to cover the operations agents actually need to complete tasks in production, not the minimum viable API wrapper. Field coverage, tool schema quality, and error handling are all built to the standard a customer's engineering team would meet if they owned the integration themselves.

Beyond pre-built connectors, teams can bring custom tools, custom API endpoints, and custom MCP servers into the same auth and authz framework. The credential management and scope enforcement apply uniformly across pre-built and custom integrations alike.

Developer experience:

- Frameworks: Native adapters for LangChain, Google ADK, Anthropic, OpenAI, Vercel AI SDK, Mastra, Claude Managed Agents, OpenClaw — tool schemas returned in each framework's native format with no reshaping required

- MCP server: Expose any connected tool over MCP; per-user authenticated URLs generated by the platform

- Coding agent plugins: One-command install for Claude Code, GitHub Copilot CLI, Codex, and 40+ agents via the Skills CLI (npx skills add scalekit-inc/skills). The plugin gives your coding agent full API awareness — fewer hallucinations, faster implementation

- SDK: Python and Node.js, with full type coverage

Von — what this looks like in a real product:

Von is an AI revenue intelligence platform. Its agents act inside Salesforce, Gong, HubSpot, and Google Drive on behalf of individual sales team members: updating records after calls, surfacing deal signals, and preparing briefs before customer meetings.

The identity challenge: every tool call needed a valid, scoped token for that specific user. Across four systems. Kept current without human intervention. With no standing agent credentials. And with an audit trail that survives an enterprise procurement review.

"Von touches identity in four places: user auth, embedded SSO, token store for integrations, and an AI tool calling proxy. Having all of that managed by Scalekit behind the scenes is what let us ship fast without stitching together parallel systems." — Venu Madhav Kattagoni, Head of Engineering, Von

By early 2026 Von had added Zendesk, Snowflake, Gmail thread tooling, Gong Engage, Salesloft, and Outlook Calendar. Each new connector inherited the same auth and authz pattern without changing the identity layer. The team spent their engineering time on the revenue intelligence problem, not on token management.

One additional note for B2B SaaS builders: the team behind Scalekit's agent connectivity also builds enterprise identity infrastructure — SSO, SCIM, user and org management. Teams building the user-facing side of their product alongside agent features can run both on the same platform. Not a requirement, but it closes the gap between "who is this user in our product" and "what can their agent touch externally."

Best fit: Teams building production-grade agent products where per-tenant authz, reliable execution, and a defensible audit trail are requirements — not afterthoughts.

2. Nango

Nango's bet is code ownership over pre-built convenience. Tool definitions are TypeScript functions that live in your repo. You write them — or have Claude Code or Cursor write them — deploy to Nango's runtime, and Nango handles execution, auth, retries, and rate limiting.

Where it excels:

- Full control over tool semantics — the function does exactly what your agent needs

- 700+ APIs with managed auth, plus usage-based pricing that doesn't scale with customer count

- Observability that's actually useful: full API request/response, custom log messages, OpenTelemetry export

- Open source — inspectable, forkable, self-hostable for teams with data residency requirements

- CI/CD-native — integration functions version-controlled alongside your application code

The dev loop is code-first throughout. AI coding agents can build and iterate on Nango integration functions the same way they'd work with any TypeScript.

The honest trade-off: You're authoring integration logic, not consuming pre-built tools. Higher ceiling; higher floor.

Best fit: Teams where integrations are a core product feature, code ownership is a requirement, and observability needs are high.

3. Arcade

Arcade was founded by executives from Okta — people who spent years at the center of how enterprise authorization actually works. That DNA shows throughout the product: agent authorization is the primary design concern, not a credential storage question.

The model: agents act as users through proper OAuth delegation — not bot tokens, not service accounts with org-wide access. The agent calls a tool; Arcade verifies the user's delegation, checks their permission scope, and executes with exactly what that user authorized. Nothing more.
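The delegation rule in that paragraph can be sketched in a few lines: a call may use only scopes the user has actually granted, and anything beyond that grant is refused rather than silently escalated. `Delegation` and `scopes_for_call` are hypothetical names for illustration, not Arcade's SDK.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Delegation:
    """What one user explicitly authorized an agent to do on their behalf."""
    user_id: str
    granted_scopes: frozenset


def scopes_for_call(delegation: Optional[Delegation], requested: set) -> frozenset:
    # No delegation means no user to act as: refuse instead of using a bot token.
    if delegation is None:
        raise PermissionError("no user delegation; refusing to act")
    missing = set(requested) - delegation.granted_scopes
    if missing:
        raise PermissionError(f"user {delegation.user_id} has not granted: {sorted(missing)}")
    # Execute with exactly the requested subset of the user's grant, nothing broader.
    return frozenset(requested)
```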

What's well built:

- MCP-native runtime with production execution semantics

- Tool evaluation framework: CI/CD-style rubrics for testing tool calling behavior before deployment, a capability most of this category still lacks

- SDK for building custom tools and MCP servers on their runtime

- Clean developer experience; the local dev loop is fast

Current gaps:

- ~112 first-party integrations — meaningful catalog constraint for broad-coverage use cases

- Closed-source runtime engine creates vendor dependency at the execution layer

- Enterprise governance (org-level credential hierarchy, SIEM-exportable audit logs) is still developing

- Production evidence at large-scale multi-tenant enterprise deployments is limited

Best fit: MCP-native builds where the auth model is the primary design concern and the current catalog covers your integration requirements.

4. Merge Agent Handler

Merge has a decade of integration infrastructure behind it and launched Agent Handler in October 2025 as a purpose-built agent layer on top. The governance features are genuinely well-built: PII scanning on request/response, rule enforcement per tool pack, granular audit logs, DLP controls.

For teams already running Merge Unified API, the path to Agent Handler is the shortest migration in this list.

The structural reality: Agent Handler sits on top of a unified API designed for deterministic SaaS integration code. That architecture normalizes data across providers into common schemas — valuable for consistency at scale, but it strips API-specific semantics that agents need for reliable tool use. And per-tenant tool configuration isn't supported: every customer gets identical tool behavior.

Pricing: $65 per linked account after the first 10. In a multi-tenant product with a real customer count, that cost grows with every account your customers connect.
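The arithmetic is simple but worth running against your own numbers. This sketch assumes each connected customer account counts as one linked account and ignores any volume discounts; the price above doesn't state a billing period, so read the output as cost per billing period. The function name is hypothetical.

```python
def linked_account_cost(linked_accounts: int, included: int = 10, price: int = 65) -> int:
    """USD cost at $65 per linked account beyond the first 10 included accounts."""
    return price * max(0, linked_accounts - included)


print(linked_account_cost(10))       # 0
print(linked_account_cost(50))       # 2600
# 200 customers each connecting 3 systems, assuming each connection is billed separately.
print(linked_account_cost(200 * 3))  # 38350
```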

Best fit: Teams already on Merge Unified API adding agent capabilities. Less suited to greenfield builds where the normalization abstraction is a constraint.

5. Paragon (ActionKit)

Paragon rebuilt around AI in 2025. ActionKit is a single API returning tool definitions across 1,000+ integrations, with a white-labeled Connect Portal for end-user authorization that's as polished as anything in this list.

Where it works: ISVs where the user-facing integration connection experience is a product requirement and integration breadth matters more than authz depth.

The constraint: Pre-built tools, limited per-tenant customization. The tool definitions reflect an embedded iPaaS heritage — optimized for developer ergonomics rather than LLM tool-calling semantics.

Best fit: ISVs building AI features where the end-user connection UX is a product differentiator.

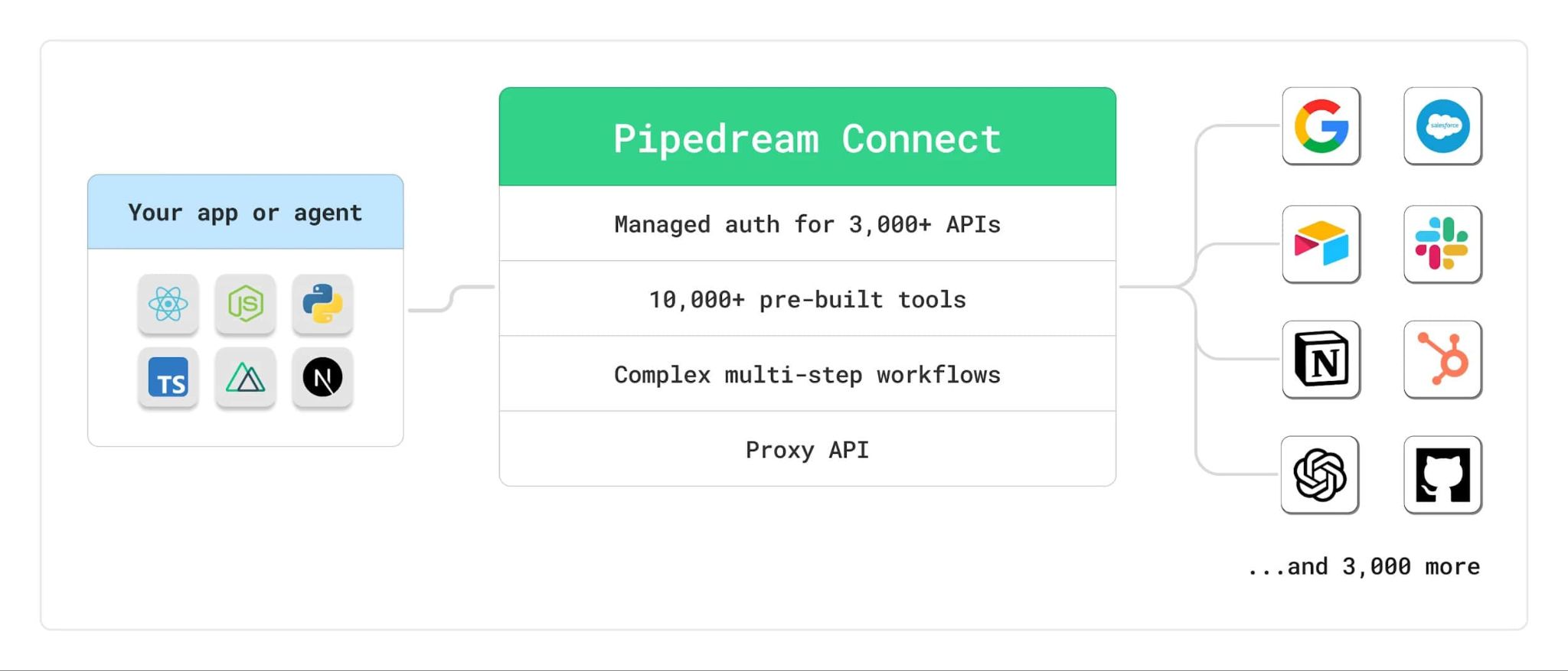

6. Pipedream

Acquired by Workday in November 2025. 3,000+ connectors, MCP server, per-invocation pricing that's developer-friendly at low-to-mid volumes. The breadth is real.

The strategic question is simple: Workday is integrating Pipedream into its enterprise AI platform. The roadmap for independent agent-builder use cases is uncertain. That's a meaningful factor in an infrastructure decision with multi-year implications.

Best fit: Short-horizon projects where catalog breadth is the binding constraint.

How to Choose

The Decision Point

Composio's ceiling has a specific shape. It appears when your agent moves from acting for one user to acting for many — when "does the agent have credentials" becomes "is this agent authorized to act for this org, in this scope, in a way I can prove."

That's not a Composio failure. It's a design scope question. The question to answer before choosing is: which hard problems will your product actually face in production? Choose the tool that was built around those problems, not the one that handles them as an afterthought.

Frequently Asked Questions

1. When should I actually consider switching from Composio to an alternative?

The signal is usually specific: your agents start acting on behalf of multiple customers and you need per-tenant credential isolation, or your security team asks for an audit trail of every tool call, or a customer's procurement review requires proof of scope enforcement. If you're hitting any of these, you've crossed the line from "integration tooling" into "agent infrastructure," and that's a different product category than what Composio was designed for.

2. Which Composio alternative is closest to a drop-in replacement if I just need more integrations or better reliability?

Paragon's ActionKit is the closest in philosophy: broad catalog, pre-built tools, fast time-to-first-call. Pipedream offers the largest raw connector count on this list. However, if reliability in a multi-tenant environment is the actual problem, neither is a true drop-in; you'd be trading one ceiling for another. Scalekit or Nango are better fits when the underlying issue is architectural rather than catalog-related.

3. Is Nango a good Composio alternative for teams that want open-source and full code ownership?

It's the strongest option on this list if full code ownership is what you need. Nango lets you write integration logic as TypeScript functions that live in your own repo, version-controlled and CI/CD-native. You get full API request/response observability, OpenTelemetry export, and the ability to self-host for data residency requirements, none of which Composio offers. The honest trade-off is that you're authoring integration logic rather than consuming pre-built tools, so the floor is higher, even though the ceiling is much higher too. Open source also carries its own costs: if you self-host, you take on deployment, upgrades, and uptime yourself.

4. How does Scalekit differ from Composio beyond just having more enterprise features?

It's an architectural difference, not a feature checklist difference. Composio is built around integration breadth and fast onboarding — the credential and execution layer is a means to that end. Scalekit is built around the credential and authorization layer as the core product, with integrations on top. That means per-tenant scope enforcement, an encrypted credential vault with no raw tokens in agent code, and a full delegation audit log are structural properties of every tool call, not add-ons. For B2B agent products, that distinction matters more than the connector count.

5. Can I start with Composio and migrate to a more production-grade solution later?

Technically yes, but migrations are costly. The credential management patterns, multi-tenancy architecture, and tool schema designs you build early become deeply embedded in your agent logic. If you anticipate B2B customers, per-tenant authorization requirements, or enterprise security reviews within 12 months, it's worth building on the right foundation from day one rather than retrofitting it later.

6. What's the difference between authentication and authorization in the context of agent tool calling, and why does it matter?

Authentication answers "does this agent have valid credentials for this service?" Authorization answers "is this agent permitted to perform this specific operation, for this specific customer, at this point in time?" Most platforms solve the first problem well. The second — enforcing scopes per connector, per tenant, at the infrastructure layer — is where production incidents actually happen. If your agents act on behalf of multiple customers, you need both solved, not just one.

7. How does tool schema quality affect my agent's reliability in production?

Significantly. An LLM decides whether and how to call a tool based entirely on its schema: the field names, descriptions, and parameter definitions. Vague descriptions lead to incorrect calls; overly broad schemas lead to hallucinated parameters; missing fields cause silent failures. This compounds at scale: poor schema quality across 20 integrations means 20 sources of unpredictable agent behavior that are hard to debug and harder to fix without owning the tool definition itself.
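To illustrate, here is a vague tool definition next to a specific one, in the JSON Schema style most tool-calling APIs use, plus a deliberately minimal checker. The tool names, fields, and stage values are hypothetical, and the checker covers only `required`, `additionalProperties: false`, and `enum`; a production system would use a full JSON Schema validator.

```python
# Vague: the model has to guess what "data" should contain.
VAGUE_TOOL = {
    "name": "update",
    "description": "Updates stuff.",
    "parameters": {"type": "object", "properties": {"data": {"type": "object"}}},
}

# Specific: explicit names, descriptions, allowed values, and a closed parameter set.
GOOD_TOOL = {
    "name": "update_opportunity_stage",
    "description": "Move a CRM opportunity to a new pipeline stage. Use only after the user confirms.",
    "parameters": {
        "type": "object",
        "properties": {
            "opportunity_id": {"type": "string", "description": "ID of the opportunity, e.g. 'opp_123'."},
            "stage": {"type": "string", "enum": ["Prospecting", "Negotiation", "Closed Won", "Closed Lost"]},
        },
        "required": ["opportunity_id", "stage"],
        "additionalProperties": False,
    },
}


def validate_call(tool: dict, args: dict) -> list:
    """Return human-readable problems with a model-generated call (empty list if none)."""
    schema = tool["parameters"]
    props = schema.get("properties", {})
    errors = []
    for name in schema.get("required", []):
        if name not in args:
            errors.append(f"missing required field: {name}")
    if schema.get("additionalProperties") is False:
        for name in args:
            if name not in props:
                errors.append(f"unknown field (possibly hallucinated): {name}")
    for name, value in args.items():
        allowed = props.get(name, {}).get("enum")
        if allowed is not None and value not in allowed:
            errors.append(f"{name}={value!r} not in {allowed}")
    return errors
```

Run against the vague definition, the same checker finds nothing to reject, which is the point: a schema that promises nothing can't catch a bad call.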

8. Is MCP (Model Context Protocol) support a must-have when evaluating these platforms?

It depends on your stack. If you're building MCP-native agents or need to expose tools to coding agents like Claude Code or GitHub Copilot, native MCP support matters a lot because it eliminates a translation layer and keeps tool schemas clean. If you're building within a specific framework like LangChain or the OpenAI Agents SDK, native framework adapters may matter more. The key question is whether the platform returns tool schemas in your framework's native format or forces you to reshape them yourself.
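For a sense of what "reshaping" means, here is one neutral tool definition converted into the OpenAI Chat Completions `tools` format and the Anthropic Messages API `tools` format. The two differ mainly in nesting and in the key that holds the JSON Schema (`parameters` versus `input_schema`). The helper names and the example tool are hypothetical; check each provider's current docs before relying on the exact shape.

```python
from typing import Any

# A provider-neutral tool definition (hypothetical example).
TOOL: dict[str, Any] = {
    "name": "search_tickets",
    "description": "Search support tickets by keyword and return the ten most recent matches.",
    "parameters": {
        "type": "object",
        "properties": {"query": {"type": "string", "description": "Keyword to search for."}},
        "required": ["query"],
    },
}


def to_openai_chat(tool: dict[str, Any]) -> dict[str, Any]:
    # Chat Completions nests the definition under "function".
    return {
        "type": "function",
        "function": {
            "name": tool["name"],
            "description": tool["description"],
            "parameters": tool["parameters"],
        },
    }


def to_anthropic(tool: dict[str, Any]) -> dict[str, Any]:
    # The Messages API is flat and calls the schema "input_schema".
    return {
        "name": tool["name"],
        "description": tool["description"],
        "input_schema": tool["parameters"],
    }
```

Multiply this by every tool and every framework you support, and the value of schemas returned in native format becomes clear.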