OAuth vs API Keys for AI Agents: Why Static Credentials Break in Production Systems

TL;DR

- API keys identify the calling application but do not model user delegation or consent. OAuth is explicitly designed to let a resource owner grant limited access to a client without sharing credentials.

- OAuth issues access tokens with explicit scopes and an expiration (exp) time, limiting privilege and exposure windows. Static API keys often remain valid until manually rotated, increasing the risk if they are leaked.

- OAuth defines token revocation and lifecycle management, enabling per-user or per-grant invalidation without breaking other tenants. API keys typically require global rotation.

- OAuth scopes constrain what actions a client can perform (e.g., contacts.read vs contacts.delete). Least-privilege access is a core security principle for modern systems.

- When AI agents act on behalf of users, systems must attribute actions to identities and enforce policy boundaries. OAuth access tokens can carry identity claims (for example, as JWT claims), which supports verifiable authorization decisions per request.

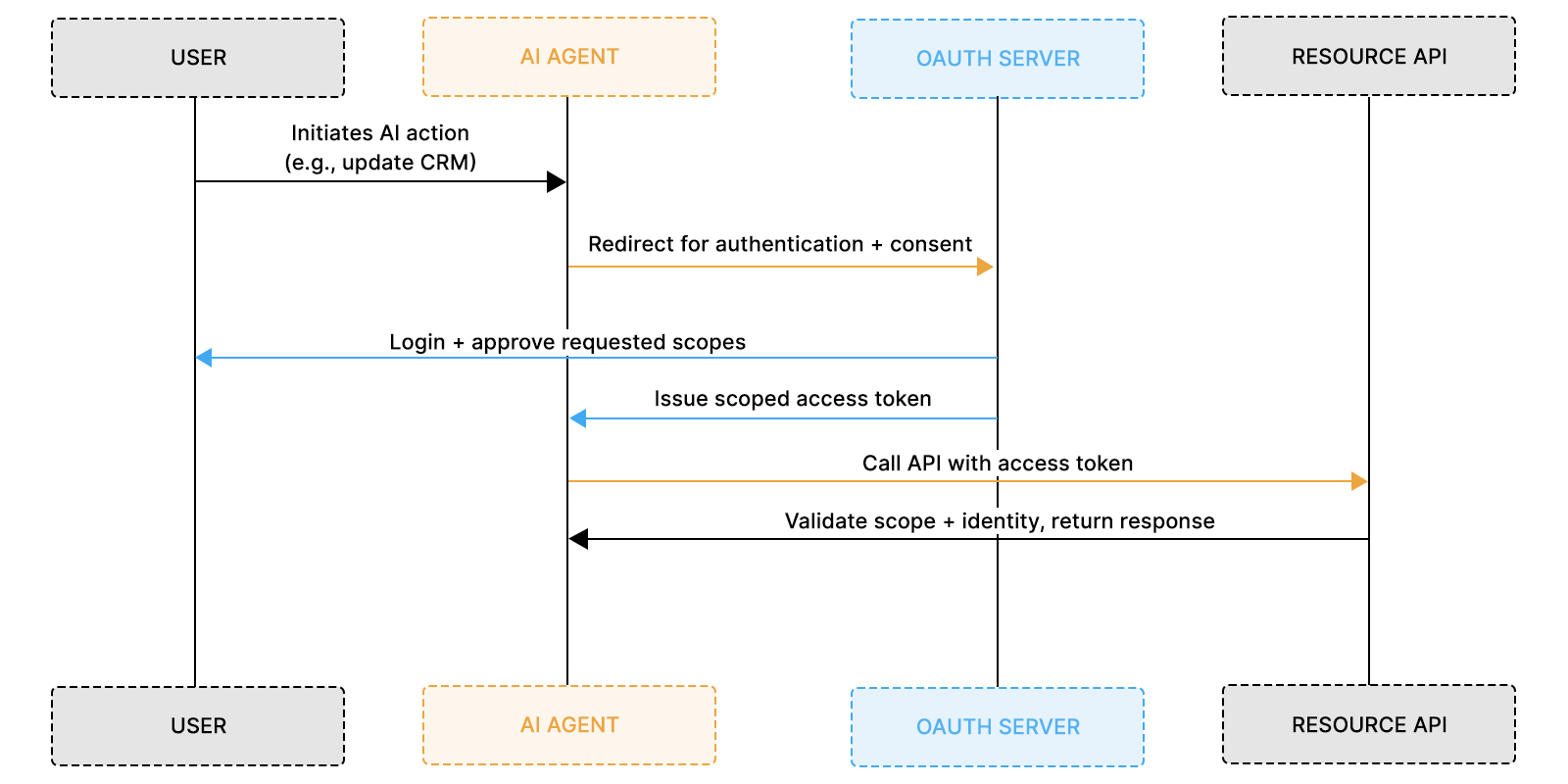

Production Systems Require Delegated Authorization

AI agents are moving from assistants to autonomous operators. They update CRM records, adjust billing entries, sync ticket metadata, and trigger downstream automation across SaaS systems.

Early integrations often rely on API keys because they are simple. A typical implementation looks like this:
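A minimal sketch of this pattern, assuming a hypothetical CRM endpoint (`https://crm.example.com`) and a hypothetical environment variable named `CRM_API_KEY`. The request is built but not sent, to show what the API would receive:

```python
import json
import os
import urllib.request

# Hypothetical values for illustration; not a real provider API.
CRM_BASE_URL = "https://crm.example.com/v1"
API_KEY = os.environ.get("CRM_API_KEY", "placeholder-key")


def build_update_request(contact_id: str, fields: dict) -> urllib.request.Request:
    """Build a contact update authenticated only by the shared static key."""
    return urllib.request.Request(
        url=f"{CRM_BASE_URL}/contacts/{contact_id}",
        data=json.dumps(fields).encode("utf-8"),
        method="PATCH",
        headers={
            "Authorization": f"Bearer {API_KEY}",
            "Content-Type": "application/json",
        },
    )


# Two different end users trigger the agent; the credential is identical.
req_for_alice = build_update_request("c_1", {"phone": "555-0100"})
req_for_bob = build_update_request("c_2", {"phone": "555-0101"})
same_credential = (
    req_for_alice.get_header("Authorization")
    == req_for_bob.get_header("Authorization")
)
print(same_credential)  # True
```

Nothing in either request tells the API which user asked for the change.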

This model authenticates the application, not the user. The AI agent uses a single static key stored in environment variables. Every request appears identical to the API.

In staging, this works. In enterprise environments, it fails.

Enterprise security reviews require per-user attribution and revocation controls. Reviewers ask:

- Which user authorized this action?

- Can access be revoked for one employee?

- How are actions audited per tenant?

API keys cannot answer these questions. They authenticate the service as a whole.

Delegation becomes mandatory at production scale. When AI agents act on behalf of users, the system must bind each request to identity, consent, and permission boundaries. This requirement separates demo architectures from production-ready systems.

API Keys Simplify Authentication but Eliminate Delegation

API keys prove that a calling application is trusted. They do not express who the application represents. In single-tenant or internal systems, this limitation may be acceptable. Trust boundaries are shared, and identity is often implicit. In multi-tenant SaaS systems, identity boundaries are isolated. An AI agent may operate across thousands of tenants, each with distinct users and permission models.

A static API key collapses these boundaries. Every request appears identical to the resource server. The API cannot distinguish:

- Which tenant initiated the request

- Which user authorized the action

- Which permissions apply

The core limitation is structural, not accidental. API keys lack a delegated authorization model. AI agents represent users. That representation must be verifiable, scoped, and revocable. API keys cannot encode those properties.

Recommended Reading: API authentication in B2B SaaS: Methods and best practices

Delegated Authorization Becomes Mandatory for AI Systems

Delegation formalizes who the AI agent represents. Every delegated interaction involves three actors:

- The user (resource owner)

- The AI agent (client)

- The resource server (API)

When an AI agent updates a CRM record, the system must evaluate:

- Which user authorized the action

- Which tenant policy applies

- Which permissions are allowed

OAuth encodes this relationship into the access token. A scoped token binds identity, consent, and permissions into every request.

Example token claims:
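As a hedged illustration, a decoded access token payload might look like the dictionary below. The claim names `iss`, `sub`, `aud`, `exp`, `iat`, and `jti` are standard JWT claims; `tenant_id` is a custom claim, and every value here is made up:

```python
import time

now = int(time.time())

# Illustrative claim set; all values are hypothetical.
claims = {
    "iss": "https://auth.example.com",      # who issued the token
    "sub": "user_123",                      # the user the agent acts for
    "aud": "https://crm.example.com",       # the API the token is meant for
    "tenant_id": "tenant_acme",             # custom claim: tenant boundary
    "scope": "contacts.read tickets.update",  # space-delimited permissions
    "iat": now,
    "exp": now + 900,                       # 15-minute lifetime (assumed)
    "jti": "tok_7f3a",                      # unique token identifier
}


def describe_delegation(claims: dict) -> str:
    """Summarize who the agent is acting for and what it may do."""
    scopes = ", ".join(claims["scope"].split())
    return f"{claims['sub']}@{claims['tenant_id']} may: {scopes}"


print(describe_delegation(claims))
# user_123@tenant_acme may: contacts.read, tickets.update
```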

The resource server validates identity and scope before execution. Delegation is not an enhancement over API keys. It is a prerequisite for AI systems that modify user-owned data in multi-tenant environments.

Recommended Reading: OAuth for AI Agents: Production Architecture and Practical Implementation Guide

API Keys Provide No Scoped Consent Controls

Scoped consent limits what an AI agent is allowed to do inside a customer’s environment. In production SaaS systems, permissions are rarely binary. A user may have read access to contacts but not permission to modify billing data. An AI agent acting on behalf of that user must inherit those same boundaries.

API keys do not support scoped consent. A key typically grants full API access associated with the issuing account. Once the agent holds the key, it can read, write, update, or delete resources depending on what that key allows. There is no built-in mechanism to restrict the agent to contacts.read while preventing contacts.delete. The authorization surface becomes all-or-nothing.

OAuth introduces explicit scopes into each access token. For example, a token may carry scopes such as:
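A minimal sketch of how a resource server might check scopes, assuming a hypothetical route-to-scope table and one scope name (`contacts.write`) that does not appear elsewhere in this article:

```python
def has_scope(token_scope: str, required: str) -> bool:
    """Return True if the space-delimited scope string contains `required`."""
    return required in token_scope.split()


# Hypothetical mapping from (HTTP method, route) to the scope it requires.
REQUIRED_SCOPE = {
    ("GET", "/contacts"): "contacts.read",
    ("PATCH", "/contacts"): "contacts.write",
    ("DELETE", "/contacts"): "contacts.delete",
}


def authorize(token_scope: str, method: str, route: str) -> int:
    """Return an HTTP status: 200 if allowed, 403 if the scope is missing."""
    required = REQUIRED_SCOPE.get((method, route))
    if required is None or not has_scope(token_scope, required):
        return 403  # deny by default, including unmapped routes
    return 200


granted = "contacts.read contacts.write"
print(authorize(granted, "GET", "/contacts"))     # 200
print(authorize(granted, "DELETE", "/contacts"))  # 403
```

Splitting the scope string before comparing avoids substring matches, so a token with `contacts.readonly` would not satisfy `contacts.read`.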

The resource server evaluates each request against these scopes. If the agent attempts an action outside its granted permissions, the request fails. Scoped tokens reduce blast radius and align the agent’s authority with the user’s policy constraints.

API Keys vs OAuth Scoping Model

Scoped consent is not just a compliance feature. It directly constrains what an AI system can mutate in production. When AI agents transition from read-only assistants to autonomous actors, scope enforcement becomes foundational.

API Keys Cannot Support Safe and Granular Revocation

Revocation defines how quickly access can be contained when something changes. In production SaaS systems, users leave organizations, roles change, credentials are exposed, and integrations must be disabled without disrupting other tenants. An authorization model must support targeted revocation.

API keys do not offer granular revocation. If an AI agent uses a single static key to access a CRM or billing API, revoking that key disables the integration for all users and tenants that depend on it. There is no mechanism to revoke access for a single employee or a single tenant without rotating the entire credential. This creates operational risk and discourages timely revocation.

OAuth introduces revocable, short-lived tokens tied to user identity and consent. If a user leaves an organization, that user's grant and refresh token can be revoked without affecting other users, and any access token already issued stops working once it expires or is rejected at validation. If a tenant disables the integration, the authorization grant can be revoked independently. Token lifetimes, refresh token rotation, and consent withdrawal provide containment boundaries that API keys cannot enforce.
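The difference can be sketched with an in-memory grant table. `GrantStore` and its methods are hypothetical names standing in for whatever an authorization server persists; this is an illustration of targeted revocation, not a production design:

```python
from dataclasses import dataclass, field


@dataclass
class GrantStore:
    """In-memory stand-in for an authorization server's grant table."""

    # Maps (tenant_id, user_id) -> granted scopes.
    grants: dict = field(default_factory=dict)

    def grant(self, tenant_id: str, user_id: str, scopes: set) -> None:
        self.grants[(tenant_id, user_id)] = set(scopes)

    def revoke_user(self, tenant_id: str, user_id: str) -> None:
        """Offboard one employee; other grants are untouched."""
        self.grants.pop((tenant_id, user_id), None)

    def revoke_tenant(self, tenant_id: str) -> None:
        """Disable the integration for a single tenant."""
        self.grants = {k: v for k, v in self.grants.items() if k[0] != tenant_id}

    def is_active(self, tenant_id: str, user_id: str) -> bool:
        return (tenant_id, user_id) in self.grants


store = GrantStore()
store.grant("acme", "alice", {"contacts.read"})
store.grant("acme", "bob", {"contacts.read"})
store.grant("globex", "carol", {"tickets.update"})

store.revoke_user("acme", "alice")
print(store.is_active("acme", "alice"), store.is_active("acme", "bob"))
# False True

store.revoke_tenant("globex")
print(store.is_active("globex", "carol"), store.is_active("acme", "bob"))
# False True
```

With a single shared API key, the only equivalent operation is rotating the key, which would cut off all three users at once.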

Revocation Behavior Comparison

Revocation is not only about security hygiene. It determines how resilient an AI system is under real-world operational changes. When AI agents operate across multiple tenants and user roles, the ability to revoke narrowly and predictably becomes part of the system’s reliability model.

API Keys Provide No Auditability for Autonomous AI Actions

Auditability answers a fundamental question: who performed an action, and under which authority? In enterprise environments, every state-changing operation must be traceable to a specific identity and permission boundary. When AI agents begin modifying records, triggering workflows, or updating customer data, this traceability becomes a non-negotiable requirement.

API keys do not encode user identity or delegation context. Logs typically capture only the credential used to authenticate the request, such as a key identifier alongside the endpoint and timestamp.

This confirms that the application performed an action, but it does not clarify which user initiated the workflow, which tenant context applied, or which permission scope authorized the change. The attribution layer is missing.

OAuth access tokens embed structured identity claims that preserve this context. Common claims include:

- sub (user identifier)

- tenant_id

- scope

- iss (issuer)

- jti (token identifier)

Structured logging can then record user identity, tenant, agent name, scope, and timestamp for every request. This creates a verifiable chain of authorization. Autonomous AI systems require this level of attribution to support compliance, incident response, and governance. Without a delegated identity embedded in each request, accountability breaks down.
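A minimal sketch of such a log record, assuming the token has already been validated and its claims decoded. The field names, the agent name `crm-sync-agent`, and the claim values are hypothetical:

```python
import json
from datetime import datetime, timezone


def audit_record(claims: dict, agent: str, action: str, resource: str) -> str:
    """Build one JSON log line attributing an action to a delegated identity."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "agent": agent,
        "action": action,
        "resource": resource,
        "user": claims["sub"],
        "tenant": claims["tenant_id"],
        "scope": claims["scope"],
        "issuer": claims["iss"],
        "token_id": claims["jti"],
    }
    return json.dumps(record, sort_keys=True)


# Claims as they might appear after token validation (values are made up).
validated_claims = {
    "sub": "user_123",
    "tenant_id": "tenant_acme",
    "scope": "contacts.read contacts.write",
    "iss": "https://auth.example.com",
    "jti": "tok_7f3a",
}

line = audit_record(
    validated_claims,
    agent="crm-sync-agent",
    action="contacts.update",
    resource="contacts/c_1",
)
print(line)
```

Recording `jti` alongside the user and tenant lets an incident responder tie a specific change back to the exact token that authorized it.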

Production Failure Patterns When AI Agents Rely on API Keys

API key architectures tend to break under enterprise constraints. What works in staging environments begins to fail once AI agents operate across users, tenants, and real business workflows. In production systems, three recurring failure patterns emerge.

1. Over-Privileged Automation

A single API key often receives broad or full account access because it is easier to configure. Over time, small experiments expand into production responsibilities. Read-only integrations evolve into write operations, record updates begin affecting multiple tenants, and automated workflow triggers multiply.

Because the key is application-scoped rather than user-scoped, privileges are implicitly expanded. There is no mechanism to constrain authority per user or per action. The AI agent gradually accumulates more power than originally intended, without explicit authorization boundaries.

2. Enterprise Security Review Blockers

Enterprise customers evaluate authorization models rigorously. Security reviews require clear answers to questions about per-user attribution, revocation controls, and scope enforcement. They expect to see how identity is preserved across every state-changing action.

API key-based systems cannot demonstrate delegated authority. Every action appears to originate from a shared credential rather than a specific user or tenant. Teams are forced to retrofit identity controls late in the sales cycle or delay enterprise deals while re-architecting their authentication model. In this context, identity becomes a revenue blocker.

3. Blast Radius Amplification

When an API key is leaked or misused, exposure typically spans the entire integration surface. All tenants who rely on that key are affected, and every permission attached to it becomes accessible. There is no built-in containment boundary.

OAuth limits this blast radius through scoped, short-lived tokens and per-user grants. If a token is compromised, exposure is constrained by its scope and expiration window. What would be an isolated token incident under OAuth becomes a systemic risk under an API key model.

These patterns are not edge cases. They are structural outcomes of using static, application-wide credentials in systems where AI agents act on behalf of users.

OAuth Flows That Map Cleanly to AI Agent Architectures

OAuth supports multiple delegation patterns that align with how AI agents operate in production systems. The correct flow depends on whether the agent acts on behalf of a user, operates as a backend service, or propagates identity across internal services. Choosing the wrong flow recreates API key limitations under a different protocol, so the architectural intent must be explicit.

The Authorization Code Flow is the primary model for user-facing AI agents. In this flow, the user explicitly authenticates and grants consent before the agent receives a scoped access token. That token binds user identity, tenant context, and permission scopes into every API request. This preserves delegation and ensures the resource server evaluates each action against policy.
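The first leg of this flow can be sketched as building the authorization URL the user is redirected to. The endpoint, client ID, and redirect URI below are hypothetical, and the sketch assumes the authorization server supports PKCE with the S256 method (RFC 7636):

```python
import base64
import hashlib
import secrets
from typing import List, Tuple
from urllib.parse import urlencode

# Hypothetical endpoint and client registration values.
AUTHORIZE_ENDPOINT = "https://auth.example.com/oauth/authorize"
CLIENT_ID = "agent-client-id"
REDIRECT_URI = "https://agent.example.com/callback"


def make_pkce_pair() -> Tuple[str, str]:
    """Return (code_verifier, code_challenge) for the S256 method."""
    verifier = secrets.token_urlsafe(64)
    digest = hashlib.sha256(verifier.encode("ascii")).digest()
    challenge = base64.urlsafe_b64encode(digest).rstrip(b"=").decode("ascii")
    return verifier, challenge


def build_authorization_url(scopes: List[str]) -> Tuple[str, str, str]:
    """Return (url, state, code_verifier) for one authorization attempt."""
    verifier, challenge = make_pkce_pair()
    state = secrets.token_urlsafe(16)  # echoed back to guard against CSRF
    params = {
        "response_type": "code",
        "client_id": CLIENT_ID,
        "redirect_uri": REDIRECT_URI,
        "scope": " ".join(scopes),
        "state": state,
        "code_challenge": challenge,
        "code_challenge_method": "S256",
    }
    return f"{AUTHORIZE_ENDPOINT}?{urlencode(params)}", state, verifier


url, state, verifier = build_authorization_url(["contacts.read", "tickets.update"])
print(url)
```

The agent would keep `state` and `verifier` server-side, then present the verifier when exchanging the returned authorization code for a token.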

The Client Credentials Flow applies when no user is present. Background jobs, internal automation agents, or system-level orchestration components may authenticate as service identities. This flow still provides short-lived tokens and revocation control, but it does not represent user authority. For AI agents that mutate user-owned data, delegated flows are required.

The Authorization Code Flow enforces three structural properties that API keys cannot provide. First, consent is explicit and user-bound. Second, scopes restrict the agent to only the permissions granted. Third, every request carries identity claims that the resource server can validate independently.

In more complex architectures, the Token Exchange (On-Behalf-Of) Flow extends this delegation across services. An orchestration layer can exchange a user-scoped token for another token usable by downstream APIs while preserving the original authority chain. This becomes important when AI agents coordinate multiple microservices without breaking identity continuity.
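A minimal sketch of the request body for such an exchange, assuming the authorization server implements OAuth 2.0 Token Exchange (RFC 8693). The parameter names and URN values come from that specification; the token value and audience are placeholders:

```python
from urllib.parse import urlencode

# Identifiers defined by RFC 8693.
TOKEN_EXCHANGE_GRANT = "urn:ietf:params:oauth:grant-type:token-exchange"
ACCESS_TOKEN_TYPE = "urn:ietf:params:oauth:token-type:access_token"


def build_token_exchange_body(subject_token: str, audience: str, scope: str) -> str:
    """Form-encode a token exchange request for a downstream service."""
    return urlencode(
        {
            "grant_type": TOKEN_EXCHANGE_GRANT,
            "subject_token": subject_token,          # the user's original token
            "subject_token_type": ACCESS_TOKEN_TYPE,
            "requested_token_type": ACCESS_TOKEN_TYPE,
            "audience": audience,                    # the downstream API
            "scope": scope,                          # ideally narrower than the original
        }
    )


body = build_token_exchange_body(
    subject_token="user-access-token-placeholder",
    audience="https://billing.example.com",
    scope="billing.view",
)
print(body)
```

The orchestration layer would POST this body to the token endpoint with its own client authentication; requesting a narrower scope than the original token keeps the downstream call at least privilege.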

OAuth flows are not interchangeable configuration options. They encode authority boundaries. When an AI agent acts on behalf of users within multi-tenant SaaS systems, delegated flows are mandatory. When it acts as infrastructure, service-level identity may suffice. The architecture must reflect which role the agent is playing.

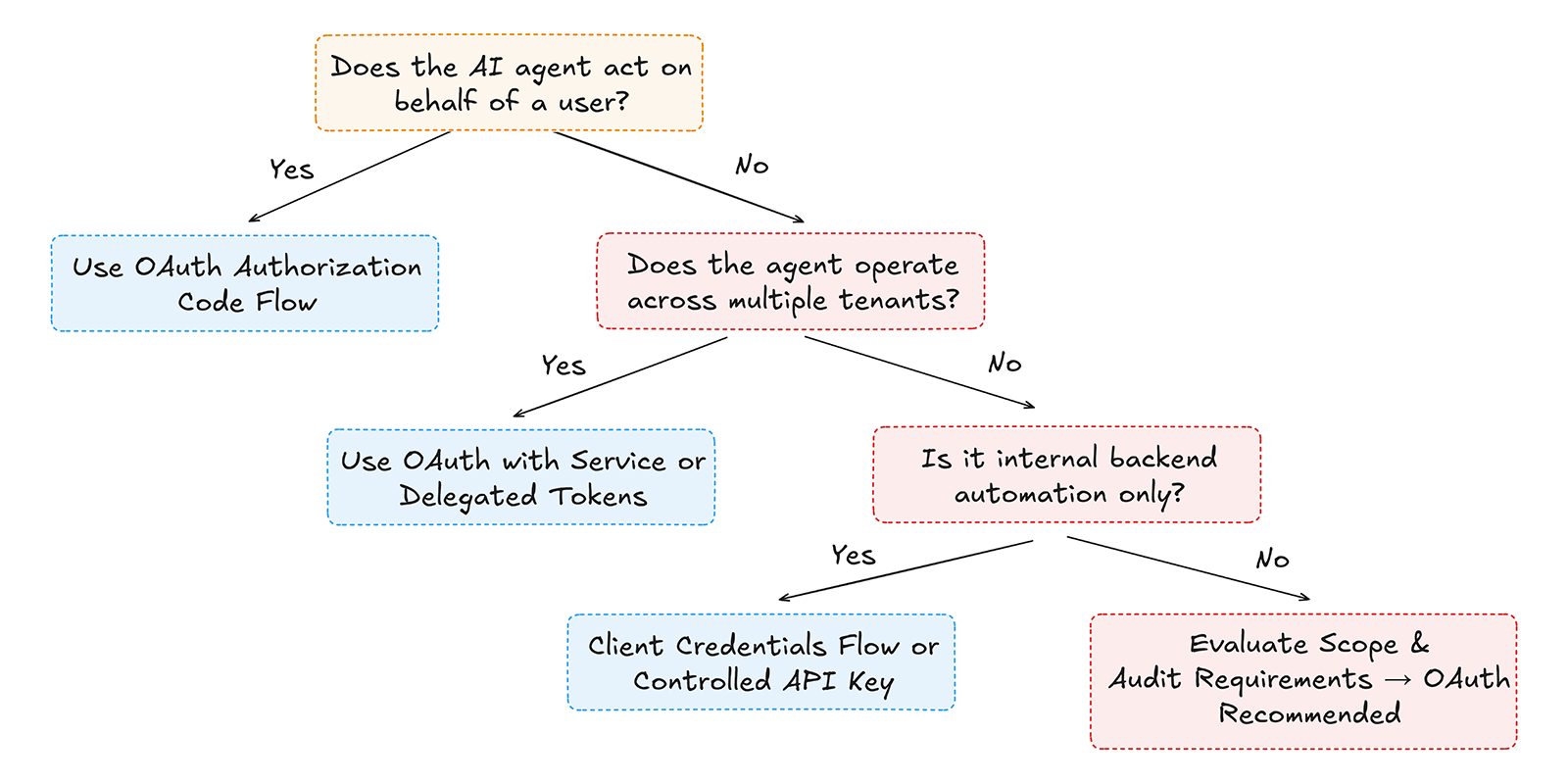

A Decision Framework for Choosing OAuth vs API Keys in AI Systems

The authentication strategy should follow authority boundaries. The key question is not which protocol is simpler, but who the AI agent represents when it performs an action. Every production AI system eventually needs to answer whether the agent acts as infrastructure or as a delegated user.

If the agent mutates customer-visible data, operates inside multi-tenant SaaS environments, or must pass enterprise security reviews, delegation is required. If the agent operates purely as internal backend automation with no user-level context, service-level authentication may be sufficient. The distinction is architectural, not operational.

The decision can be evaluated using a simple branching model.
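One way to express that branching is a small function over the criteria named above. The `AgentProfile` type and the returned labels are hypothetical, and the rule is a sketch of this article's guidance rather than a formal policy:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentProfile:
    acts_for_user: bool
    mutates_customer_data: bool
    multi_tenant: bool
    needs_enterprise_review: bool


def choose_auth(profile: AgentProfile) -> str:
    """Return the authorization model an agent with this profile needs."""
    needs_delegation = (
        profile.acts_for_user
        or profile.mutates_customer_data
        or profile.multi_tenant
        or profile.needs_enterprise_review
    )
    if needs_delegation:
        return "delegated_oauth"  # e.g. Authorization Code flow
    return "service_level_auth"   # e.g. Client Credentials or an API key


support_agent = AgentProfile(True, True, True, True)
nightly_job = AgentProfile(False, False, False, False)
print(choose_auth(support_agent))  # delegated_oauth
print(choose_auth(nightly_job))    # service_level_auth
```

Any single criterion is enough to require delegation; service-level credentials remain only when none applies.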

API keys are not inherently insecure. They are incomplete for delegated systems. When AI agents begin representing users rather than infrastructure, the authentication model must evolve accordingly.

As AI capabilities expand, more systems fall into the delegated category. That shift is why the discussion of OAuth vs. API keys for AI agents is no longer theoretical; it is architectural.

Migrating from API Keys to OAuth in Production AI Systems

Migration from API keys to OAuth begins with modeling authority boundaries. The first step is to identify where the AI agent acts on behalf of a user and where it operates as infrastructure. Every delegated interaction must be explicitly mapped to a user identity, tenant boundary, and permission scope.

The second step is introducing scoped tokens without disrupting existing integrations. This typically involves adding an OAuth authorization layer, defining granular scopes such as contacts.read or tickets.write, and updating API calls to validate access tokens instead of static keys. Tokens should be short-lived, refreshable, and auditable. Logging should capture user identity and scope claims for each state-changing action.

The final step is implementing revocation and lifecycle controls. User offboarding should invalidate their grants without affecting other tenants. Tenant-level consent withdrawal should cleanly disable integrations. Token rotation policies should reduce long-term credential exposure. Migration is not merely about replacing credentials; it is about upgrading the AI system's identity model.

Practical Migration Checklist

- Identify user-delegated actions performed by the AI agent

- Define fine-grained permission scopes

- Introduce the OAuth Authorization Code flow for user-facing actions

- Replace static API keys with short-lived access tokens

- Add audit logging with user and tenant claims

- Implement token revocation and refresh rotation

Migration can be phased. Systems often begin with hybrid models, where internal automation continues to use service credentials while user-facing actions move to delegated OAuth flows. Over time, as AI autonomy increases, delegated identity becomes the dominant pattern.
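A hybrid phase might be sketched as a small routing function that decides which credential each action runs under. The action names and the two sets below are hypothetical and would come from the inventory built in the first migration step:

```python
from typing import Optional

# Hypothetical inventory of agent actions, classified during migration.
DELEGATED_ACTIONS = {"contacts.update", "tickets.comment"}
SERVICE_ACTIONS = {"nightly.reindex", "metrics.export"}


def credential_for(action: str, user_token: Optional[str], service_token: str) -> str:
    """Pick the credential an action must run under during a phased migration."""
    if action in DELEGATED_ACTIONS:
        if user_token is None:
            # Never fall back to the service credential for user-owned data.
            raise PermissionError(f"{action} requires a user-delegated token")
        return user_token
    if action in SERVICE_ACTIONS:
        return service_token
    raise ValueError(f"unclassified action: {action}")


print(credential_for("nightly.reindex", None, "svc-token"))          # svc-token
print(credential_for("contacts.update", "user-token", "svc-token"))  # user-token
```

Refusing to fall back to the service credential is the design choice that matters here: a silent fallback would quietly recreate the shared-key model for delegated actions.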

A detailed technical walkthrough of this transition is covered in our migration deep dive.

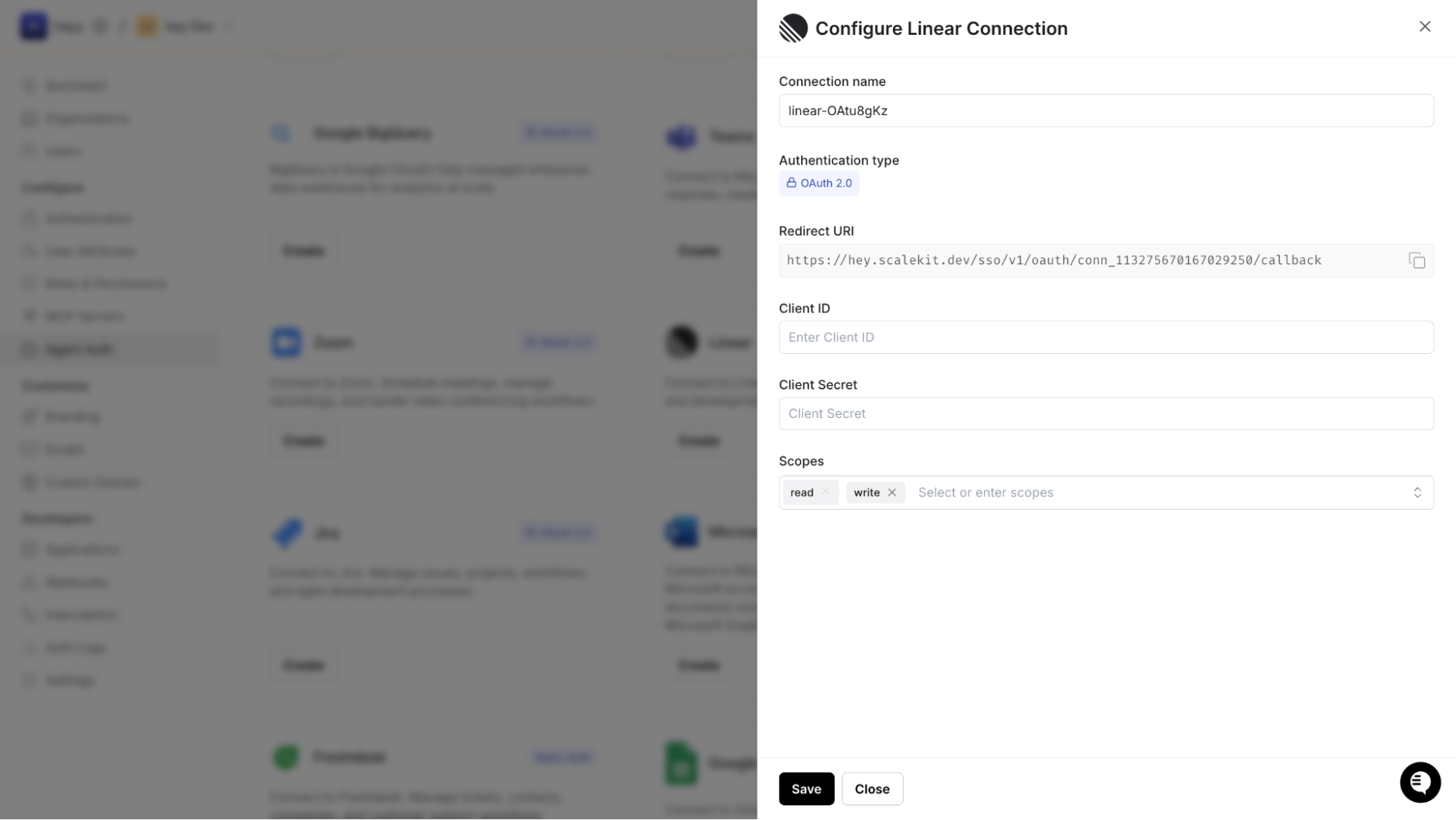

Implementing Delegated OAuth for AI Agents with Scalekit

Scalekit operationalizes delegated OAuth for AI agents by centralizing scope modeling, token lifecycle management, and grant storage. Instead of distributing static API keys across services, you configure an OAuth application inside Scalekit that represents your AI agent and defines its authority boundaries.

When registering the agent application, you configure:

- Redirect URIs

- Allowed grant types

- Allowed scopes

- Token lifetimes

- Tenant isolation rules

These settings define the maximum authority the AI agent can request. Unlike API keys, which provide blanket access, this configuration establishes enforceable runtime boundaries.

Step 1: Model Agent Capabilities Using Scopes

Scopes in Scalekit represent real operational capabilities. If your AI agent reads contacts and updates tickets, the scopes might include:

- contacts.read

- tickets.update

- tickets.comment

- billing.view

These scopes are attached to the application and cannot be exceeded at the time of authorization. The AI agent may request only the scopes defined in its configuration, preventing privilege escalation as capabilities evolve.
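The ceiling behavior can be illustrated generically. The sketch below is not Scalekit's API; it only shows the set comparison an authorization server could perform, with a hypothetical function name and `tickets.delete` as an example of a scope outside the configured list:

```python
# Scopes configured for the application (taken from the list above).
APPLICATION_SCOPES = frozenset(
    {"contacts.read", "tickets.update", "tickets.comment", "billing.view"}
)


def validate_requested_scopes(requested: set) -> set:
    """Reject any request that exceeds the application's configured scopes."""
    excess = set(requested) - APPLICATION_SCOPES
    if excess:
        raise ValueError(f"scopes not allowed for this application: {sorted(excess)}")
    return set(requested)


print(sorted(validate_requested_scopes({"contacts.read", "tickets.update"})))
# ['contacts.read', 'tickets.update']

try:
    validate_requested_scopes({"contacts.read", "tickets.delete"})
except ValueError as err:
    print(err)
# scopes not allowed for this application: ['tickets.delete']
```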

This directly addresses the limitation on scoped consent discussed earlier. The authorization surface is explicit and constrained.

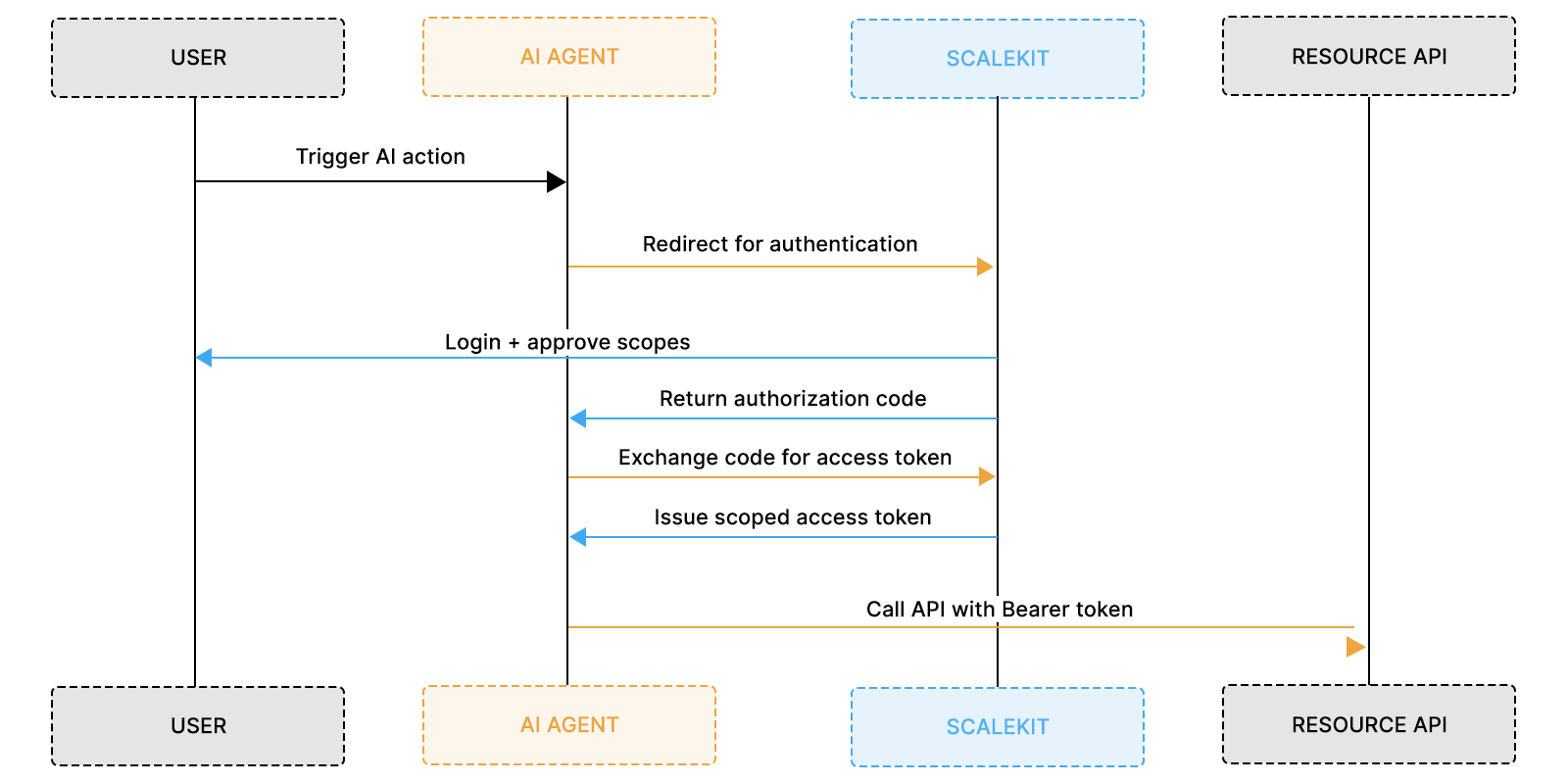

Step 2: Execute Authorization Code Flow for User Delegation

When a user triggers an AI action, the agent initiates the OAuth Authorization Code flow via Scalekit.

The issued token contains:

- sub (user identifier)

- tenant_id

- scope

- exp

- Signature for verification

This token binds identity, tenant context, and approved capabilities into a single verifiable artifact. Every request made under this token represents delegated authority, not application-wide access.

At this stage, delegation is formally established.

Step 3: Scope Enforcement and Execution Model

When using Scalekit-managed integrations, scope enforcement happens before the provider API call is executed. The AI agent invokes an integration action through Scalekit, and Scalekit validates:

- The connection (grant) is active

- The requested action is permitted by scope

- The token is valid and unexpired

Only after these checks pass does Scalekit call the external provider API. If you are protecting your own APIs, you can additionally validate token claims at your resource server. However, for Scalekit-hosted integrations, scope enforcement is already embedded in the execution layer.
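The three checks can be pictured as a gate in front of the provider call. This is a generic illustration with hypothetical names (`Connection`, `check_before_execution`), not Scalekit's implementation:

```python
import time
from dataclasses import dataclass
from typing import Optional


@dataclass
class Connection:
    active: bool              # has the grant been revoked?
    scopes: frozenset         # scopes the user approved
    token_expires_at: float   # Unix timestamp


def check_before_execution(
    conn: Connection, required_scope: str, now: Optional[float] = None
) -> Optional[str]:
    """Return None if the action may run, otherwise the reason it is blocked."""
    now = time.time() if now is None else now
    if not conn.active:
        return "connection_revoked"
    if required_scope not in conn.scopes:
        return "scope_not_granted"
    if conn.token_expires_at <= now:
        return "token_expired"
    return None


conn = Connection(active=True, scopes=frozenset({"tickets.update"}), token_expires_at=2000.0)
print(check_before_execution(conn, "tickets.update", now=1000.0))  # None
print(check_before_execution(conn, "billing.view", now=1000.0))    # scope_not_granted
```

Returning a reason rather than a bare boolean gives the audit log something specific to record when an action is blocked.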

Step 4: Revocation and Tenant Isolation at the Grant Level

Revocation in Scalekit operates at the grant level rather than the credential level. When a user withdraws consent or leaves an organization, the corresponding connection is invalidated without affecting other users or tenants.

Each delegated grant is tied to:

- User identity

- Tenant

- Application

- Approved scopes

Because tokens are short-lived and refresh tokens are centrally managed, exposure windows are limited. Tenant isolation is preserved through explicit identity claims rather than implicit trust boundaries.

This directly resolves the revocation and blast-radius failures associated with API keys.

Where Scalekit Fits in the Authorization Architecture

Scalekit acts as the Authorization Server and integration execution layer. The AI agent functions as an OAuth client. External SaaS APIs or your internal services function as resource servers.

Responsibilities are cleanly separated:

- Scalekit manages OAuth flows, grant storage, and token lifecycle

- The AI agent requests delegated authority

- Resource APIs enforce scope or rely on Scalekit-managed execution

This separation ensures that the identity policy remains centralized while the AI logic remains independent.

Why This Model Scales as AI Systems Evolve

AI agents rarely stay static; they begin with a narrow capability, such as reading support tickets, and gradually expand to updating records, triggering workflows, and orchestrating across multiple SaaS systems. As capabilities expand, the identity surface expands with them.

In an API key model, adding capability typically means expanding the privileges attached to a shared credential. The trust boundary widens implicitly. The same key now authorizes more actions across more systems, increasing blast radius and operational risk.

With Scalekit, expansion happens through explicit scope modeling and connection-level delegation. When an AI agent gains a new capability, you define a new scope, enable the corresponding integration action, and require user authorization for that additional access. Existing grants remain constrained. Authority is granted through policy configuration within Scalekit rather than through broader shared credentials.

This approach allows AI systems to scale integration depth and surface area without increasing systemic risk. Capabilities evolve. Trust boundaries remain explicit. Delegation remains revocable and tenant-isolated.

Conclusion

In early-stage AI integrations, API keys feel convenient because they eliminate complexity. But convenience does not scale. When AI agents act on behalf of users across tenant boundaries, static credentials become structural liabilities. They cannot express intent, limit permissions, revoke access selectively, or support traceable audit trails. These limitations directly impact enterprise readiness and governance.

OAuth solves these problems by introducing delegated authorization, in which user identity, consent, and permissions are explicit and verifiable. When applied to AI agents, OAuth models authority in a way that matches real security and compliance needs.

Scalekit operationalizes this model by centralizing scope boundaries, managing token lifecycles, and enforcing runtime delegation. By mapping real domain actions to scopes, persisting per-user grants, and isolating authority at the tenant level, Scalekit ensures that AI agents can safely scale from simple automations to deeply embedded business logic.

For production systems that depend on autonomous AI agents, delegated authorization is not optional; it is foundational. OAuth is not merely a protocol upgrade over API keys; it is an architectural necessity.

FAQs

What is the core difference between OAuth and API keys for AI agents?

The core difference is delegation. API keys authenticate an application, while OAuth authorizes actions on behalf of a user with scoped and revocable permissions. AI agents frequently act on behalf of users in multi-tenant SaaS environments, making delegated authorization essential for production systems.

Why do API keys work in demos but fail in production AI systems?

API keys simplify integration by removing consent flows and token exchange. In demos, this is acceptable because identity boundaries are often flat. In production, AI agents must enforce per-user permissions, scoped access, revocation controls, and audit trails, none of which API keys provide.

What are the main API key security risks for AI applications?

The main API key security risks include:

- No scoped permissions

- No per-user delegation

- No granular revocation

- High blast radius if leaked

- Limited auditability

As AI agents gain autonomy and integration depth, these risks increase significantly.

How does OAuth enable scoped consent for AI SaaS?

OAuth issues access tokens containing explicit scopes such as contacts.read or tickets.update. APIs validate these scopes before executing requests. This ensures that AI agents operate within explicitly authorized capability boundaries rather than with unrestricted access.

When should AI systems use Authorization Code flow instead of Client Credentials?

Authorization Code flow should be used when the AI agent acts on behalf of a user. It preserves identity, consent, and scope delegation. Client Credentials flow is suitable only for backend automation where no user context exists.

How does OAuth improve auditability for AI agent actions?

OAuth tokens include identity claims such as user ID, tenant ID, and scope. Each request can be logged with structured attribution. This enables enterprises to trace actions back to specific users and approved permissions, supporting compliance and incident response requirements.

Can API keys ever be appropriate for AI integrations?

API keys may be appropriate for single-tenant internal automation where user delegation is not required. However, for multi-tenant AI SaaS systems that modify customer data, OAuth is the recommended architecture.

How does OAuth reduce blast radius compared to API keys?

OAuth tokens are scoped, short-lived, and revocable per user or tenant. If compromised, their impact is limited by scope and expiration. API keys typically provide broad, long-lived access, increasing systemic exposure if leaked.

How does Scalekit help implement OAuth for AI agents?

Scalekit centralizes delegated authorization by:

- Defining application-level scopes

- Persisting per-user connections (grants)

- Managing short-lived token lifecycles

- Enforcing scope validation before integration execution

- Supporting tenant-isolated revocation

This allows AI agents to operate without holding static provider API keys.

What happens when an AI agent gains new capabilities over time?

With API keys, expanding capability often means expanding shared access privileges. With OAuth and Scalekit, new capabilities are modeled as additional scopes requiring explicit authorization. Authority expands through policy configuration rather than widening trust boundaries.