How to Build a Deal Intelligence Agent with Gong, Attio, and Slack

TL;DR

- Deals slip not because the data is missing, but because nobody consistently connects Gong and Attio into a daily brief; this agent closes that gap automatically every morning.

- It pulls yesterday's Gong calls, analyzes each transcript for sentiment, objections, and competitor mentions, then cross-references the results against Attio for deal stage, value, and close date.

- A weighted risk formula scores every deal from 0.0 to 1.0 and posts a prioritized brief to Slack before your first standup, with no manual effort after the initial setup.

- The full code is on GitHub. Clone the repo, configure your connectors, and have it running in under 30 minutes.

Sales teams don't lose deals because the data isn't there; they lose them because by the time someone connects the data, it's already too late to act. Gong has the full call recording for every conversation, and Attio has the complete deal history, including stage, value, and close date. However, performing a root cause analysis across both datasets requires correlating call transcripts with CRM records manually to identify where sentiment shifted, when engagement dropped, or which objection went unaddressed. That analysis takes 40 to 45 minutes of manual effort every morning, and because it's never prioritized consistently, the signals that could have saved the deal go unnoticed until they surface on the forecast call.

This tutorial walks you through building an agent that does it automatically: pulls yesterday's Gong calls, analyzes transcripts for risk signals, cross-references Attio for deal context, and posts a prioritized brief to Slack before your next standup.

What Is a Deal Intelligence Agent and Why Does Every Sales Team Need One?

A deal intelligence agent is an automated system that monitors your sales pipeline for risk signals, pulling data from call recordings, cross-referencing your CRM, and surfacing the deals most likely to slip before anyone has to ask. Instead of a sales leader manually reviewing Gong recordings and Attio records every morning, the agent does it automatically and delivers a prioritized brief to Slack before the first standup.

The reason every sales team needs one comes down to a single visibility problem: the signals that predict whether a deal will close or slip are already in your tools; they just never make it into one place in time to act on them. Gong has every call recorded and transcribed. Attio tracks every deal stage, close date, and activity log. But correlating those two sources to identify which deals are trending toward loss means 40 to 45 minutes of manual work every morning that consistently gets deprioritized, and by the time a slipping deal gets noticed, the window to save it has already closed.

The result is always the same: negative sentiment builds across three consecutive calls with no flag raised; a competitor gets mentioned twice in a week and nobody notices; and a close date creeps up with zero CRM activity until it finally surfaces on the forecast call, at which point it's already too late to act. That's the gap this agent closes, and here's exactly how it works.

How This Deal Intelligence Agent Works: A Deterministic Pipeline

Although this is called an agent, it's worth being precise: it's not an autonomous reasoning loop that decides what to do next. It's a deterministic, sequential pipeline triggered by a scheduler that runs the same fixed steps every morning and exits cleanly:

Auth check: verifies all three connectors are active before touching any data. If a connector has been revoked or expired, it generates a magic link on the spot rather than failing silently mid-run.

Call fetch: pulls yesterday's calls from Gong using full ISO 8601 datetime parameters. If no calls exist for that window, the agent exits cleanly without proceeding.

Transcript analysis: fetches the full transcript for each call and extracts sentiment, engagement level, competitor mentions, and objections. If a transcript isn't ready yet (Gong takes 10–15 minutes to process after a call ends), the call is skipped without blocking the rest of the queue.

CRM cross-reference: matches each call to an Attio deal using the prospect's email first, then the company name prefix as a fallback. If no deal is found, the call still appears in the report under "Unknown Deal" metadata.

Risk scoring and Slack post: a weighted formula combines sentiment, days to close, engagement, and objections into a 0.0–1.0 score. Deals are ranked, and the top results are posted to Slack as a structured brief.

No persistent state between runs, no replanning, no surprises. When something goes wrong, you know exactly which step failed, and when it succeeds, you know exactly what data produced the output, which matters a lot for a tool that a sales team relies on every morning.

What Does the Sales Team Get Once This Agent Is Deployed?

By the time a sales leader opens Slack in the morning, the review work is already done: no manual correlation, no calls skipped because someone was busy, and no surprises surfacing for the first time on the forecast call.

Every morning's brief gives the team:

- Ranked risk visibility: deals sorted by risk score with sentiment, engagement, competitor mentions, and objections all in one place

- CRM context alongside call signals: deal stage, value, and close date from Attio, sitting next to the transcript analysis from Gong

- Actual next steps: pulled from what was discussed on the call, not inferred after the fact

- Consistent analysis: the same logic applied to every call every day, regardless of who had time to review that morning

The most at-risk deals are at the top, and everything a sales leader needs to walk into a standup prepared is already waiting in Slack. Here's how the agent coordinates across all three systems to make that happen.

With that architecture clear, let's configure the connectors and get the pipeline running.

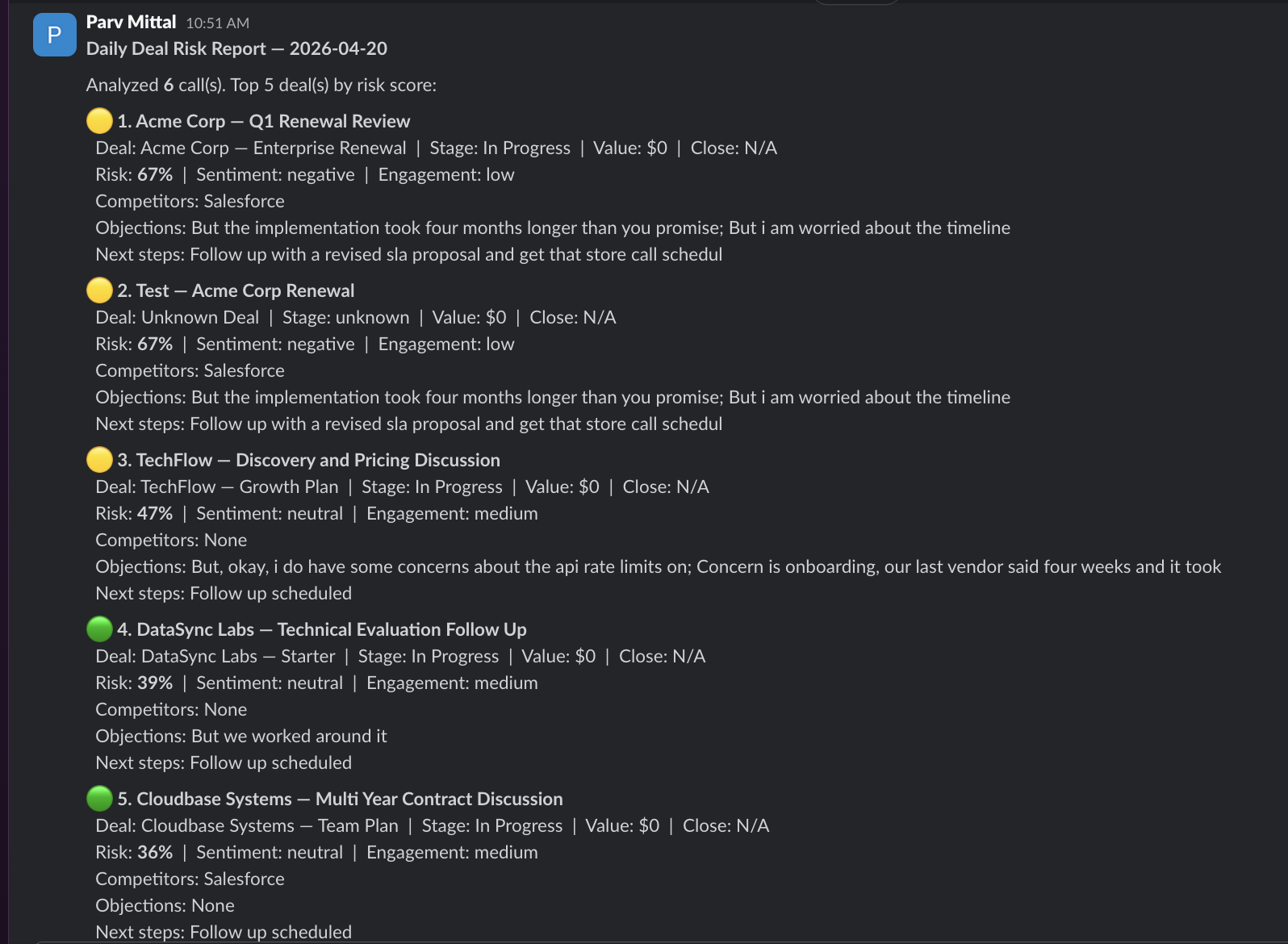

What Does Your Team Actually Receive in Slack Every Morning?

The output of each morning's run is delivered as a structured Slack message in the configured channel, which looks like this.

This message is designed so that a sales leader can immediately understand which deals need attention, without opening Gong or Attio. Each section is generated from real data in the pipeline:

- The risk tier is calculated using a weighted formula that combines sentiment, days to close, engagement, and objections.

- Deal metadata, including name, stage, value, and close date, is pulled from Attio.

- Sentiment and engagement are extracted from the actual call transcript.

- Competitors and objections are detected from the transcript content.

- Next steps are surfaced from what was actually discussed on the call.

The same information is available in the terminal output during each run, so you can verify what was analyzed before it reaches the channel. This output layer is what makes the agent immediately useful. It bridges the call recording system and the CRM into a single morning brief that fits how sales teams already operate.

What Are the Prerequisites Before You Deploy This Agent?

- A Scalekit account (free tier works) with a new environment created for this project

- A Gong workspace with call recording enabled; at least one user must have the Telephony calls imported setting turned on under Company Settings → Users → Data Capture

- An Attio workspace with deals and contacts that follow the naming convention covered in the matching section below

- A Slack workspace where you're a member of the channel you want reports posted to

- An OpenRouter API key (optional; the agent falls back to rule-based analysis automatically if none is set)

- Python 3.11 or newer installed locally

Clone the repo and install dependencies. The entire agent lives in a single file called run_flow.py:

Then create a .env file in the project directory:

One thing to get right before moving on: GONG_CONNECTOR and SLACK_CONNECTOR must match the connector names in your Scalekit dashboard exactly, including any suffix added during setup. The connector name is passed as connection_name on every execute_tool() call; a mismatch either routes to the wrong connection or returns a not-found error.
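Because a mistyped connector name only shows up later as a routing or not-found error, it can help to validate the configuration before any connector is touched. The following is a minimal sketch, not the repo's code: load_config is a hypothetical helper, the variable names are the ones this tutorial mentions, and it assumes the .env values have already been loaded into the process environment (for example with python-dotenv). The actual repo may expect additional variables, such as per-connector user identifiers.

```python
import os
from typing import Mapping

# Variable names taken from this tutorial; OPENROUTER_API_KEY is optional.
REQUIRED_VARS = (
    "SCALEKIT_ENV_URL",
    "SCALEKIT_CLIENT_ID",
    "SCALEKIT_CLIENT_SECRET",
    "GONG_CONNECTOR",
    "SLACK_CONNECTOR",
    "SLACK_DM_USER",
)


def load_config(env: Mapping[str, str] = os.environ) -> dict[str, str]:
    """Return the settings the pipeline needs, or raise listing what is missing."""
    missing = [name for name in REQUIRED_VARS if not env.get(name, "").strip()]
    if missing:
        raise RuntimeError("Missing .env values: " + ", ".join(missing))
    config = {name: env[name].strip() for name in REQUIRED_VARS}
    # An empty key means the agent should use the rule-based analyzer.
    config["OPENROUTER_API_KEY"] = env.get("OPENROUTER_API_KEY", "").strip()
    return config
```

Failing fast here keeps a configuration typo from being mistaken for a Gong or Slack outage halfway through a run.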

That's everything you need: clone, configure the .env, and the pipeline is ready to run. The next step is connecting the three services through Scalekit so the agent can actually talk to them.

Why Auth Is the Hardest Part of Multi-Service Automation

Before any pipeline logic runs, all three services need to be authenticated and ready. In a typical setup, that means three separate implementations, each with its own quirks:

- Gong: workspace-level OAuth with tokens that expire and must be refreshed, with a base URL and token format specific to Gong's implementation

- Attio: supports both API keys and OAuth, depending on the operation; connector-based access via Scalekit uses OAuth, which requires its own authorization flow

- Slack: bot token OAuth with scope-based permissions that must be configured in the Slack App dashboard before the flow runs; missing scopes return errors that look like access issues rather than configuration problems

Managing all three independently means writing token storage, refresh logic, and error handling per service before a single line of pipeline logic can be tested. This is where most multi-service automation projects stall.

Scalekit removes that entirely. You configure each connector once in the dashboard and interact with all three through a single interface:

- Configure once, run forever: each connector goes through its authorization flow exactly once, and every subsequent run starts in ACTIVE status immediately

- One call pattern for everything: every API call to every service goes through the same execute_tool() method, with only the connector name and tool name changing between calls

- Zero token management: token expiry, refresh cycles, and connection state are all handled by Scalekit automatically, with no mid-run failures from a credential silently expiring

With that in place, here's how to configure all three connectors in Scalekit.

How to Set Up Your Connectors in Scalekit

Set up all three connectors before writing any code. The agent checks the connector status at startup, and having all three active means you can test the full pipeline from the very first execution without interruption.

Step 1: Create Your Scalekit Account

Go to Scalekit, create a free account, and create a new environment for this project. Copy the SCALEKIT_ENV_URL, SCALEKIT_CLIENT_ID, and SCALEKIT_CLIENT_SECRET from the environment dashboard into your .env file.

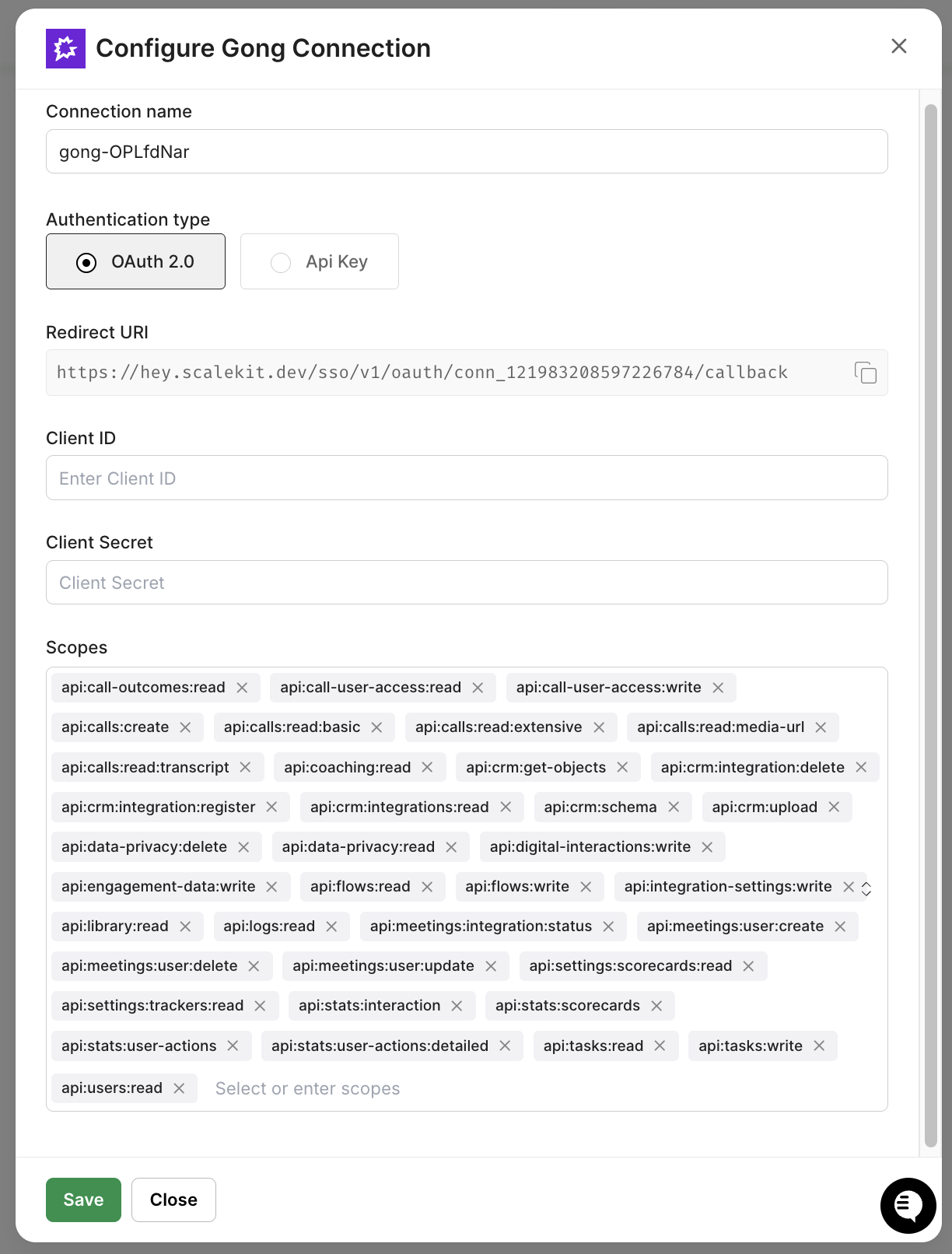

Step 2: Add the Gong Connector

Navigate to Agent Kit → Connections and add a new connection for Gong. Before authorizing, make sure your Gong workspace admin account has the following scopes enabled: api:calls:read, api:calls:transcript:read, and api:users:read. These are required for the agent to pull calls, transcripts, and attendee data.

Authorize using your Gong workspace admin account since workspace-level OAuth scope is needed to pull calls across all users, not just your own. After setup, note the exact connection name; it may include a short suffix like gong-abc12345. Set this as GONG_CONNECTOR in your .env.

Step 3: Add the Attio Connector

Add the Attio connection using your Attio workspace credentials. Attio's OAuth flow requires record:read scope on the deals and people objects. Make sure your workspace role has read access to both before authorizing.

The connection name defaults to attio unless you rename it; the agent uses the literal string "attio" in the code, so keep the default or update the code to match.

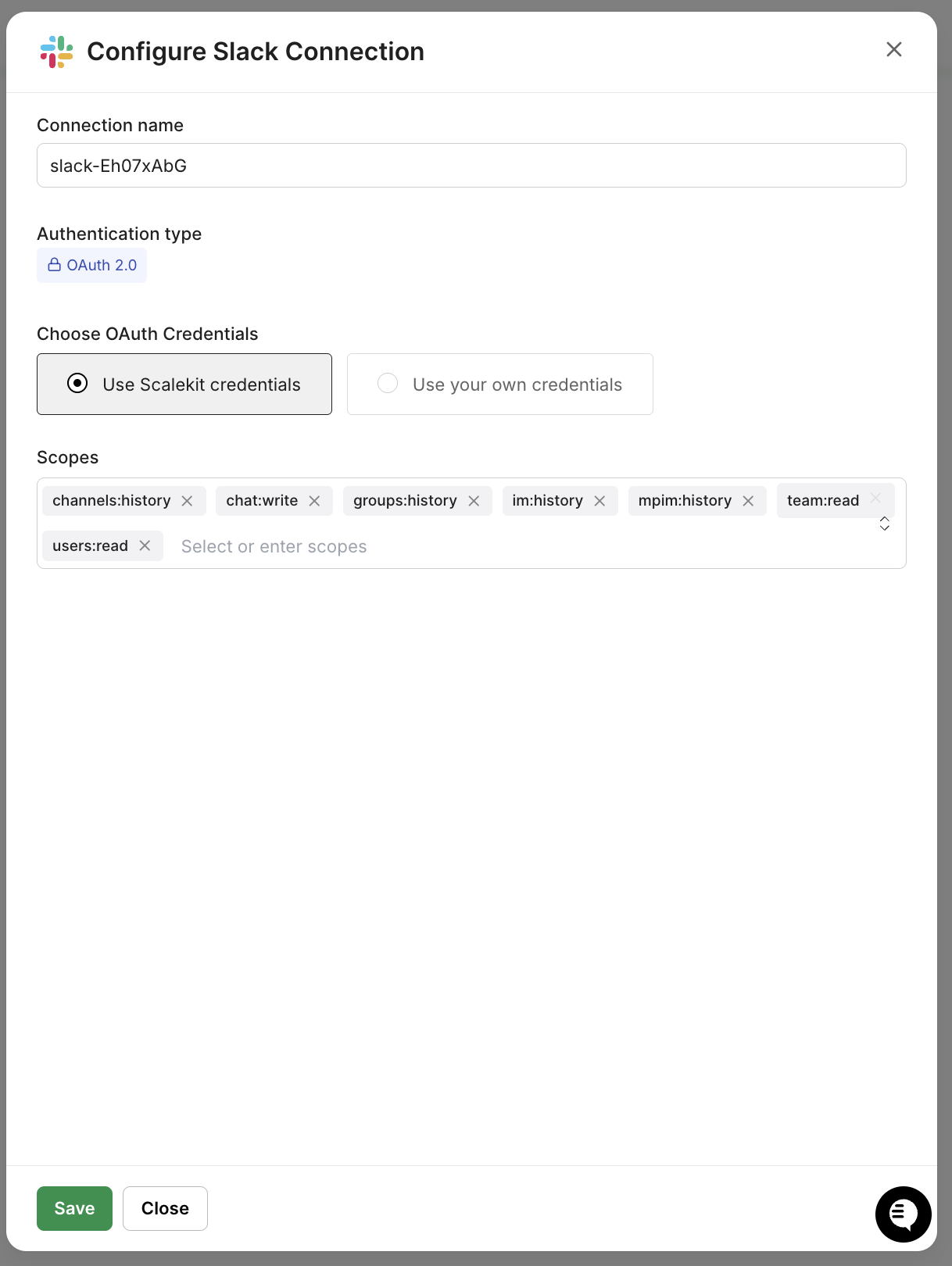

Step 4: Add the Slack Connector

Add Slack and authorize the account that will post the daily report. The OAuth flow requires the following bot scopes: chat:write, chat:write.public, and channels:read. Configure these in your Slack App dashboard before running the OAuth flow; otherwise, the post will silently fail or return unhelpful permission errors.

Make sure the authorized account is already a member of the channel where you want reports posted. After OAuth, get the channel ID by right-clicking the channel in Slack, copying the link, and using the last path segment. Set this as SLACK_DM_USER in your .env.
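If you would rather not pick the ID out of the link by eye, the "last path segment" rule is easy to script. This is a small sketch with a hypothetical helper name (channel_id_from_link) and a made-up example URL; it assumes the copied link has the usual .../archives/<channel-id> shape.

```python
from urllib.parse import urlparse


def channel_id_from_link(link: str) -> str:
    """Return the last path segment of a copied Slack channel link."""
    path = urlparse(link).path.rstrip("/")
    channel_id = path.rsplit("/", 1)[-1]
    if not channel_id:
        raise ValueError(f"No channel ID found in link: {link!r}")
    return channel_id


# Hypothetical link, for illustration only.
print(channel_id_from_link("https://example.slack.com/archives/C0123456789"))
```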

Setting Up Auth with Claude Code

Now that the connectors are configured, you have two ways to get the pipeline code: clone the repo directly and use the code as-is, or use Claude Code to generate it from scratch with the Scalekit plugin handling the auth scaffolding automatically. Either way, the three foundational pieces below are what the entire pipeline depends on.

If you're using Claude Code, install the Scalekit plugin first.

Then give Claude Code this prompt:

"Build a deal intelligence agent: fetch yesterday's Gong calls and transcripts, match each call to an Attio deal, score risk by sentiment + days to close + engagement + objections, and post a ranked report to a Slack channel using Scalekit Agent Auth."

Claude Code produces run_flow.py, which includes the full pipeline. The three foundational pieces it generates are the client setup, the tool() helper, and the auth startup check. Each one is worth understanding before you read the pipeline steps.

The Scalekit Client and Connector Map

The client initialization connects to your Scalekit environment. The CONNECTOR_USERS map specifies the identity to act on behalf of when calling each service. Every execute_tool() call downstream uses this map to route the request to the correct connected account.

The tool() Helper

The tool() function is the single interface for every API call in the pipeline. It wraps execute_tool() with the connector name, user identity, and connection_name parameters so that every service interaction follows the same pattern and routes to the exact right Scalekit connection.

A Gong call looks like tool(GONG_CONNECTOR, "gong_calls_list", from_date_time=from_dt, to_date_time=to_dt). An Attio lookup looks like tool("attio", "attio_list_records", object="deals", limit=50). For Gong and Attio, the tool() wrapper is used throughout. The Slack post in Step 5 calls connect.execute_tool() directly so the response object (including the message timestamp) is available after posting.
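To make the pattern concrete, here is a hedged sketch of how such a wrapper could be put together. It is not the generated run_flow.py: make_tool is a hypothetical factory, the connect object is anything exposing execute_tool(), and the keyword names (tool_name, identifier, connection_name, tool_input) are assumptions based on the description above, so check them against the Scalekit SDK you have installed.

```python
from typing import Any, Callable, Mapping


def make_tool(connect: Any, connector_users: Mapping[str, str]) -> Callable[..., Any]:
    """Build a tool() helper bound to one client and one connector-to-user map."""

    def tool(connector: str, tool_name: str, /, **params: Any) -> Any:
        # Look up whose connected account to act as for this connector.
        identifier = connector_users[connector]
        return connect.execute_tool(
            tool_name=tool_name,
            identifier=identifier,
            connection_name=connector,  # pins the call to the exact connection
            tool_input=params,
        )

    return tool
```

With a helper like this, tool("attio", "attio_list_records", object="deals", limit=50) forwards object and limit as the tool input, and an unknown connector name fails immediately with a KeyError instead of reaching the API.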

The ensure_authorized() Startup Check

The ensure_authorized() function runs once at startup for each connector and confirms it is in ACTIVE status. If a connector needs authorization, it generates a magic link on the spot so you can complete OAuth without going back to the dashboard.
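A minimal sketch of that check might look like the following. get_or_create_connected_account() is named in this tutorial; get_authorization_link(), the .connected_account.status and .link attributes, and the wait parameter are assumptions made for illustration, so verify them against the SDK before relying on this.

```python
from typing import Any, Callable


def ensure_authorized(
    connect: Any,
    connection_name: str,
    identifier: str,
    wait: Callable[[str], Any] = input,
) -> bool:
    """Return True once the connector is ACTIVE, prompting for OAuth if needed."""

    def current_status() -> str:
        response = connect.get_or_create_connected_account(
            connection_name=connection_name, identifier=identifier
        )
        return response.connected_account.status

    if current_status() == "ACTIVE":
        print(f"{connection_name}: ACTIVE")
        return True

    # Not active yet: print a magic link and pause until the user finishes OAuth.
    link = connect.get_authorization_link(
        connection_name=connection_name, identifier=identifier
    ).link
    print(f"{connection_name}: authorization needed, open {link}")
    wait("Press Enter once authorization is complete... ")
    return current_status() == "ACTIVE"
```

Passing wait as a parameter keeps the function testable and lets a scheduled run replace the interactive prompt with something that fails fast.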

On the first run, this function pauses for any connector that needs authorization. On every subsequent run, all three connectors print ACTIVE, and the pipeline proceeds immediately with no interaction required. With auth handled, here's what executes every morning.

The Five-Step Agent Pipeline

Each step is scoped and independent: a failure in the Attio lookup for one call doesn't prevent the rest from being analyzed, and the Slack post always runs last, regardless of what happened upstream.

Step 1: Auth Check

The agent validates all three connectors before doing any real work. This prevents wasted API calls if a token has been revoked, a situation that can happen when a user changes their password or revokes access in the third-party service's settings.

get_or_create_connected_account() is idempotent. On the first call, it creates the account record in Scalekit. On every subsequent call, it returns the existing record with its current status. No API calls to Gong, Attio, or Slack are made at this step.

Step 2: Fetching Yesterday's Calls from Gong

The agent queries Gong for all calls in the previous 24-hour window. One critical detail: gong_calls_list requires full ISO 8601 datetime strings — passing a date-only string like 2024-01-15 silently returns no results rather than an error, making it look like no calls exist for that day.

For each call in the list, the agent fetches the full transcript using the call ID:

If the transcript is shorter than 30 characters, the call is skipped. Gong takes 10–15 minutes to finish transcribing after a call ends, so this guard prevents the agent from analyzing an empty or partial transcript.
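The guard can be expressed as a small generator, sketched here under a few assumptions: ready_transcripts and fetch_transcript are hypothetical names, each call is assumed to be a dict with an "id" key, and the fetcher is assumed to return the transcript as plain text (or None when nothing is available).

```python
from typing import Callable, Iterable, Iterator, Optional

MIN_TRANSCRIPT_CHARS = 30  # threshold described in this tutorial


def ready_transcripts(
    calls: Iterable[dict],
    fetch_transcript: Callable[[str], Optional[str]],
) -> Iterator[tuple[dict, str]]:
    """Yield (call, text) pairs, skipping calls whose transcript is not usable yet."""
    for call in calls:
        try:
            text = (fetch_transcript(call["id"]) or "").strip()
        except Exception as exc:  # one failed fetch should not stop the queue
            print(f"call {call['id']}: transcript fetch failed ({exc}), skipping")
            continue
        if len(text) < MIN_TRANSCRIPT_CHARS:
            print(f"call {call['id']}: transcript not ready, skipping")
            continue
        yield call, text
```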

Step 3: Analyzing Each Transcript

With the transcript text in hand, the agent extracts four structured signals: sentiment, engagement level, competitor mentions, and objections. With OPENROUTER_API_KEY set, an LLM performs the analysis at temperature 0 to keep the output as consistent as possible across runs. Without that setting, the same transcript can yield different sentiment scores on different days:
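As an illustration of the LLM path, the sketch below builds (but does not send) a request to OpenRouter's OpenAI-compatible chat completions endpoint with temperature set to 0. The prompt wording, the JSON keys, the helper names, and the default model string are assumptions for this example, not the repo's actual values.

```python
import json
import urllib.request

OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"

# Hypothetical prompt; the repo's prompt may differ.
SYSTEM_PROMPT = (
    "You analyze sales call transcripts. Reply with JSON only, using the keys "
    "sentiment (positive|neutral|negative), engagement (high|medium|low), "
    "competitors (list of strings), objections (list of strings)."
)


def build_analysis_request(
    transcript: str, api_key: str, model: str = "openai/gpt-4o-mini"
) -> urllib.request.Request:
    """Build the HTTP request for one transcript analysis."""
    payload = {
        "model": model,
        "temperature": 0,  # reduces run-to-run variation
        "messages": [
            {"role": "system", "content": SYSTEM_PROMPT},
            {"role": "user", "content": transcript},
        ],
    }
    return urllib.request.Request(
        OPENROUTER_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def parse_analysis(response_body: bytes) -> dict:
    """Extract the model's JSON reply from a chat completions response."""
    content = json.loads(response_body)["choices"][0]["message"]["content"]
    return json.loads(content)
```

Sending the request is a matter of passing it to urllib.request.urlopen() and handing the response body to parse_analysis(); any exception raised along the way is a natural trigger for the rule-based fallback.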

If no key is configured or the LLM call fails, the agent falls back automatically to a rule-based analyzer that costs nothing and requires no configuration:
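The fallback behaviour can be sketched as a thin wrapper. Everything here is illustrative: analyze_with_fallback, the callables it accepts, and the "source" key are hypothetical names, and checking for a "sentiment" key is just one simple way to reject malformed LLM output.

```python
from typing import Callable, Optional

Analyzer = Callable[[str], dict]


def analyze_with_fallback(
    transcript: str,
    rule_based: Analyzer,
    llm: Optional[Analyzer] = None,
) -> dict:
    """Try the LLM analyzer first; fall back to rules on any failure."""
    if llm is not None:
        try:
            result = llm(transcript)
            # Treat malformed output the same way as a failed call.
            if isinstance(result, dict) and "sentiment" in result:
                return {**result, "source": "llm"}
            print("LLM returned unusable output; using rule-based analyzer")
        except Exception as exc:
            print(f"LLM analysis failed ({exc}); using rule-based analyzer")
    return {**rule_based(transcript), "source": "rules"}
```

Recording which analyzer produced each result makes it easier to explain a surprising score when reviewing the morning brief.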

The rule-based analyzer uses signal word counting, regex pattern matching for objection phrases, and question-mark frequency as an engagement proxy:
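Here is a rough sketch of what those three techniques can look like together. The word lists, objection patterns, and engagement thresholds are placeholders invented for this example; the repo's analyzer will have its own vocabulary and cutoffs.

```python
import re

# Placeholder vocabularies, for illustration only.
NEGATIVE_WORDS = {"concerned", "expensive", "frustrated", "problem", "delay", "cancel"}
POSITIVE_WORDS = {"great", "love", "excited", "perfect", "helpful", "agree"}
OBJECTION_PATTERNS = {
    "pricing": re.compile(r"\b(too expensive|over budget|can't afford)\b", re.I),
    "timing": re.compile(r"\b(not right now|next quarter|too soon)\b", re.I),
    "authority": re.compile(r"\b(need approval|check with my|run it by)\b", re.I),
}


def analyze_rule_based(transcript: str, competitors: tuple[str, ...] = ()) -> dict:
    """Derive sentiment, engagement, objections, and competitor mentions."""
    words = re.findall(r"[a-z']+", transcript.lower())
    positive = sum(word in POSITIVE_WORDS for word in words)
    negative = sum(word in NEGATIVE_WORDS for word in words)
    if negative > positive:
        sentiment = "negative"
    elif positive > negative:
        sentiment = "positive"
    else:
        sentiment = "neutral"

    # Question marks per 100 words as a rough engagement proxy.
    question_rate = 100 * transcript.count("?") / max(len(words), 1)
    engagement = "high" if question_rate >= 3 else "medium" if question_rate >= 1 else "low"

    objections = [name for name, pattern in OBJECTION_PATTERNS.items() if pattern.search(transcript)]
    mentioned = [
        name for name in competitors
        if re.search(rf"\b{re.escape(name)}\b", transcript, re.I)
    ]
    return {
        "sentiment": sentiment,
        "engagement": engagement,
        "objections": objections,
        "competitors": mentioned,
    }
```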

Step 4: Cross-Referencing with Attio

Sentiment signals only tell part of the story — a 67% risk score on a $12,000 discovery call reads very differently from a 67% risk score on an $84,000 renewal closing in four days. The deal stage, close date, and value come from Attio and are what make the score actionable.

The agent pre-fetches all deals once and matches locally. This is more efficient than a per-call API lookup and more reliable than Attio's text-search endpoint, which does fuzzy matching across all fields regardless of the query string:

Matching uses two strategies: email first, and company name prefix as a fallback. Gong doesn't always return external-party emails for telephony calls, so the fallback ensures the agent still finds the right deal when email data is unavailable. The call title format "Company Name -- Call Type" is the only naming convention required for this to work:
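The two-stage match can be sketched as follows. The flat dict shapes (a call with "title" and "emails", a deal with "name" and "emails") are simplifications assumed for this example; real Gong and Attio responses are nested and need flattening first, and match_deal is a hypothetical name.

```python
from typing import Optional


def company_prefix(call_title: str) -> str:
    """Return the lowercased company segment of 'Company Name -- Call Type'."""
    return call_title.split("--", 1)[0].strip().lower()


def match_deal(call: dict, deals: list[dict]) -> Optional[dict]:
    """Match by participant email first, then by company-name prefix."""
    call_emails = {email.lower() for email in call.get("emails", [])}
    if call_emails:
        for deal in deals:
            deal_emails = {email.lower() for email in deal.get("emails", [])}
            if call_emails & deal_emails:
                return deal

    prefix = company_prefix(call.get("title", ""))
    if prefix:
        for deal in deals:
            if deal.get("name", "").lower().startswith(prefix):
                return deal
    return None  # caller reports this call under "Unknown Deal"
```

Because the fallback is a prefix test, a call titled "Acme Corp -- Demo" will not match a deal named just "Acme", which is why consistent naming between the two tools matters.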

If no deal is found, as with a first call with a new prospect who has no Attio record yet, the call still appears in the report under "Unknown Deal" metadata. The signal is worth surfacing even without CRM context.

Step 5: Risk Scoring and Posting to Slack

The risk score translates the qualitative signals from the call analysis into a single 0.0–1.0 number, making it easy to sort and prioritize deals without reading through each call summary.
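As a worked illustration of such a formula, here is a hedged sketch. Only the 40% sentiment weight comes from this tutorial; the other three weights, the label-to-number mappings, the 90-day decay, and the cap of three objections are assumptions chosen so the weights sum to 1.0.

```python
from datetime import date
from typing import Optional

SENTIMENT_RISK = {"positive": 0.0, "neutral": 0.5, "negative": 1.0}
ENGAGEMENT_RISK = {"high": 0.0, "medium": 0.5, "low": 1.0}

# Only the 0.40 sentiment weight is from the article; the rest are assumed.
WEIGHTS = {"sentiment": 0.40, "days_to_close": 0.30, "engagement": 0.20, "objections": 0.10}


def days_to_close_risk(close_date: Optional[date], today: date) -> float:
    """Closer (or overdue) close dates score higher; unknown dates score 0.5."""
    if close_date is None:
        return 0.5
    days = (close_date - today).days
    if days <= 0:
        return 1.0
    return max(0.0, 1.0 - days / 90)  # assumed linear decay over 90 days


def risk_score(
    sentiment: str,
    engagement: str,
    objection_count: int,
    close_date: Optional[date],
    today: date,
) -> float:
    """Combine the four signals into a single 0.0 to 1.0 score."""
    parts = {
        "sentiment": SENTIMENT_RISK.get(sentiment, 0.5),
        "days_to_close": days_to_close_risk(close_date, today),
        "engagement": ENGAGEMENT_RISK.get(engagement, 0.5),
        "objections": min(objection_count, 3) / 3,
    }
    return round(sum(WEIGHTS[name] * value for name, value in parts.items()), 2)
```

Under these assumed weights, a negative, low-engagement call with three objections on a deal closing in four days scores 0.99, while a positive, highly engaged call on a deal several months out scores 0.0.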

Sentiment carries 40% because a prospect who is actively frustrated or pushing back is the strongest available signal of intent; engagement and objections amplify or dampen that reading, and days to close add urgency. After scoring, deals are sorted, and the top five are posted to Slack:

Note that connection_name=SLACK_CONNECTOR is passed explicitly on this call. Without it, Scalekit routes to any active Slack connection associated with the identifier, which may be a different workspace than intended if multiple Slack accounts are authorized.
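The ranking and posting step can be sketched like this. The message layout and the deal dict keys are invented for illustration; slack_send_message is the tool name this tutorial mentions, while the execute_tool() keyword names and the channel/text input keys are assumptions to verify against your Scalekit connector.

```python
from typing import Any


def build_brief(deals: list[dict], top_n: int = 5) -> str:
    """Rank deals by risk (highest first) and render the top entries as text."""
    ranked = sorted(deals, key=lambda deal: deal["risk"], reverse=True)[:top_n]
    lines = ["Daily deal risk brief"]
    for rank, deal in enumerate(ranked, start=1):
        stage = deal.get("stage", "Unknown stage")
        close = deal.get("close_date", "n/a")
        lines.append(f"{rank}. {deal['name']} | risk {deal['risk']:.2f} | {stage} | closes {close}")
    return "\n".join(lines)


def post_brief(
    connect: Any, text: str, *, channel: str, identifier: str, connection_name: str
) -> Any:
    """Post the brief, pinning the call to one named Slack connection."""
    return connect.execute_tool(
        tool_name="slack_send_message",
        identifier=identifier,
        connection_name=connection_name,  # explicit, as the note above advises
        tool_input={"channel": channel, "text": text},
    )
```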

How Do You Run and Schedule This Agent in Production?

With connectors active and the .env file configured, start the agent:

The agent prints a live status update at each stage. Here is what a typical five-call run looks like:

For continuous daily operation, schedule the agent using cron on macOS or Linux:

Or deploy it as a GitHub Actions scheduled workflow:

Store your .env values as GitHub Actions secrets. The OAuth tokens stay in Scalekit's encrypted token store — only the Scalekit credentials and user identifiers are passed into the runner environment.

The pipeline runs cleanly in development, but a few configuration details and edge cases are worth locking down before you hand it off to a cron job. Here's what to check.

What Are the Common Failure Points to Address Before Production Deployment?

Most first-run failures aren't logic errors; they're small setup mismatches that produce confusing output. Here's what to verify before scheduling the agent for daily use:

- Transcripts not ready: Gong takes 10–15 minutes to transcribe after a call ends. The agent skips calls with transcripts shorter than 30 characters, so late-evening calls won't break the run; they just won't appear that morning. Re-run manually later in the day to pick them up.

- LLM returning bad output: Every LLM call has automatic fallback to the rule-based analyzer. Before relying on LLM analysis in production, test your chosen model against real transcripts. Smaller free models produce inconsistent results on nuanced calls. Switching to gpt-4o-mini on OpenRouter significantly improves accuracy.

- Attio missing deals: The agent fetches 50 deals per run by default. If your workspace has more, increase the limit parameter or add pagination. Also, make sure deal names in Attio consistently match the first segment of your Gong call titles — "Acme" vs "Acme Corp" will cause missed matches.

- Gong connector name mismatch: The connector name in Scalekit may include a suffix like gong-wkAzsGmi. Set GONG_CONNECTOR to the exact string in your dashboard. Also, verify that at least one user has Telephony calls enabled under Company Settings → Users → Data Capture.

- Attio email filter format: Always use filter={"email_addresses": "user@example.com"}, the flat string format. Nested formats return a constraint error. Never use attio_search_records for email lookups; it does fuzzy matching and returns unreliable results.

- Slack channel access: The authorized Slack account must be a member of the channel in SLACK_DM_USER; otherwise, you'll get channel_not_found even with the correct ID. Always pass connection_name=SLACK_CONNECTOR explicitly on the execute_tool() call.

- Gong datetime format: Pass full ISO 8601 strings: "2024-01-15T07:00:00Z". A date-only string silently returns empty results with no error.

- Rate limits: Gong allows 3 requests per second; add time.sleep(0.3) between transcript fetches if you're above 100 calls per day. Attio's 100 requests-per-minute limit is never a concern with the pre-fetch approach. Slack's limit is irrelevant at one message per run.
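For the rate-limit item, a small throttling generator is one way to space out transcript fetches. This is a sketch with a hypothetical helper name; the 0.34-second default is an assumption chosen to stay just under three requests per second, slightly more conservative than the time.sleep(0.3) mentioned above.

```python
import time
from typing import Callable, Iterable, Iterator, TypeVar

T = TypeVar("T")


def throttled(
    items: Iterable[T],
    min_interval: float = 0.34,
    sleep: Callable[[float], None] = time.sleep,
    clock: Callable[[], float] = time.monotonic,
) -> Iterator[T]:
    """Yield items no faster than one per min_interval seconds."""
    last = None
    for item in items:
        if last is not None:
            remaining = min_interval - (clock() - last)
            if remaining > 0:
                sleep(remaining)
        last = clock()
        yield item
```

Wrapping the call list, as in for call in throttled(calls): ..., keeps the pacing logic out of the fetch code, and time already spent on each request counts toward the interval.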

Conclusion

The data was never the problem; every call is in Gong, every deal is in Attio, and the signals that predict which ones will slip are sitting in both. What was missing was the daily, automated connection between those two sources that surfaces deals trending toward loss before the forecast call starts, and that's exactly what this agent delivers.

Every morning, it runs the same cycle: fetch calls, analyze transcripts, match to CRM, score risk, and post to Slack. A sales leader opens Slack and sees a prioritized brief with the most at-risk deals at the top, complete with deal stage and close date from Attio, specific objections and competitor mentions pulled from the Gong transcript, and next steps sourced from what was actually discussed on the call.

The same architecture extends naturally from here. Pipeline health scores can be written back to Attio for team-wide visibility, risk trends across consecutive calls can trigger escalation notifications, and deal owner routing can deliver personalized briefs to each rep instead of a shared channel. Once the Scalekit connectors are in place and the execute_tool() pattern is established, adding a new signal or action means updating the pipeline logic rather than rebuilding the auth layer underneath it.

FAQ

Why use Scalekit instead of calling each API directly?

Each service has its own auth flow, token format, and refresh schedule. Scalekit collapses all of it into a single execute_tool() call per service and automatically handles token refresh, expiry checking, and connection state. Auth goes from a multi-day implementation problem to a 20-minute configuration step in the dashboard.

Does Scalekit handle token refresh automatically?

Yes. It checks token expiry on every execute_tool() call and refreshes using the stored refresh token when needed. There is no refresh logic in the agent code, and no mid-run failures due to a token expiring during execution.

Can I use this with a different CRM or messaging tool?

Yes. The tool() helper is service-agnostic. If your CRM is HubSpot or Salesforce, replace the Attio calls with the corresponding Scalekit connector tool names. If your team uses Microsoft Teams instead of Slack, replace slack_send_message with the Teams equivalent. The pipeline logic — risk scoring, transcript analysis, and sorting — does not change.

Can I run this for multiple sales teams or regions?

Yes. Each team gets its own .env file with different Scalekit identifiers, different connector names, and a different SLACK_DM_USER channel. Run a separate instance per team. Scalekit manages each set of connected accounts independently under the same environment.