API Access Patterns for AI Agents: Identity Architecture for B2B SaaS

TL;DR

- AI agents are shifting SaaS from user-triggered actions to continuous autonomous execution. Traditional OAuth models were designed for short-lived human sessions, not long-running agent workflows operating across tenants.

- API access patterns now determine production reliability. Token expiration, consent revocation, and over-scoped credentials are common failure points in real-world AI deployments.

- Each access model introduces structural tradeoffs. Delegated OAuth improves auditability, service accounts simplify operations but centralize risk, hybrid models enable contextual identity switching, and scoped access reduces blast radius.

- Access architecture must follow workload sensitivity. Enterprise compliance, tenant isolation, and action-level risk, not implementation convenience, should determine identity boundaries.

- Scalekit operationalizes identity as infrastructure. Connected accounts, tenant-bound identifiers, and centralized token lifecycle management enforce secure API access patterns for AI agents in production environments.

AI agents are becoming operational actors inside B2B SaaS platforms. They typically authenticate to an identity layer using service credentials or workload identities, then invoke downstream APIs on behalf of users or organizations. Because these agents execute long-running workflows across multiple systems without continuous human control, the way they access APIs becomes a critical architectural concern.

This article builds on earlier discussions around agent authentication and OAuth architectures. If you are new to this topic, see OAuth vs API Keys for AI Agents, What Is Agent Authentication?, and OAuth for AI Agents: Production Architecture and Practical Implementation Guide. These guides explain how agents authenticate and obtain delegated authority. This article focuses specifically on how API access patterns structure that authority once authentication succeeds.

We examine four access models commonly used in modern AI SaaS systems: delegated OAuth access, service accounts, hybrid identity models, and scoped capability tokens. Each pattern introduces different tradeoffs in auditability, operational complexity, and security risk.

Once an AI agent is authenticated, the next question is how its authority should be represented when interacting with customer APIs.

What Breaks When an AI Agent Starts Acting on Customer APIs?

Consider a B2B SaaS platform deploying an AI agent to automate CRM updates, ticket creation, and billing synchronization across external services. In staging environments, authentication often appears stable because tokens are fresh and execution paths are simple. Production environments introduce different lifecycle behavior.

Access tokens may expire during long-running workflows. Many OAuth providers enforce refresh token rotation, meaning each refresh issues a new refresh token and invalidates the previous one. When AI agents perform concurrent retries, parallel refresh requests can present a token that has already been rotated and trigger refresh token reuse detection, a safeguard described in the OAuth 2.0 Security Best Current Practice (RFC 9700). Without a configured concurrency grace period, parallel refresh attempts can invalidate each other and cause intermittent authentication failures. Administrators may also revoke application consent while workflows are still executing, and customers often require audit logs showing whether an action was performed as a user or as the platform.
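One common mitigation for the concurrent-refresh failure is to serialize refreshes so that a rotated refresh token is presented only once. The following is a minimal sketch under stated assumptions, not a definitive implementation: `TokenPair`, `SingleFlightRefresher`, and the injected `refresh_fn` are hypothetical names, and the lock only protects a single process. A multi-process deployment would need a shared lock or a provider-side grace period instead.

```python
import threading
import time
from dataclasses import dataclass
from typing import Callable


@dataclass
class TokenPair:
    access_token: str
    refresh_token: str
    expires_at: float  # epoch seconds


class SingleFlightRefresher:
    """Serializes refreshes so each rotated refresh token is used at most once."""

    def __init__(
        self,
        initial: TokenPair,
        refresh_fn: Callable[[str], TokenPair],  # hypothetical: calls the token endpoint
        skew: float = 30.0,
    ) -> None:
        self._tokens = initial
        self._refresh_fn = refresh_fn
        self._skew = skew  # refresh slightly before the real expiry
        self._lock = threading.Lock()

    def get_access_token(self) -> str:
        with self._lock:
            # Check inside the lock: a concurrent caller may already have refreshed.
            if time.time() >= self._tokens.expires_at - self._skew:
                self._tokens = self._refresh_fn(self._tokens.refresh_token)
            return self._tokens.access_token
```

Callers that arrive while a refresh is in flight wait on the lock and then reuse the new token, so only one refresh request reaches the provider per expiry.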

These failures rarely originate from the AI model itself. They emerge from identity and authorization assumptions designed for short-lived human sessions rather than for autonomous systems that execute continuously across tenants.

API access patterns, therefore, become an architectural decision rather than an implementation detail. The way authority is represented determines auditability, tenant isolation, and blast radius in the event of failures.

Why Traditional OAuth Assumptions Fail for Autonomous AI Agents

Traditional OAuth access models were designed for user-driven applications where actions occur within short-lived, human-initiated sessions. A user authenticates, grants consent, performs actions within an active session, and exits. The access token represents that user during a short execution window where identity, intent, and API calls are closely aligned. This model works well for request-response applications.

Autonomous AI agents operate under different constraints. For example, an AI workflow that creates support tickets or updates CRM records based on customer activity may retry failed API calls minutes or hours after the initiating user session has ended. When this happens, the agent must continue operating without an active human session, which breaks the assumptions underlying traditional OAuth session models. Several characteristics of agent workloads drive this mismatch:

- Long-running workflows that continue after the initiating user session ends

- Background retries and scheduling, where no human is present

- Concurrent multi-tenant execution, with different scope boundaries per customer

- Dynamic consent changes, where administrators revoke or modify permissions mid-process

- Operational scale, where thousands of delegated tokens may need lifecycle management

The challenge is not the OAuth protocol itself, but the assumptions around how tokens are used: that authentication, authorization, and API calls all occur within one narrow, human-attended time window. Autonomous workflows violate each of those assumptions, because execution may continue long after the initiating user session has ended.

Modern AI SaaS API design must therefore extend beyond simple user delegation. It must explicitly define how identity is represented, how scope is constrained, and how authority evolves during autonomous execution. Those design decisions lead directly to the access patterns.

The Four API Access Patterns Used by AI Agents

In practice, AI agents interact with external APIs using a small set of recurring authorization models. These models define how the agent represents authority when executing actions on behalf of users or organizations.

The most common API access patterns for AI agents are:

- Direct delegated access: the agent calls APIs using OAuth tokens issued for a specific user.

- Service account access: the agent operates under a system identity rather than a user.

- Hybrid access models: the agent switches between user and service identities depending on the workflow stage.

- Capability tokens: the agent receives narrowly scoped, short-lived tokens for a specific action.

Each pattern solves a different problem in identity architecture. The sections below examine when each model is appropriate and the tradeoffs it introduces in terms of auditability, operational complexity, and security risk.

When Direct Delegated Access Using OAuth Makes Sense for AI Agents

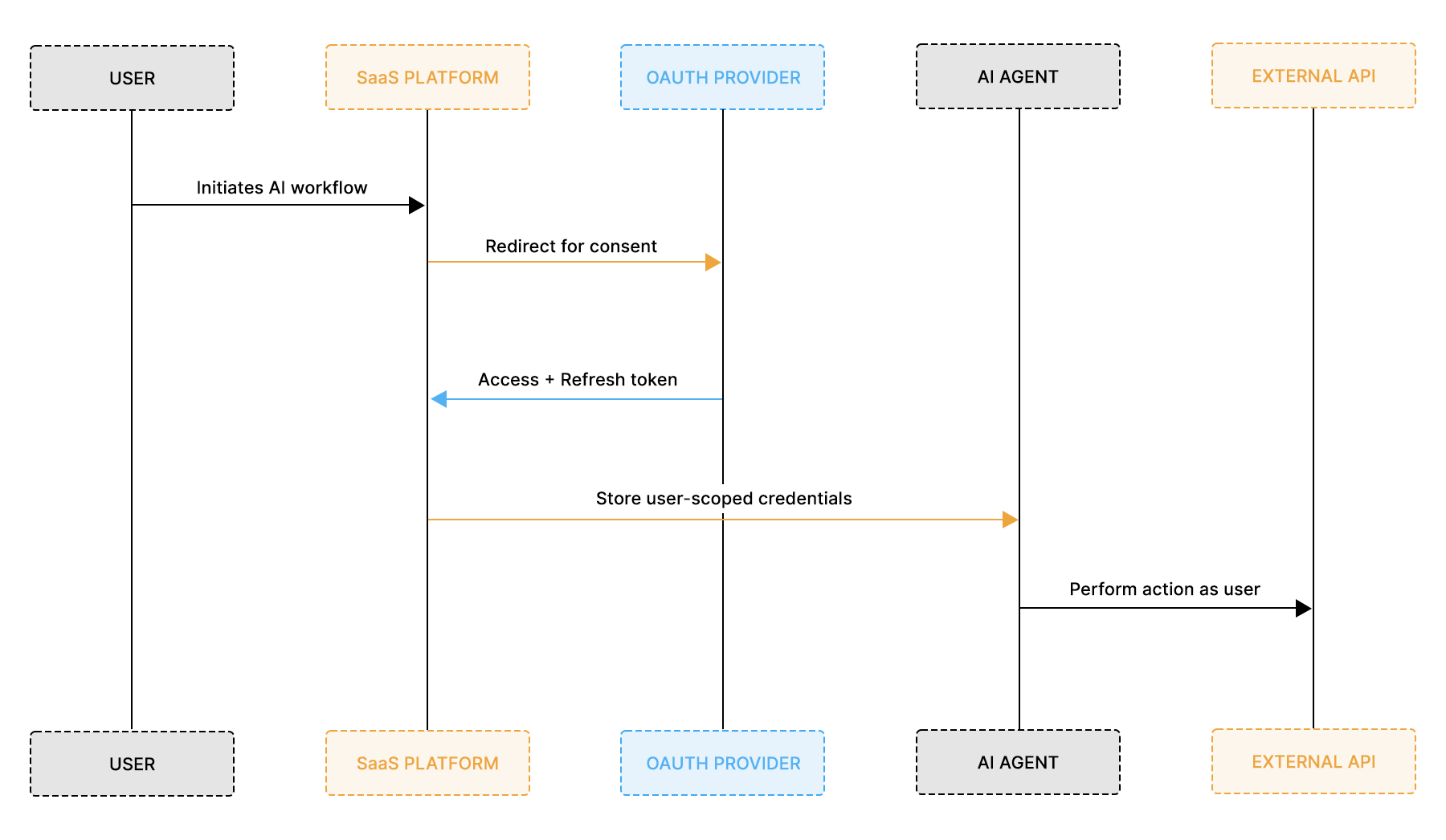

Direct delegated access binds every AI action to a specific end user through the OAuth authorization code flow. The agent stores user-scoped access and refresh tokens, and every downstream API call is executed under that user’s identity. From an audit and compliance standpoint, this model mirrors how traditional SaaS applications operate. For example, an AI sales assistant updating Salesforce opportunities must ensure each modification is attributable to the specific account executive who granted consent. Delegation preserves that direct human-to-action mapping.

In this pattern, the flow typically looks like this:

Each action taken by the AI agent can be traced back to a specific user who granted consent. Scope restrictions apply at the user level. Revocation removes the agent’s ability to act on behalf of that individual. For enterprise customers that require strong attribution and least-privilege enforcement, this model aligns naturally with compliance expectations.

However, operational complexity increases as scale grows. A system with 10,000 users may need to manage 10,000 refresh tokens. Long-running workflows must gracefully handle token expiration. Background retries must ensure a valid delegation context exists at execution time. If consent is revoked mid-process, the workflow must fail safely without corrupting the state.
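The fail-safe requirement can be illustrated with a small sketch. It assumes a hypothetical in-memory `DelegationStore` standing in for a real per-user token vault, and a hypothetical `run_steps` helper. The delegation is re-resolved before every step, so a revocation halts the workflow at a step boundary and reports what completed instead of failing halfway through a write.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple


class ConsentRevoked(Exception):
    """Raised when no valid delegation exists for the user."""


@dataclass
class DelegationStore:
    # user_id -> access token; a stand-in for a real per-user token vault
    tokens: Dict[str, str] = field(default_factory=dict)

    def token_for(self, user_id: str) -> str:
        token = self.tokens.get(user_id)
        if token is None:
            raise ConsentRevoked(user_id)
        return token


def run_steps(
    user_id: str,
    steps: List[Tuple[str, Callable[[str], None]]],
    store: DelegationStore,
) -> dict:
    completed: List[str] = []
    for name, step in steps:
        try:
            # Re-resolve the delegation before each step, not once per workflow.
            token = store.token_for(user_id)
        except ConsentRevoked:
            # Stop at a step boundary and record where to resume.
            return {"status": "halted", "completed": completed, "pending": name}
        step(token)
        completed.append(name)
    return {"status": "done", "completed": completed, "pending": None}
```

Returning the pending step, rather than raising, lets an orchestrator decide whether to request fresh consent or abandon the run.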

Direct delegated access works best when:

- Actions must be attributable to individual users

- External APIs require explicit per-user consent

- Enterprise auditability is a primary requirement

- Scope boundaries differ significantly between users

This model provides strong attribution and isolation, but it shifts operational burden onto token lifecycle management. As AI agents scale, that burden becomes a central architectural concern rather than a minor implementation detail.

When a Service Account Model Simplifies or Amplifies Risk for AI Agents

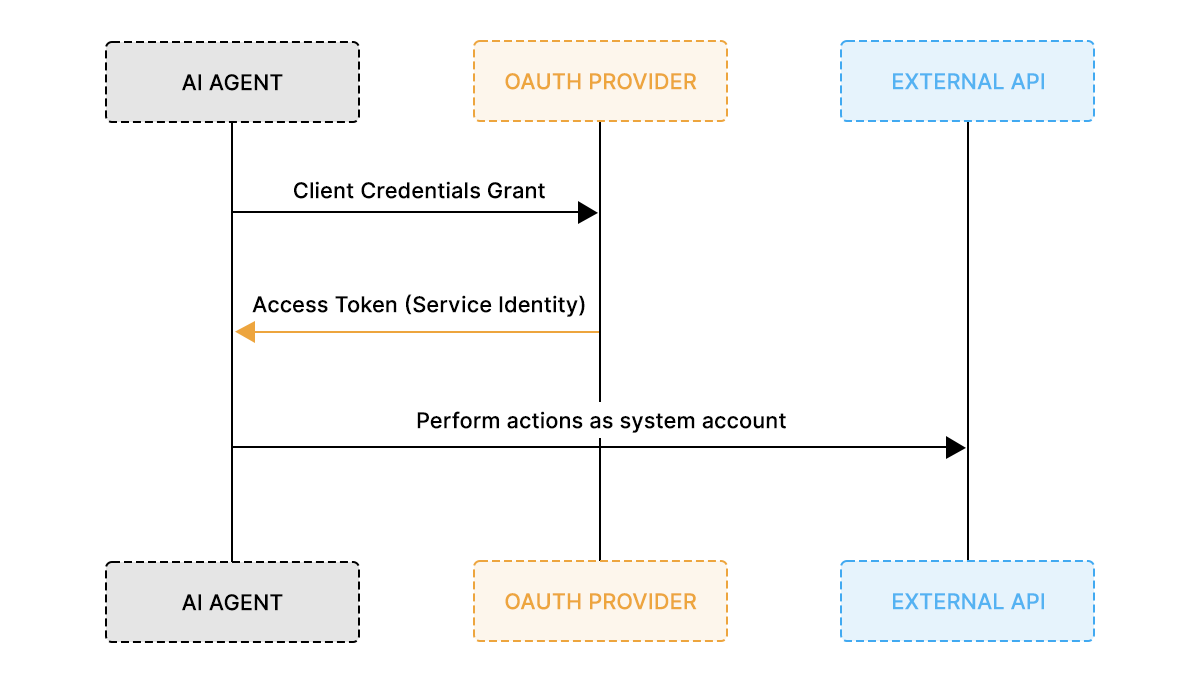

The service account model centralizes authority under a non-human identity. Instead of storing per-user OAuth tokens, the AI agent authenticates using a machine credential and executes actions under a system-level identity rather than an individual user.

In standards-based implementations, this identity is typically issued through the OAuth 2.0 Client Credentials grant (RFC 6749), which produces a scoped, revocable access token for the service. Some platforms instead rely on static API keys, which function as long-lived credentials with limited lifecycle controls. For enterprise B2B SaaS systems, client credentials are generally preferred because they support token expiry, rotation, and revocation, providing stronger lifecycle management and auditability.
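For reference, a Client Credentials token request has a small, fixed shape. The sketch below only builds the headers and form body described in RFC 6749 section 4.4 and does not send anything. The function name, client ID, and scope names are illustrative assumptions, and the Basic header construction assumes the ID and secret contain only URL-safe characters.

```python
import base64
from typing import Dict, List, Tuple
from urllib.parse import urlencode


def build_client_credentials_request(
    client_id: str, client_secret: str, scopes: List[str]
) -> Tuple[Dict[str, str], str]:
    """Return (headers, body) for an RFC 6749 section 4.4 token request."""
    # HTTP Basic client authentication; assumes URL-safe id and secret.
    basic = base64.b64encode(f"{client_id}:{client_secret}".encode()).decode()
    headers = {
        "Authorization": f"Basic {basic}",
        "Content-Type": "application/x-www-form-urlencoded",
    }
    # Request only the scopes the background job needs.
    body = urlencode({"grant_type": "client_credentials", "scope": " ".join(scopes)})
    return headers, body


headers, body = build_client_credentials_request(
    "billing-agent", "s3cret", ["billing.read", "billing.sync"]
)
```

Keeping the scope list explicit at the call site makes over-scoping visible in code review, which matters because this credential is shared by every workflow that uses it.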

In this model, the access flow is structurally simpler:

A nightly billing reconciliation job that synchronizes subscription data across systems, for instance, does not require per-user attribution and can run reliably under a tightly scoped service credential. Background ingestion and analytics pipelines often benefit from this simplified identity boundary.

There are no per-user refresh tokens to manage. Consent flows are simplified. Background jobs can execute without checking whether a user session is still valid. For ingestion pipelines, analytics aggregation, or non-sensitive synchronization tasks, this model often improves reliability.

However, centralization increases blast radius. If the service credential is over-scoped, the agent may have broader access than any individual user. Audit trails become less precise because actions are attributed to a system identity rather than a human principal. Revoking access requires rotating system-level credentials, which may impact all tenants simultaneously.

The service account model is typically appropriate when:

- The agent performs platform-level or background operations

- User-level attribution is not required

- API scopes are stable and tightly constrained

- Operational simplicity is prioritized over granular delegation

While this approach reduces lifecycle complexity, it trades individual attribution for centralized authority. In multi-tenant AI SaaS environments, that trade-off must be carefully evaluated against compliance, isolation, and enterprise trust requirements.

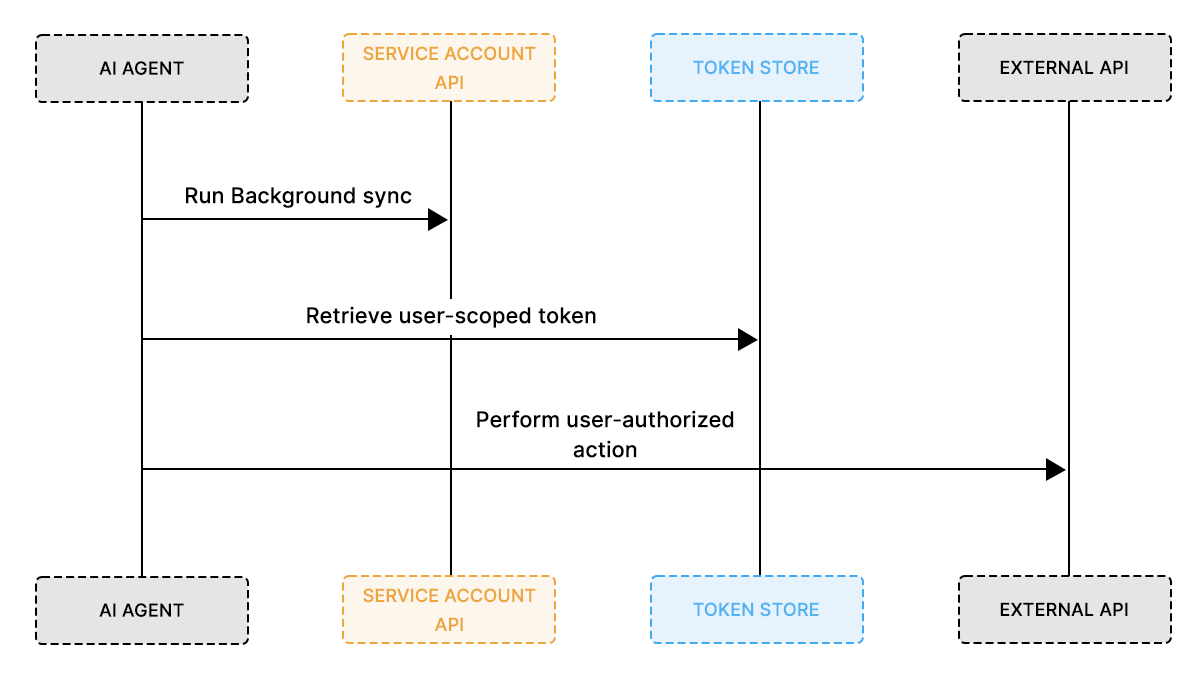

How a Hybrid Access Model Balances Auditability and Operational Scale

A hybrid access model combines user-delegated OAuth with service-level credentials, allowing the AI agent to switch identity context based on the type of action being performed. Instead of committing entirely to per-user delegation or centralized authority, the system explicitly separates background operations from user-bound actions.

In practice, the execution model looks like this:

Under this design, low-risk or system-level operations run under a controlled service identity. Actions that modify user-owned resources execute under delegated user tokens. The agent runtime decides which identity to apply based on the workflow stage, required scope, and audit expectations. An AI DevOps assistant, for example, may analyze pull requests using a service identity but create or modify GitHub issues under the initiating engineer’s delegated access.

This separation reduces token sprawl compared to pure delegation while preserving strong attribution where it matters. It also narrows the blast radius. If a service credential is compromised, only background capabilities are affected. If a user revokes consent, only that user’s delegated actions fail, not the entire system.
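The per-action identity decision can be sketched as a small pure function. All names here (`Action`, `IdentityContext`, `select_identity`) are hypothetical, and the single `modifies_user_resource` flag is a simplifying assumption. A real runtime would likely also weigh required scope and audit policy.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class IdentityKind(Enum):
    SERVICE = "service"
    USER = "user"


@dataclass(frozen=True)
class Action:
    name: str
    modifies_user_resource: bool


@dataclass(frozen=True)
class IdentityContext:
    kind: IdentityKind
    identifier: str


def select_identity(
    action: Action, tenant_id: str, initiating_user: Optional[str]
) -> IdentityContext:
    if action.modifies_user_resource:
        # User-owned writes must be attributable, so never fall back silently.
        if initiating_user is None:
            raise PermissionError(f"{action.name} requires a delegated user identity")
        return IdentityContext(IdentityKind.USER, initiating_user)
    # Background or read-only work runs under the tenant's service identity.
    return IdentityContext(IdentityKind.SERVICE, tenant_id)
```

Refusing to downgrade a user-bound write to the service identity is the design choice that keeps attribution intact when a delegation is missing.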

Hybrid access models are well-suited for AI agents that:

- Execute multi-stage workflows

- Mix background processing with user-impacting writes

- Operate at enterprise scale

- Require both reliability and audit precision

While more complex to implement, hybrid patterns reflect how autonomous AI systems actually behave in production. Identity becomes contextual rather than static, and API access patterns are defined per action rather than per system.

How Capability Tokens Reduce Blast Radius in AI Systems

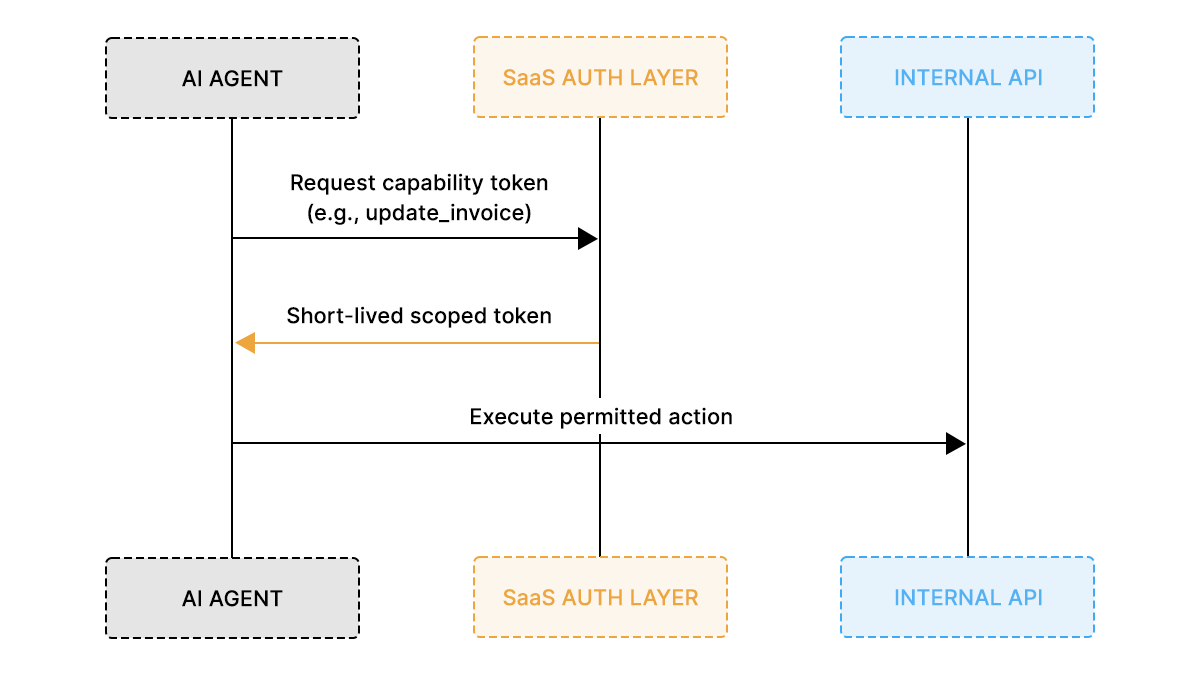

Capability tokens represent action-scoped authorization rather than identity-scoped authorization. Traditional OAuth access tokens usually represent a user or service identity and carry scopes that allow multiple related operations. Capability tokens work differently: they encode permission for a specific action on a particular resource and expire quickly.

Instead of granting an AI agent broad scopes or system-level permissions, the platform issues a short-lived token that authorizes only a single operation. For example, before allowing an AI agent to approve a high-value invoice, a system might issue a token that permits approval of that specific invoice and expires within minutes. If the agent needs to perform another action, it must request a new capability token.

The interaction typically follows an action-scoped authorization flow, where the agent requests a narrowly scoped token before executing a sensitive operation.

Because authority is tied to a specific action rather than a persistent identity, capability tokens significantly reduce blast radius. Even if a token is intercepted or misused, it cannot be reused for unrelated operations or extended workflows.
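To make the idea concrete, here is a minimal sketch of an action-scoped token built with an HMAC signature from the Python standard library. The claim names (`act`, `res`, `exp`), the hard-coded `SECRET`, and both function names are assumptions for illustration. The sketch binds a token to one action, one resource, and an expiry, but it does not enforce single use, which would additionally require a server-side nonce store.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import Optional

SECRET = b"demo-signing-key"  # assumption: a real system loads this from a secret manager


def issue_capability(
    action: str, resource: str, ttl_seconds: int = 300, now: Optional[float] = None
) -> str:
    now = time.time() if now is None else now
    claims = {"act": action, "res": resource, "exp": now + ttl_seconds}
    payload = base64.urlsafe_b64encode(json.dumps(claims, sort_keys=True).encode())
    sig = hmac.new(SECRET, payload, hashlib.sha256).hexdigest()
    return f"{payload.decode()}.{sig}"


def verify_capability(
    token: str, action: str, resource: str, now: Optional[float] = None
) -> bool:
    now = time.time() if now is None else now
    try:
        payload, sig = token.rsplit(".", 1)
    except ValueError:
        return False
    expected = hmac.new(SECRET, payload.encode(), hashlib.sha256).hexdigest()
    if not hmac.compare_digest(sig, expected):
        return False  # tampered or signed with a different key
    claims = json.loads(base64.urlsafe_b64decode(payload))
    # The token is valid only for the exact action and resource it names.
    return claims["act"] == action and claims["res"] == resource and now < claims["exp"]
```

A token issued for approving one invoice fails verification when presented for a different invoice or after its expiry, which is the property that limits blast radius.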

Capability tokens are particularly effective in:

- Internal microservice architectures

- Tool-based AI agent systems

- High-risk write operations

- Zero-trust environments

- Enterprise SaaS platforms with strict compliance requirements

By limiting authority to the minimum required operation, capability tokens shift API access design from identity-based permission models to action-level authorization, aligning well with modern AI SaaS security architectures. Similar patterns appear in standards such as OAuth 2.0 Token Exchange (RFC 8693) for down-scoped delegation, Macaroons for caveated authorization tokens, and Biscuit tokens, which implement decentralized capability-based authorization.
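As an illustration of down-scoping with Token Exchange, the sketch below builds the form body of an RFC 8693 request that trades a broader access token for one limited to a single scope and resource. The parameter names and URNs come from the RFC; the function name, scope value, and resource URL are hypothetical, and whether an authorization server honors the request depends on its policy.

```python
from urllib.parse import urlencode


def build_token_exchange_body(subject_token: str, scope: str, resource: str) -> str:
    """Form body for an RFC 8693 token exchange that requests a narrower token."""
    return urlencode(
        {
            "grant_type": "urn:ietf:params:oauth:grant-type:token-exchange",
            "subject_token": subject_token,
            "subject_token_type": "urn:ietf:params:oauth:token-type:access_token",
            "scope": scope,        # the single capability being requested
            "resource": resource,  # the API the new token is intended for
        }
    )


body = build_token_exchange_body(
    "broad-access-token", "invoice:approve", "https://billing.example.com/invoices/8841"
)
```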

Which API Access Pattern Creates the Lowest Security Risk?

Security tradeoffs become clearer when evaluated side by side. Each API access pattern for AI agents optimizes for a different balance between attribution, operational complexity, and blast radius. No single model is universally superior; risk depends on workload type, tenant sensitivity, and compliance requirements.

The comparison below highlights the dominant tradeoffs in modern AI SaaS API design:

Delegated access provides strong attribution and tenant isolation but increases lifecycle complexity at scale. Service accounts reduce operational friction but concentrate authority, increasing systemic risk. Hybrid models introduce contextual identity, reducing blast radius while preserving reliability. Capability tokens provide the narrowest authority boundary but require deliberate API design.

The lowest-risk model depends on how narrowly authority is scoped and how effectively revocation and audit are enforced. In practice, mature AI SaaS platforms rarely rely on a single pattern. They intentionally combine models, aligning identity boundaries with workflow stages.

The next step is choosing the appropriate pattern based on workload characteristics rather than convenience.

How B2B SaaS Teams Should Choose the Right Access Model

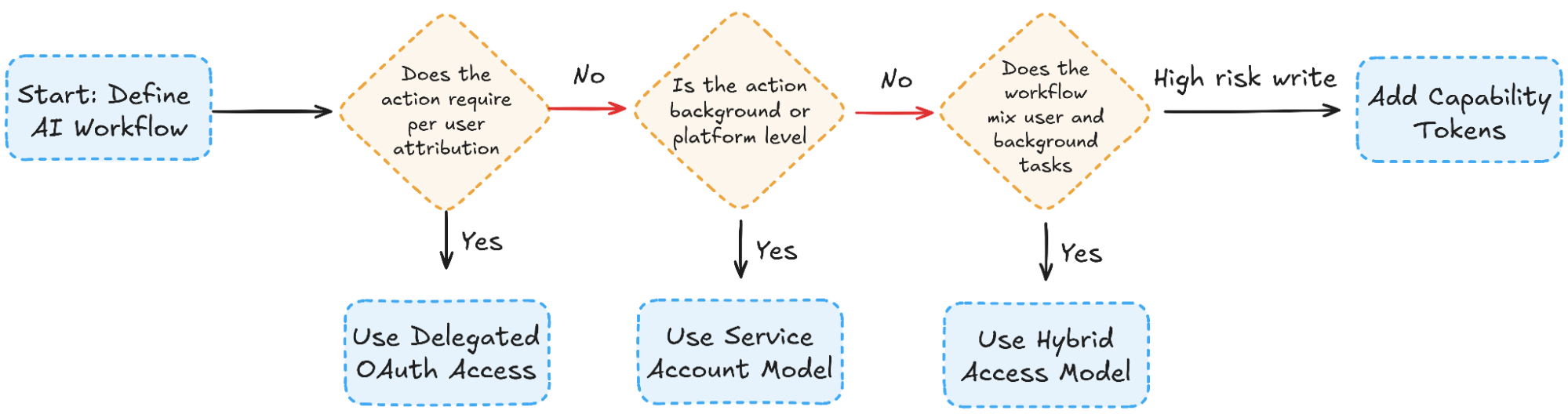

Selecting an API access pattern for AI agents should begin with workload classification rather than implementation convenience. The sensitivity of the action, audit requirements, and tenant isolation needs determine the identity boundary. The decision can be structured as a progressive evaluation instead of a binary choice.

The following flow captures the selection logic used in AI agents:

This structure highlights three architectural filters:

- Attribution requirement: Does the API action need to be attributable to a specific user or organization identity for audit, compliance, or accountability purposes?

- Execution context: Is the workflow background-oriented or user-triggered?

- Risk sensitivity: Does the action modify critical or compliance-sensitive data?

The result is rarely a single pattern across the entire system. A mature SaaS platform may use service accounts for ingestion pipelines, delegated access for user-facing updates, and capability tokens for high-risk financial operations. The access model becomes contextual rather than global, with identity boundaries aligned to workflow stages.

When framed this way, the decision process becomes predictable and repeatable across teams. The identity boundary is no longer inherited from legacy OAuth defaults; it is chosen deliberately per workflow stage.
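The three filters can be expressed as a short, repeatable classification function. This is a sketch under stated assumptions: the `Workload` fields and the evaluation order (risk first, then attribution, then execution context) are one plausible reading of the filters, not a prescribed algorithm.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workload:
    name: str
    needs_user_attribution: bool
    background: bool
    high_risk_write: bool


def choose_access_pattern(w: Workload) -> str:
    # Risk sensitivity: critical writes get the narrowest authority.
    if w.high_risk_write:
        return "capability_token"
    # Attribution requirement: the action must map to a specific user.
    if w.needs_user_attribution:
        return "delegated_oauth"
    # Execution context: unattended work runs under a system identity.
    if w.background:
        return "service_account"
    # User-triggered work defaults to the user's own delegation.
    return "delegated_oauth"


steps = [
    Workload("ingest_events", False, True, False),
    Workload("update_crm_record", True, False, False),
    Workload("approve_invoice", True, False, True),
]
plan = {w.name: choose_access_pattern(w) for w in steps}
```

When the steps of one workflow map to different patterns, as they do in this example, the workflow as a whole is effectively a hybrid model.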

Implementing API Access Patterns for AI Agent Workflows

The DevOps Assistant agent, described in our blog on building a GitHub, Linear, and Slack automation workflow, provides a practical example of how API access patterns operate in production. Instead of manually managing OAuth tokens for each integration, the system uses Scalekit’s Agent Auth to enforce identity boundaries through a unified connector at every workflow step.

The core of this implementation is the ScalekitConnector, which wraps the official ScalekitClient and centralizes:

- OAuth authorization flow generation

- Connected account handling

- Tool execution

- Retry logic and error handling

- Tenant-bound identity resolution

This ensures API access patterns are enforced structurally rather than scattered across business logic.

The snippets below highlight how identity context is selected and enforced at runtime. They focus on the identity boundary rather than the surrounding application logic. The complete DevOps Assistant implementation, including integration wiring and runtime orchestration, is available in the public repository for readers who want to explore the full system end-to-end.

1. Delegated Access: GitHub Acting on Behalf of a User

When a user connects to GitHub, the agent generates an authorization URL:

This represents Direct Delegated Access:

- identifier=user_identifier

- Tokens bound to that user

- OAuth consent required

- Revocation affects only that user

The AI agent does not store raw tokens. It executes GitHub actions through Scalekit:

Here:

- tool_name="github_update_issue"

- identifier=user_identifier

The action is executed under that user’s delegated authority. This aligns directly with the Delegated OAuth pattern described earlier.
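The shape of such a delegated call can be sketched without depending on any SDK. Everything in the block below is a hypothetical stand-in: `ToolBackend`, `ConnectorSketch`, `RecordingBackend`, and the `tool_input` fields are illustrative names, not the Scalekit API or the DevOps Assistant's actual code. Only the `tool_name` and `identifier` values mirror the ones named above.

```python
from typing import Any, Dict, List, Protocol


class ToolBackend(Protocol):
    """Hypothetical stand-in for the identity layer's tool-execution client."""

    def execute(
        self, tool_name: str, identifier: str, tool_input: Dict[str, Any]
    ) -> Dict[str, Any]: ...


class ConnectorSketch:
    """Routes calls through the backend; the agent never sees a raw token."""

    def __init__(self, backend: ToolBackend) -> None:
        self._backend = backend

    def execute_as_user(
        self, user_identifier: str, tool_name: str, tool_input: Dict[str, Any]
    ) -> Dict[str, Any]:
        if not user_identifier:
            raise ValueError("delegated calls require a user identifier")
        return self._backend.execute(
            tool_name=tool_name, identifier=user_identifier, tool_input=tool_input
        )


class RecordingBackend:
    """In-memory stub used only to demonstrate the call shape."""

    def __init__(self) -> None:
        self.calls: List[Dict[str, Any]] = []

    def execute(self, tool_name, identifier, tool_input):
        self.calls.append({"tool": tool_name, "identifier": identifier})
        return {"ok": True}


backend = RecordingBackend()
connector = ConnectorSketch(backend)
connector.execute_as_user(
    "user_123", "github_update_issue", {"issue_number": 42, "state": "closed"}
)
```

The agent passes only an identifier and a tool name; resolving that identifier to a stored, refreshed token is left to the backend.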

2. Service Account Pattern: Slack Workspace-Level Authority

Slack notifications in the DevOps assistant operate differently. These messages do not need per-user attribution. They run under a workspace-level identity.

The same execution method is used:

But the key difference is the identifier.

Instead of a user ID, it may represent:

- A tenant ID

- A workspace identifier

- A shared integration identity

This becomes a Service Account Model:

- Reduced token sprawl

- Centralized authority

- Scope limited to chat:write

- Tenant isolation enforced via identifier binding

The access pattern varies with the identifier context.
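Identifier binding can be pictured as a guard that runs before any tool call. The sketch below is illustrative only: `BoundIdentifier`, its `tenant:subject` key format, and `resolve_identifier` are assumptions, not the way Scalekit represents identifiers internally.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BoundIdentifier:
    tenant_id: str
    subject: str  # "workspace" for a shared integration, or a user ID

    def as_key(self) -> str:
        return f"{self.tenant_id}:{self.subject}"


def resolve_identifier(request_tenant: str, identifier: BoundIdentifier) -> str:
    # Refuse to execute with an identifier that belongs to another tenant.
    if identifier.tenant_id != request_tenant:
        raise PermissionError("identifier belongs to a different tenant")
    return identifier.as_key()


slack_identity = BoundIdentifier(tenant_id="acme", subject="workspace")
github_identity = BoundIdentifier(tenant_id="acme", subject="user_123")
```

The same guard covers both cases: a workspace-level identifier yields the service account pattern, and a user-level identifier yields delegated access.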

3. Hybrid Model: Linear Issue Creation Based on GitHub Labels

The DevOps assistant dynamically links GitHub PRs to Linear issues:

When creating or updating Linear tickets, execution still flows through:

In other cases the same operation may execute under a tenant-level integration identity:

This illustrates the hybrid access pattern, where the identity used for API execution changes depending on the workflow context:

- GitHub: delegated user identity

- Linear: user-bound OR tenant-bound identity

- Slack: service identity

The agent determines the correct identity context for each step in the workflow, while the execution layer handles token resolution and authorization.

4. Identity as Infrastructure: Centralized Execution Layer

All external calls route through one method:

This abstraction provides:

- Retry handling

- Backoff logic

- Scoped authority

- Token refresh managed by Scalekit

- Revocation-aware execution

Instead of embedding OAuth logic across integrations, the agent delegates identity enforcement to Scalekit.
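What revocation-aware retry handling might look like is sketched below. The exception classes and `execute_with_retry` are hypothetical, and how a real identity layer signals revocation is an assumption. The point is the control flow: transient failures back off exponentially, while a revoked authorization is surfaced immediately and never retried.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class TransientError(Exception):
    """Timeouts, rate limits, and similar retryable failures."""


class AccessRevoked(Exception):
    """Consent or credentials were withdrawn; retrying cannot succeed."""


def execute_with_retry(
    call: Callable[[], T],
    attempts: int = 4,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    for attempt in range(attempts):
        try:
            return call()
        except AccessRevoked:
            raise  # revocation is terminal: surface it, do not retry
        except TransientError:
            if attempt == attempts - 1:
                raise  # retries exhausted
            sleep(base_delay * 2**attempt)  # exponential backoff: 0.5s, 1s, 2s
    raise RuntimeError("unreachable")
```

Injecting `sleep` keeps the function testable without real delays, and distinguishing the two error types prevents a revoked tenant from generating a storm of doomed retries.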

The key architectural shift is subtle but critical. The AI agent no longer manages raw tokens or refresh logic directly. Instead, it selects the appropriate identity context for each workflow step, while Scalekit enforces scope boundaries, tenant isolation, and token lifecycle management at the infrastructure layer.

Conclusion: Choosing the Right API Access Pattern for AI Agents

API access patterns determine how AI agents represent authority when interacting with external systems. In this article, we examined four common models used in AI SaaS platforms: delegated OAuth access, service accounts, hybrid identity models, and capability tokens. Each pattern addresses a different balance between auditability, operational simplicity, and security risk.

Delegated OAuth provides strong user attribution but introduces token lifecycle complexity. Service accounts simplify background execution but concentrate authority under a system identity. Hybrid models enable contextual switching between identities, while capability tokens reduce blast radius by limiting permissions to a specific action.

In practice, mature AI SaaS platforms rarely rely on a single authorization model. Instead, they combine these patterns across different workflow stages, aligning identity boundaries with the sensitivity of the operation. Designing these boundaries carefully is what ultimately determines whether AI agents remain secure, auditable, and reliable in production systems.

Frequently Asked Questions

1. What are API access patterns for AI agents?

API access patterns define how autonomous AI systems authenticate and authorize actions when interacting with internal and external APIs. They determine identity representation, scope enforcement, token lifecycle handling, and audit traceability in multi-tenant environments.

2. Why do AI agents require different OAuth considerations than web applications?

Traditional OAuth assumes short-lived, user-driven sessions. AI agents execute background tasks, retry operations, and span multiple services without continuous human interaction. This breaks assumptions around token timing, revocation, and authority boundaries.

3. What is the main risk of using a service account model for AI agents?

The primary risk is a centralized blast radius. If a service credential is over-scoped or compromised, it can affect all tenants or workflows that rely on that identity. Audit attribution also becomes less granular compared to delegated user models.

4. When should delegated OAuth be preferred?

Delegated OAuth is appropriate when actions must be attributable to individual users, especially in enterprise environments requiring strict audit trails and user-level consent boundaries.

5. What is a hybrid access model in AI SaaS design?

A hybrid model combines delegated user identities and service-level credentials. The agent selects identity context per workflow stage, allowing background tasks to use system authority while user-impacting actions remain attributable.

6. How do scoped or least-privilege models reduce risk?

By limiting authority to narrowly defined scopes, systems prevent token reuse across unrelated actions. This reduces blast radius and improves compliance alignment with least-privilege principles.

7. How does Scalekit support delegated access?

Scalekit uses connected accounts bound to user identifiers. OAuth flows are orchestrated centrally, and token storage, refresh, and revocation are handled automatically within the identity layer.

8. How does Scalekit support service or tenant-level access?

Connected accounts can be bound to organization identifiers rather than to users. This enables workspace-level integrations while preserving tenant isolation and scoped authority.

9. How does Scalekit handle token refresh and revocation?

Scalekit manages the token lifecycle internally. Access tokens are refreshed before expiry, and the connected account status reflects revocation or invalidation events. Agents execute tools without manually handling refresh logic.

10. Does Scalekit replace OAuth?

No. Scalekit builds on OAuth and related identity standards. It abstracts orchestration, storage, and enforcement layers so developers can implement secure API access patterns without embedding token logic across application code.