Why Frontegg Starts to Feel Constraining for B2B AI Apps

Frontegg didn't become a popular B2B SaaS auth platform by accident. It was built org-native from the start: a first-class Account/Tenant object, org context embedded in tokens, per-tenant SSO and SCIM, a polished self-service admin portal, and a low-code Flows builder that lets non-engineers configure auth behavior without filing tickets. For teams that need a complete, fast-to-ship identity layer for a multi-tenant product, it delivers.

What has changed is the shape of the product that identity needs to cover.

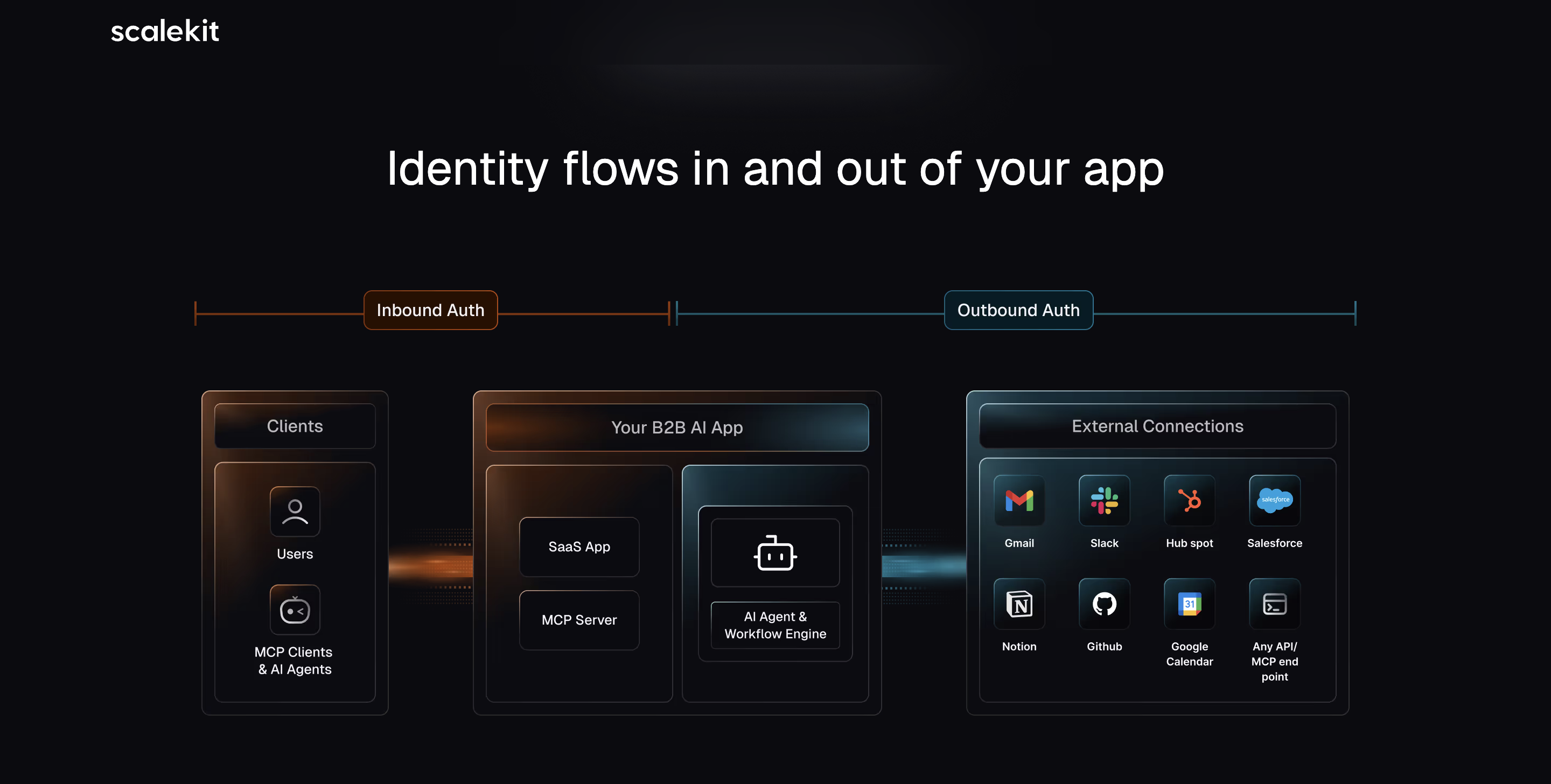

B2B apps in 2026 increasingly ship AI surfaces alongside the user-facing product: agents that act on behalf of users, MCP servers that expose product functionality to external AI clients, and background workflows that execute without a human in the loop. When those surfaces appear, the evaluation shifts. The question is no longer just whether your identity layer handles users and orgs well; it's whether it handles agents, MCP clients, and outbound delegated auth as part of the same coherent system, or whether each new surface requires its own product, its own configuration, and its own coverage boundary.

That's where teams building B2B AI apps start to feel the friction.

What Frontegg Built, and How It Extended

Frontegg's core platform is genuinely org-native. The data model is Tenant → users and groups → tenant-aware JWTs. Org context lives natively in tokens. RBAC is defined per tenant. Session policy is configurable per tenant. The admin portal gives end-customers self-service control over their own org's identity settings. For the user-facing product surface, this is a complete and well-executed system.
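
To make "org context lives natively in tokens" concrete, here is a minimal Python sketch of the check a tenant-aware token enables. It assumes the JWT has already been verified by a JWT library. The claim names (`tenant_id`, `roles`) and the `TENANT_ROLES` policy table are hypothetical illustrations, not Frontegg's actual token schema.

```python
from collections.abc import Mapping
from typing import Any

# Hypothetical per-tenant RBAC policy: tenant ID -> role -> permissions.
# In an org-native platform this policy lives in the identity layer.
TENANT_ROLES: dict[str, dict[str, set[str]]] = {
    "acme": {"admin": {"reports:read", "reports:write"}, "viewer": {"reports:read"}},
    "globex": {"viewer": {"reports:read"}},
}


def is_allowed(claims: Mapping[str, Any], tenant_id: str, permission: str) -> bool:
    """Check an already-verified token's claims against the target tenant.

    Assumes signature, expiry, and audience were validated upstream;
    `tenant_id` and `roles` are illustrative claim names only.
    """
    # Org context travels in the token, so a token issued for one
    # tenant never authorizes a request against another tenant.
    if claims.get("tenant_id") != tenant_id:
        return False
    # Roles are resolved against that tenant's own RBAC definitions.
    roles = TENANT_ROLES.get(tenant_id, {})
    return any(permission in roles.get(role, set()) for role in claims.get("roles", []))
```

With claims `{"sub": "user_123", "tenant_id": "acme", "roles": ["viewer"]}`, `is_allowed(claims, "acme", "reports:read")` returns `True`, while the same token checked against `"globex"` returns `False` because the tenant in the token doesn't match.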

When AI surfaces arrived, Frontegg extended the platform additively. Agent auth (outbound, delegated, acting on behalf of users) is handled through Frontegg.ai, a separate product for agentic workflows that also expects you to build your agents on it. Inbound MCP auth, for SaaS companies that want to expose their product to external AI clients like Claude, ChatGPT, or Gemini, is handled through AgentLink, a separate product with a hosted MCP server, fine-grained Agent IAM, step-up auth, and human-in-the-loop approvals.

Each product addresses a real surface. The question isn't whether they work; it's what the coverage boundaries are, and what it costs to operate them as a stack.

Three Places the Friction Accumulates

The coverage boundary on MCP auth

AgentLink is built for SaaS companies that want to expose their APIs to AI clients through Frontegg's infrastructure, and it works well within that scope. The boundary appears when the AI client wasn't built with Frontegg, or when you want to expose a standard MCP endpoint that any compliant AI client can discover and authenticate against without routing through AgentLink specifically.

AgentLink is Frontegg's hosted MCP gateway — a managed infrastructure layer that sits between your product and the AI clients connecting to it. For teams that want a fully managed MCP server with Agent IAM, step-up auth, and human-in-the-loop approvals built in, that's a real offering. The constraint is architectural: AgentLink has to be in place for MCP auth to work. There's no standalone MCP auth — no way to protect an MCP server you control directly, running on your own infrastructure, without routing through AgentLink. If your architecture calls for an MCP endpoint you own end-to-end, or if you want to add auth to an existing MCP server without adopting a managed gateway, that path isn't available.

For teams building MCP servers that they expect arbitrary external AI clients to connect to, that boundary matters.

The stack problem

Even within Frontegg's own ecosystem, agent auth (Frontegg.ai), MCP auth (AgentLink), and the core platform are separate product surfaces. Configuration, policy, and visibility aren't unified across them. When an agent acts on behalf of a user inside an org, which is the delegation pattern at the heart of most B2B AI features, that relationship has to be expressed across products rather than as a native concept in a shared token model.

This friction isn't unique to Frontegg. Every platform that extended to AI features after being built for human-facing auth faces some version of it. Operationally, it adds up to an identity layer that requires active coordination to stay coherent as the product surface grows.

Modularity, or the lack of it

Frontegg is designed as a full-stack adoption: user auth, admin portal, SCIM, RBAC, and the AI products all within the same ecosystem. That's the right choice for teams starting from scratch who want to ship a complete identity layer quickly.

It becomes a constraint for teams who already have user auth in place — Auth0, Cognito, a custom system — and want to introduce enterprise SSO, agent auth, or MCP auth without replacing what's working. Frontegg's architecture assumes full-stack adoption. Bolting on just the enterprise auth layer, or just the agent auth layer, on top of a different primary auth system isn't a documented path.

For teams evaluating a partial adoption — start with SSO, layer on agent auth later, leave the login surface alone — that coupling is worth understanding before committing.

What a Unified Model Looks Like

The three friction points share a common shape: they're all symptoms of a platform that expanded to cover new surfaces by adding products rather than by extending a unified primitive.

The alternative is an identity model where the organization is the root object, and every actor type — user, agent, M2M client, MCP client — is resolved natively within that org context from the start. Not as an extension. Not through a separate product. As part of the same data model, the same token structure, the same policy layer.

In that model, when an agent acts on behalf of a user inside an org, the delegation is expressed in the token, not reconstructed by coordinating across products. When an external AI client authenticates to your MCP server, it does so through the same org-aware policy that governs users and agents, and because the auth layer implements open standards, any compliant client can connect without a platform-specific dependency.
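
As a rough illustration of delegation "expressed in the token", the sketch below reads a verified token that uses the `act` (actor) claim from RFC 8693 (OAuth 2.0 Token Exchange): `sub` is the user the action is performed for, and `act.sub` is the agent doing the acting. The `org_id` claim and the helper names are hypothetical; real platforms may name and nest these differently.

```python
from collections.abc import Mapping
from dataclasses import dataclass
from typing import Any, Optional


@dataclass(frozen=True)
class Principal:
    org_id: str              # org context carried in the token
    user_id: str             # the human the action is performed for
    actor_id: Optional[str]  # the agent acting for them, if any


def resolve_principal(claims: Mapping[str, Any]) -> Principal:
    """Read delegation directly from already-verified token claims.

    `sub` and the RFC 8693 `act` claim are standard; `org_id` is a
    hypothetical stand-in for the provider's org-context claim.
    """
    actor = claims.get("act")
    return Principal(
        org_id=claims["org_id"],
        user_id=claims["sub"],
        actor_id=actor.get("sub") if isinstance(actor, Mapping) else None,
    )


def audit_line(p: Principal) -> str:
    """Render who did what, for whom, in which org, from one token."""
    who = f"{p.actor_id} on behalf of {p.user_id}" if p.actor_id else p.user_id
    return f"[{p.org_id}] {who}"
```

Because both identities arrive in one token, an audit entry such as `[org_acme] agent_support_bot on behalf of user_42` falls out directly, with no cross-product lookup.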

The gap is most meaningful in three areas: MCP client coverage, modularity of enterprise auth adoption, and whether the identity layer is itself a first-class API surface.

How Scalekit Is Built

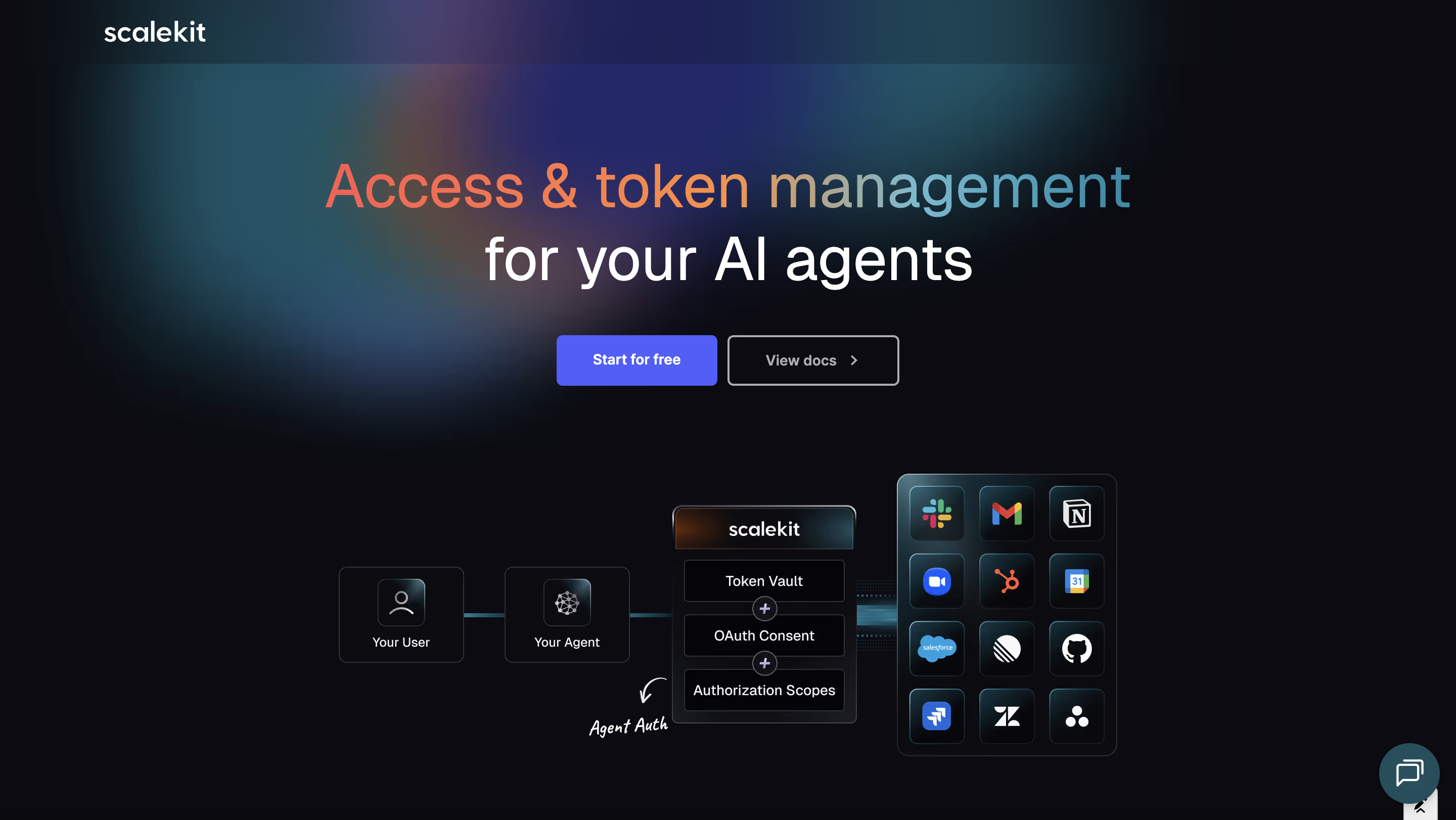

Scalekit starts from the same org-native premise as Frontegg (organization as the root object, org context in every token) but extends it to cover agents and MCP clients within the same model rather than through separate products.

Agent auth (delegated, acting-on-behalf-of) and inbound MCP auth aren't product add-ons in Scalekit. They're the same identity layer applied to different actor types. The MCP auth implementation uses Client ID Metadata Documents (CIMD) and dynamic client discovery, which means any standard MCP-compliant AI client (Claude, ChatGPT, Gemini, or anything built to the spec) can authenticate without any dependency on Scalekit's own agent stack.
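
For readers unfamiliar with CIMD: under the OAuth Client ID Metadata Document draft, which recent revisions of the MCP authorization spec adopt, a client's `client_id` is itself an HTTPS URL, and the authorization server fetches a JSON metadata document from that URL instead of requiring prior registration. The sketch below shows a simplified subset of the checks a server might apply to a document it has already fetched. The function names and example URLs are hypothetical, and a production implementation also needs fetch limits, caching, and SSRF protections.

```python
from collections.abc import Mapping
from typing import Any
from urllib.parse import urlsplit


def is_valid_client_id_url(client_id: str) -> bool:
    """Simplified CIMD URL rules: HTTPS, a real path, no fragment, no credentials."""
    parts = urlsplit(client_id)
    segments = parts.path.split("/")
    return (
        parts.scheme == "https"
        and bool(parts.netloc)
        and parts.path not in ("", "/")
        and not parts.fragment
        and parts.username is None
        and parts.password is None
        and "." not in segments
        and ".." not in segments
    )


def validate_cimd(client_id: str, metadata: Mapping[str, Any], redirect_uri: str) -> bool:
    """Check a metadata document already fetched from the `client_id` URL."""
    if not is_valid_client_id_url(client_id):
        return False
    # The document must name itself: its client_id equals the URL it came from.
    if metadata.get("client_id") != client_id:
        return False
    # The requested redirect URI must exactly match a registered one.
    uris = metadata.get("redirect_uris")
    return isinstance(uris, list) and redirect_uri in uris
```

Because the checks depend only on the URL and the document it serves, any client that publishes a conforming document can identify itself, which is the property that lets arbitrary spec-compliant clients connect without a prior registration step.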

The enterprise auth layer is also designed for modular adoption. Scalekit can be introduced alongside an existing auth provider — Auth0, Cognito, a custom system — handling SSO, SCIM, and agent auth without replacing production login infrastructure. Teams that need to move carefully around existing systems can adopt capability by capability.
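
One common way to run two auth systems side by side is to route logins by email domain: orgs that have configured enterprise SSO go to the SSO layer, and everyone else continues through the existing provider. The sketch below assumes that pattern; `SSO_CONNECTIONS`, the connection IDs, and `route_login` are hypothetical names, not Scalekit's API.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class LoginRoute:
    provider: str                 # "enterprise_sso" or "existing_auth"
    connection_id: Optional[str]  # SSO connection to use, if any


# Hypothetical mapping from verified email domains to SSO connections.
# Only orgs that have set up enterprise SSO appear here.
SSO_CONNECTIONS = {"acme.com": "conn_acme_okta", "globex.com": "conn_globex_entra"}


def route_login(email: str) -> LoginRoute:
    """Send SSO-enabled orgs to the enterprise SSO layer; everyone else
    keeps using the existing login (Auth0, Cognito, or a custom system)."""
    domain = email.rsplit("@", 1)[-1].lower()
    connection = SSO_CONNECTIONS.get(domain)
    if connection:
        return LoginRoute("enterprise_sso", connection)
    return LoginRoute("existing_auth", None)
```

`route_login("jane@ACME.com")` resolves to the `conn_acme_okta` SSO connection, while `route_login("bob@gmail.com")` falls through to the existing login unchanged. In practice the domain list would come from verified org domains rather than a hard-coded dict.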

On the MCP server: Scalekit ships its own MCP server, and that detail is worth unpacking. Practically, it means developers can configure org settings, test SSO connections, and inspect provisioning events directly from Cursor, Windsurf, or any MCP-compatible IDE, without switching to a separate dashboard. Architecturally, it signals something about how the platform treats identity: when the identity layer is itself a first-class API surface that implements the same standards it exposes to customers, it tends to stay coherent as those standards evolve. A platform that dogfoods its own auth story is a different kind of product from one that manages auth through a UI.

The Question Worth Asking Now

The question in 2026 isn't whether your identity platform handles orgs and users well. Most platforms do. The question is whether it handles agents, MCP clients, and outbound delegated auth within the same coherent model as users and orgs, or whether each new AI surface requires another product in the stack, another coverage boundary to reason about, and another seam to maintain.

For teams where AI is a feature, the additive model may be sufficient. For teams where AI is becoming the product's primary value surface, the seams become load-bearing earlier than expected.

FAQs

Is Frontegg unsuitable for B2B AI apps?

Not categorically. For teams whose AI features stay within the Frontegg ecosystem — agents built through Frontegg.ai, MCP clients connecting through AgentLink — the coverage is real. The friction appears at the boundaries: agents built on other frameworks, external AI clients that need to connect through standard MCP endpoints rather than AgentLink specifically, or teams that want to adopt enterprise auth capabilities without migrating their full auth stack to Frontegg.

Does adopting Scalekit mean replacing Frontegg?

No. Scalekit is designed for modular adoption alongside an existing auth provider. The typical path is to introduce Scalekit for enterprise SSO and SCIM first, since connection-based pricing is substantially lower at scale, then layer in agent auth and MCP auth as those surfaces mature. Frontegg can continue handling user login, the admin portal, and TOTP/SMS MFA where those are required.

What's the practical difference between AgentLink and CIMD-based MCP auth?

AgentLink provides complete hosted MCP infrastructure (an MCP server, Agent IAM, step-up auth, human-in-the-loop approvals, and agent analytics) for AI clients that connect through Frontegg's system. CIMD-based auth implements open standards that let any compliant AI client discover and authenticate to your MCP endpoint without routing through a specific provider's infrastructure. The tradeoff: AgentLink gives you more built-in tooling for the clients it supports, while CIMD gives you broader coverage of the open MCP ecosystem by default.
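
The "discover" half of that standards-based flow can be sketched for an MCP server you run yourself. Under RFC 9728 (OAuth 2.0 Protected Resource Metadata), which the MCP authorization spec builds on, the server answers an unauthenticated request with a 401 whose `WWW-Authenticate` header points to a metadata document naming the authorization server it trusts. The example URLs and helper names below are illustrative assumptions, and the sketch omits the HTTP framework and token validation.

```python
import json
from urllib.parse import urlsplit, urlunsplit

WELL_KNOWN = "/.well-known/oauth-protected-resource"


def metadata_url(resource: str) -> str:
    """Insert the RFC 9728 well-known path between the host and the resource path."""
    parts = urlsplit(resource)
    path = WELL_KNOWN + parts.path.rstrip("/")
    return urlunsplit((parts.scheme, parts.netloc, path, parts.query, ""))


def metadata_document(resource: str, authorization_server: str) -> str:
    """Protected resource metadata, served at metadata_url(resource)."""
    return json.dumps({
        "resource": resource,
        "authorization_servers": [authorization_server],
        "bearer_methods_supported": ["header"],
    })


def unauthorized_response(resource: str) -> tuple[int, dict[str, str]]:
    """Status and headers for a request that arrives without a valid token."""
    challenge = f'Bearer resource_metadata="{metadata_url(resource)}"'
    return 401, {"WWW-Authenticate": challenge}
```

For `https://mcp.example.com/mcp`, the challenge points clients to `https://mcp.example.com/.well-known/oauth-protected-resource/mcp`; from there a compliant client finds the authorization server and continues with standard OAuth.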

Why does Scalekit having its own MCP server matter?

Two reasons. Practically, it means developers can manage identity configuration from inside the development environment: same tools, no context switch. Architecturally, a platform that implements the same MCP standards it exposes to customers treats identity as a first-class API surface rather than a UI-first product. That distinction tends to matter more as the AI surfaces in the product grow.
